之前嘗試了從0到1復現斯坦福羊駝(Stanford Alpaca 7B),Stanford Alpaca 是在 LLaMA 整個模型上微調,即對預訓練模型中的所有參數都進行微調(full fine-tuning)。但該方法對于硬件成本要求仍然偏高且訓練低效。

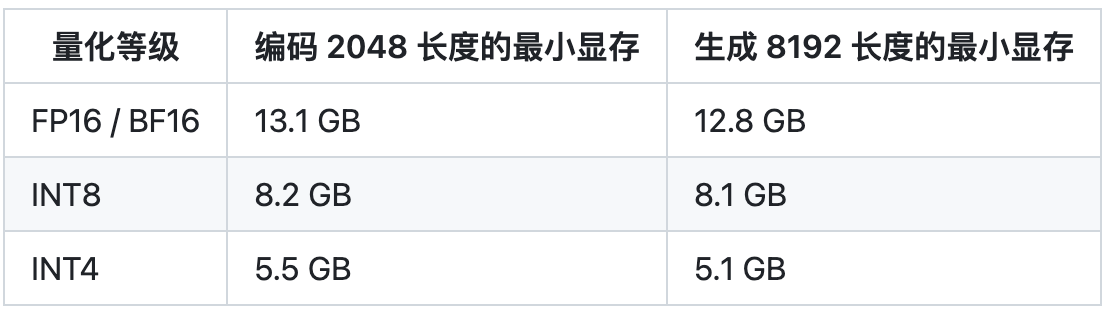

因此, Alpaca-Lora 則是利用 Lora 技術,在凍結原模型 LLaMA 參數的情況下,通過往模型中加入額外的網絡層,并只訓練這些新增的網絡層參數。由于這些新增參數數量較少,這樣不僅微調的成本顯著下降(使用一塊 RTX 4090 顯卡,只用 5 個小時就訓練了一個與 Alpaca 水平相當的模型,將這類模型對算力的需求降到了消費級),還能獲得和全模型微調(full fine-tuning)類似的效果。

LoRA 技術原理

image.png

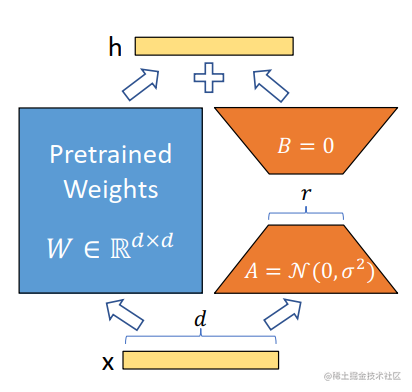

image.pngLoRA 的原理其實并不復雜,它的核心思想是在原始預訓練語言模型旁邊增加一個旁路,做一個降維再升維的操作,來模擬所謂的 intrinsic rank(預訓練模型在各類下游任務上泛化的過程其實就是在優化各類任務的公共低維本征(low-dimensional intrinsic)子空間中非常少量的幾個自由參數)。訓練的時候固定預訓練語言模型的參數,只訓練降維矩陣 A 與升維矩陣 B。而模型的輸入輸出維度不變,輸出時將 BA 與預訓練語言模型的參數疊加。用隨機高斯分布初始化 A,用 0 矩陣初始化 B。這樣能保證訓練開始時,新增的通路BA=0從,而對模型結果沒有影響。

在推理時,將左右兩部分的結果加到一起即可,h=Wx+BAx=(W+BA)x,所以,只要將訓練完成的矩陣乘積BA跟原本的權重矩陣W加到一起作為新權重參數替換原始預訓練語言模型的W即可,不會增加額外的計算資源。

LoRA 的最大優勢是速度更快,使用的內存更少;因此,可以在消費級硬件上運行。

下面,我們來嘗試使用Alpaca-Lora進行參數高效模型微調。

環境搭建

基礎環境配置如下:

- 操作系統: CentOS 7

- CPUs: 單個節點具有 1TB 內存的 Intel CPU,物理CPU個數為64,每顆CPU核數為16

- GPUs: 8 卡 A800 80GB GPUs

- Python: 3.10 (需要先升級OpenSSL到1.1.1t版本(點擊下載OpenSSL),然后再編譯安裝Python),點擊下載Python

- NVIDIA驅動程序版本: 515.65.01,根據不同型號選擇不同的驅動程序,點擊下載。

- CUDA工具包: 11.7,點擊下載

- NCCL: nccl_2.14.3-1+cuda11.7,點擊下載

- cuDNN: 8.8.1.3_cuda11,點擊下載

上面的NVIDIA驅動、CUDA、Python等工具的安裝就不一一贅述了。

創建虛擬環境并激活虛擬環境alpara-lora-venv-py310-cu117:

cd /home/guodong.li/virtual-venv

virtualenv -p /usr/bin/python3.10 alpara-lora-venv-py310-cu117

source /home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/bin/activate

離線安裝PyTorch,點擊下載對應cuda版本的torch和torchvision即可。

pip install torch-1.13.1+cu117-cp310-cp310-linux_x86_64.whl

pip install pip install torchvision-0.14.1+cu117-cp310-cp310-linux_x86_64.whl

安裝transformers,目前,LLaMA相關的實現并沒有發布對應的版本,但是已經合并到主分支了,因此,我們需要切換到對應的commit,從源代碼進行相應的安裝。

cd transformers

git checkout 0041be5

pip install .

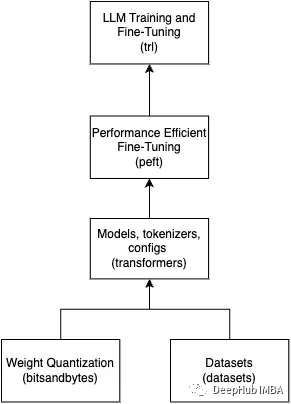

在 Alpaca-LoRA 項目中,作者提到,為了廉價高效地進行微調,他們使用了 Hugging Face 的 PEFT。PEFT 是一個庫(LoRA 是其支持的技術之一,除此之外還有Prefix Tuning、P-Tuning、Prompt Tuning),可以讓你使用各種基于 Transformer 結構的語言模型進行高效微調。下面安裝PEFT。

git clone https://github.com/huggingface/peft.git

cd peft/

git checkout e536616

pip install .

安裝bitsandbytes。

git clone git@github.com:TimDettmers/bitsandbytes.git

cd bitsandbytes

CUDA_VERSION=117 make cuda11x

python setup.py install

安裝其他相關的庫。

cd alpaca-lora

pip install -r requirements.txt

requirements.txt文件具體的內容如下:

accelerate

appdirs

loralib

black

black[jupyter]

datasets

fire

sentencepiece

gradio

模型格式轉換

將LLaMA原始權重文件轉換為Transformers庫對應的模型文件格式。具體可參考之前的文章:從0到1復現斯坦福羊駝(Stanford Alpaca 7B) 。如果不想轉換LLaMA模型,也可以直接從Hugging Face下載轉換好的模型。

模型微調

訓練的默認值如下所示:

batch_size: 128

micro_batch_size: 4

num_epochs: 3

learning_rate: 0.0003

cutoff_len: 256

val_set_size: 2000

lora_r: 8

lora_alpha: 16

lora_dropout: 0.05

lora_target_modules: ['q_proj', 'v_proj']

train_on_inputs: True

group_by_length: False

wandb_project:

wandb_run_name:

wandb_watch:

wandb_log_model:

resume_from_checkpoint: False

prompt template: alpaca

使用默認參數,單卡訓練完成大約需要5個小時,且對于GPU顯存的消耗確實很低。

1%|█▌ | 12/1170 [03:21<545, 16.83s/it]

本文為了加快訓練速度,將batch_size和micro_batch_size調大并將num_epochs調小了。

python finetune.py

--base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

--data_path '/data/nfs/guodong.li/data/alpaca_data_cleaned.json'

--output_dir '/home/guodong.li/output/lora-alpaca'

--batch_size 256

--micro_batch_size 16

--num_epochs 2

當然也可以根據需要微調超參數,參考示例如下:

python finetune.py

--base_model 'decapoda-research/llama-7b-hf'

--data_path 'yahma/alpaca-cleaned'

--output_dir './lora-alpaca'

--batch_size 128

--micro_batch_size 4

--num_epochs 3

--learning_rate 1e-4

--cutoff_len 512

--val_set_size 2000

--lora_r 8

--lora_alpha 16

--lora_dropout 0.05

--lora_target_modules '[q_proj,v_proj]'

--train_on_inputs

--group_by_length

運行過程:

python finetune.py

> --base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

> --data_path '/data/nfs/guodong.li/data/alpaca_data_cleaned.json'

> --output_dir '/home/guodong.li/output/lora-alpaca'

> --batch_size 256

> --micro_batch_size 16

> --num_epochs 2

===================================BUG REPORT===================================

Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues

================================================================================

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/cuda_setup/main.py UserWarning: WARNING: The following directories listed in your path were found to be non-existent: {PosixPath('/opt/rh/devtoolset-9/root/usr/lib/dyninst'), PosixPath('/opt/rh/devtoolset-7/root/usr/lib/dyninst')}

warn(msg)

CUDA SETUP: CUDA runtime path found: /usr/local/cuda-11.7/lib64/libcudart.so

CUDA SETUP: Highest compute capability among GPUs detected: 8.0

CUDA SETUP: Detected CUDA version 117

CUDA SETUP: Loading binary /home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/libbitsandbytes_cuda117.so...

Training Alpaca-LoRA model with params:

base_model: /data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b

data_path: /data/nfs/guodong.li/data/alpaca_data_cleaned.json

output_dir: /home/guodong.li/output/lora-alpaca

batch_size: 256

micro_batch_size: 16

num_epochs: 2

learning_rate: 0.0003

cutoff_len: 256

val_set_size: 2000

lora_r: 8

lora_alpha: 16

lora_dropout: 0.05

lora_target_modules: ['q_proj', 'v_proj']

train_on_inputs: True

group_by_length: False

wandb_project:

wandb_run_name:

wandb_watch:

wandb_log_model:

resume_from_checkpoint: False

prompt template: alpaca

Loading checkpoint shards: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 33/33 [00:10<00:00, 3.01it/s]

Found cached dataset json (/home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e)

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 228.95it/s]

trainable params: 4194304 || all params: 6742609920 || trainable%: 0.06220594176090199

Loading cached split indices for dataset at /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-d8c5d7ac95d53860.arrow and /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-4a34b0c9feb19e72.arrow

{'loss': 2.2501, 'learning_rate': 2.6999999999999996e-05, 'epoch': 0.05}

...

{'loss': 0.8998, 'learning_rate': 0.000267, 'epoch': 0.46}

{'loss': 0.8959, 'learning_rate': 0.00029699999999999996, 'epoch': 0.51}

28%|███████████████████████████████████████████▎ | 109/390 [32:48<114, 17.77s/it]

顯存占用:

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 515.105.01 Driver Version: 515.105.01 CUDA Version: 11.7 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 NVIDIA A800 80G... Off | 0000000000.0 Off | 0 |

| N/A 71C P0 299W / 300W | 57431MiB / 81920MiB | 100% Default |

| | | Disabled |

+-------------------------------+----------------------+----------------------+

...

+-------------------------------+----------------------+----------------------+

| 7 NVIDIA A800 80G... Off | 0000000000.0 Off | 0 |

| N/A 33C P0 71W / 300W | 951MiB / 81920MiB | 0% Default |

| | | Disabled |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

| 0 N/A N/A 55017 C python 57429MiB |

...

| 7 N/A N/A 55017 C python 949MiB |

+-----------------------------------------------------------------------------+

發現GPU的使用率上去了,訓練速度也提升了,但是沒有充分利用GPU資源,單卡訓練(epoch:3)大概3小時即可完成。

因此,為了進一步提升模型訓練速度,下面嘗試使用數據并行,在多卡上面進行訓練。

torchrun --nproc_per_node=8 --master_port=29005 finetune.py

--base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

--data_path '/data/nfs/guodong.li/data/alpaca_data_cleaned.json'

--output_dir '/home/guodong.li/output/lora-alpaca'

--batch_size 256

--micro_batch_size 16

--num_epochs 2

運行過程:

torchrun --nproc_per_node=8 --master_port=29005 finetune.py

> --base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

> --data_path '/data/nfs/guodong.li/data/alpaca_data_cleaned.json'

> --output_dir '/home/guodong.li/output/lora-alpaca'

> --batch_size 256

> --micro_batch_size 16

> --num_epochs 2

WARNING

*****************************************

Setting OMP_NUM_THREADS environment variable for each process to be 1 in default, to avoid your system being overloaded, please further tune the variable for optimal performance in your application as needed.

*****************************************

===================================BUG REPORT===================================

Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues

================================================================================

...

===================================BUG REPORT===================================

Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues

================================================================================

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/cuda_setup/main.py UserWarning: WARNING: The following directories listed in your path were found to be non-existent: {PosixPath('/opt/rh/devtoolset-9/root/usr/lib/dyninst'), PosixPath('/opt/rh/devtoolset-7/root/usr/lib/dyninst')}

warn(msg)

CUDA SETUP: CUDA runtime path found: /usr/local/cuda-11.7/lib64/libcudart.so

CUDA SETUP: Highest compute capability among GPUs detected: 8.0

CUDA SETUP: Detected CUDA version 117

CUDA SETUP: Loading binary /home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/libbitsandbytes_cuda117.so...

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/cuda_setup/main.py UserWarning: WARNING: The following directories listed in your path were found to be non-existent: {PosixPath('/opt/rh/devtoolset-7/root/usr/lib/dyninst'), PosixPath('/opt/rh/devtoolset-9/root/usr/lib/dyninst')}

...

Training Alpaca-LoRA model with params:

base_model: /data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b

data_path: /data/nfs/guodong.li/data/alpaca_data_cleaned.json

output_dir: /home/guodong.li/output/lora-alpaca

batch_size: 256

micro_batch_size: 16

num_epochs: 2

learning_rate: 0.0003

cutoff_len: 256

val_set_size: 2000

lora_r: 8

lora_alpha: 16

lora_dropout: 0.05

lora_target_modules: ['q_proj', 'v_proj']

train_on_inputs: True

group_by_length: False

wandb_project:

wandb_run_name:

wandb_watch:

wandb_log_model:

resume_from_checkpoint: False

prompt template: alpaca

Loading checkpoint shards: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 33/33 [00:14<00:00, 2.25it/s]

...

Loading checkpoint shards: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 33/33 [00:20<00:00, 1.64it/s]

Found cached dataset json (/home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e)

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 129.11it/s]

trainable params: 4194304 || all params: 6742609920 || trainable%: 0.06220594176090199

Loading cached split indices for dataset at /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-d8c5d7ac95d53860.arrow and /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-4a34b0c9feb19e72.arrow

Map: 4%|██████▎ | 2231/49942 [00:01<00:37, 1256.31 examples/s]Found cached dataset json (/home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e)

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 220.24it/s]

...

trainable params: 4194304 || all params: 6742609920 || trainable%: 0.06220594176090199

Loading cached split indices for dataset at /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-d8c5d7ac95d53860.arrow and /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-4a34b0c9feb19e72.arrow

Map: 2%|██▋ | 939/49942 [00:00<00:37, 1323.94 examples/s]Found cached dataset json (/home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e)

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 362.77it/s]

trainable params: 4194304 || all params: 6742609920 || trainable%: 0.06220594176090199

Loading cached split indices for dataset at /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-d8c5d7ac95d53860.arrow and /home/guodong.li/.cache/huggingface/datasets/json/default-2dab63d15cf49261/0.0.0/fe5dd6ea2639a6df622901539cb550cf8797e5a6b2dd7af1cf934bed8e233e6e/cache-4a34b0c9feb19e72.arrow

{'loss': 2.2798, 'learning_rate': 1.7999999999999997e-05, 'epoch': 0.05}

...

{'loss': 0.853, 'learning_rate': 0.0002006896551724138, 'epoch': 1.02}

{'eval_loss': 0.8590874075889587, 'eval_runtime': 10.5401, 'eval_samples_per_second': 189.752, 'eval_steps_per_second': 3.036, 'epoch': 1.02}

{'loss': 0.8656, 'learning_rate': 0.0001903448275862069, 'epoch': 1.07}

...

{'loss': 0.8462, 'learning_rate': 6.620689655172413e-05, 'epoch': 1.69}

{'loss': 0.8585, 'learning_rate': 4.137931034482758e-06, 'epoch': 1.99}

{'loss': 0.8549, 'learning_rate': 0.00011814432989690721, 'epoch': 2.05}

{'eval_loss': 0.8465630412101746, 'eval_runtime': 10.5273, 'eval_samples_per_second': 189.983, 'eval_steps_per_second': 3.04, 'epoch': 2.05}

{'loss': 0.8492, 'learning_rate': 0.00011195876288659793, 'epoch': 2.1}

...

{'loss': 0.8398, 'learning_rate': 1.2989690721649484e-05, 'epoch': 2.92}

{'loss': 0.8473, 'learning_rate': 6.804123711340206e-06, 'epoch': 2.97}

100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 585/585 [23:46<00:00, 2.38s/it]

{'train_runtime': 1426.9255, 'train_samples_per_second': 104.999, 'train_steps_per_second': 0.41, 'train_loss': 0.9613736364576552, 'epoch': 2.99}

100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 585/585 [23:46<00:00, 2.44s/it]

模型文件:

> tree /home/guodong.li/output/lora-alpaca

/home/guodong.li/output/lora-alpaca

├── adapter_config.json

├── adapter_model.bin

└── checkpoint-200

├── optimizer.pt

├── pytorch_model.bin

├── rng_state_0.pth

├── rng_state_1.pth

├── rng_state_2.pth

├── rng_state_3.pth

├── rng_state_4.pth

├── rng_state_5.pth

├── rng_state_6.pth

├── rng_state_7.pth

├── scaler.pt

├── scheduler.pt

├── trainer_state.json

└── training_args.bin

1 directory, 16 files

我們可以看到,在數據并行的情況下,如果epoch=3(本文epoch=2),訓練僅需要20分鐘左右即可完成。目前,tloen/Alpaca-LoRA-7b提供的最新“官方”的Alpaca-LoRA adapter于 3 月 26 日使用以下超參數進行訓練。

- Epochs: 10 (load from best epoch)

- Batch size: 128

- Cutoff length: 512

- Learning rate: 3e-4

- Lorar: 16

- Lora target modules: q_proj, k_proj, v_proj, o_proj

具體命令如下:

python finetune.py

--base_model='decapoda-research/llama-7b-hf'

--num_epochs=10

--cutoff_len=512

--group_by_length

--output_dir='./lora-alpaca'

--lora_target_modules='[q_proj,k_proj,v_proj,o_proj]'

--lora_r=16

--micro_batch_size=8

模型推理

運行命令如下:

python generate.py

--load_8bit

--base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

--lora_weights '/home/guodong.li/output/lora-alpaca'

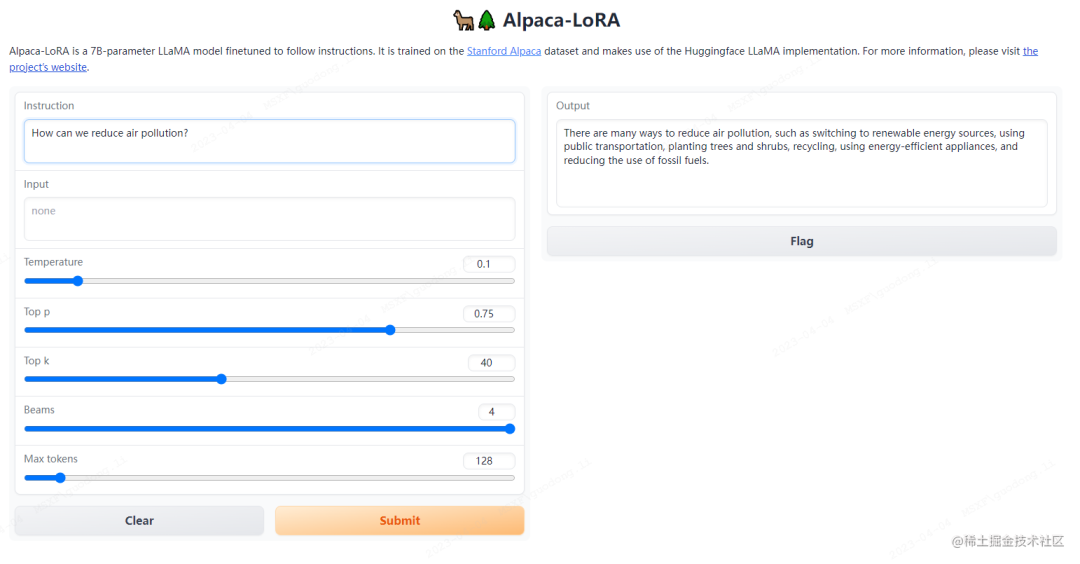

運行這個腳本會啟動一個gradio服務,你可以通過瀏覽器在網頁上進行測試。

運行過程如下所示:

python generate.py

> --load_8bit

> --base_model '/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b'

> --lora_weights '/home/guodong.li/output/lora-alpaca'

===================================BUG REPORT===================================

Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues

================================================================================

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/cuda_setup/main.py UserWarning: WARNING: The following directories listed in your path were found to be non-existent: {PosixPath('/opt/rh/devtoolset-9/root/usr/lib/dyninst'), PosixPath('/opt/rh/devtoolset-7/root/usr/lib/dyninst')}

warn(msg)

CUDA SETUP: CUDA runtime path found: /usr/local/cuda-11.7/lib64/libcudart.so

CUDA SETUP: Highest compute capability among GPUs detected: 8.0

CUDA SETUP: Detected CUDA version 117

CUDA SETUP: Loading binary /home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/libbitsandbytes_cuda117.so...

Loading checkpoint shards: 100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 33/33 [00:12<00:00, 2.68it/s]

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/gradio/inputs.py UserWarning: Usage of gradio.inputs is deprecated, and will not be supported in the future, please import your component from gradio.components

warnings.warn(

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/gradio/deprecation.py UserWarning: `optional` parameter is deprecated, and it has no effect

warnings.warn(value)

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/gradio/deprecation.py UserWarning: `numeric` parameter is deprecated, and it has no effect

warnings.warn(value)

Running on local URL: http://0.0.0.0:7860

To create a public link, set `share=True` in `launch()`.

顯存占用:

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 515.105.01 Driver Version: 515.105.01 CUDA Version: 11.7 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 NVIDIA A800 80G... Off | 0000000000.0 Off | 0 |

| N/A 50C P0 81W / 300W | 8877MiB / 81920MiB | 0% Default |

| | | Disabled |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

| 0 N/A N/A 7837 C python 8875MiB |

+-----------------------------------------------------------------------------+

打開瀏覽器輸入IP+端口進行測試。

image.png

image.png將 LoRA 權重合并回基礎模型

下面將 LoRA 權重合并回基礎模型以導出為 HuggingFace 格式和 PyTorch state_dicts。以幫助想要在 llama.cpp 或 alpaca.cpp 等項目中運行推理的用戶。

導出為 HuggingFace 格式:

修改export_hf_checkpoint.py文件:

import os

import torch

import transformers

from peft import PeftModel

from transformers import LlamaForCausalLM, LlamaTokenizer # noqa: F402

BASE_MODEL = os.environ.get("BASE_MODEL", None)

# TODO

LORA_MODEL = os.environ.get("LORA_MODEL", "tloen/alpaca-lora-7b")

HF_CHECKPOINT = os.environ.get("HF_CHECKPOINT", "./hf_ckpt")

assert (

BASE_MODEL

), "Please specify a value for BASE_MODEL environment variable, e.g. `export BASE_MODEL=decapoda-research/llama-7b-hf`" # noqa: E501

tokenizer = LlamaTokenizer.from_pretrained(BASE_MODEL)

base_model = LlamaForCausalLM.from_pretrained(

BASE_MODEL,

load_in_8bit=False,

torch_dtype=torch.float16,

device_map={"": "cpu"},

)

first_weight = base_model.model.layers[0].self_attn.q_proj.weight

first_weight_old = first_weight.clone()

lora_model = PeftModel.from_pretrained(

base_model,

# TODO

# "tloen/alpaca-lora-7b",

LORA_MODEL,

device_map={"": "cpu"},

torch_dtype=torch.float16,

)

...

# TODO

LlamaForCausalLM.save_pretrained(

base_model, HF_CHECKPOINT , state_dict=deloreanized_sd, max_shard_size="400MB"

)

運行命令:

BASE_MODEL=/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b

LORA_MODEL=/home/guodong.li/output/lora-alpaca

HF_CHECKPOINT=/home/guodong.li/output/hf_ckpt

python export_hf_checkpoint.py

運行過程:

BASE_MODEL=/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b

> LORA_MODEL=/home/guodong.li/output/lora-alpaca

> HF_CHECKPOINT=/home/guodong.li/output/hf_ckpt

> python export_hf_checkpoint.py

===================================BUG REPORT===================================

Welcome to bitsandbytes. For bug reports, please submit your error trace to: https://github.com/TimDettmers/bitsandbytes/issues

================================================================================

/home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/cuda_setup/main.py UserWarning: WARNING: The following directories listed in your path were found to be non-existent: {PosixPath('/opt/rh/devtoolset-7/root/usr/lib/dyninst'), PosixPath('/opt/rh/devtoolset-9/root/usr/lib/dyninst')}

warn(msg)

CUDA SETUP: CUDA runtime path found: /usr/local/cuda-11.7/lib64/libcudart.so

CUDA SETUP: Highest compute capability among GPUs detected: 8.0

CUDA SETUP: Detected CUDA version 117

CUDA SETUP: Loading binary /home/guodong.li/virtual-venv/alpara-lora-venv-py310-cu117/lib/python3.10/site-packages/bitsandbytes-0.37.2-py3.10.egg/bitsandbytes/libbitsandbytes_cuda117.so...

Loading checkpoint shards: 100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 33/33 [00:05<00:00, 5.99it/s]

查看模型輸出文件:

> tree /home/guodong.li/output/hf_ckpt

/home/guodong.li/output/hf_ckpt

├── config.json

├── generation_config.json

├── pytorch_model-00001-of-00039.bin

├── pytorch_model-00002-of-00039.bin

...

├── pytorch_model-00038-of-00039.bin

├── pytorch_model-00039-of-00039.bin

└── pytorch_model.bin.index.json

0 directories, 42 files

導出為PyTorch state_dicts:

修改export_state_dict_checkpoint.py文件:

import json

import os

import torch

import transformers

from peft import PeftModel

from transformers import LlamaForCausalLM, LlamaTokenizer # noqa: E402

BASE_MODEL = os.environ.get("BASE_MODEL", None)

LORA_MODEL = os.environ.get("LORA_MODEL", "tloen/alpaca-lora-7b")

PTH_CHECKPOINT_PREFIX = os.environ.get("PTH_CHECKPOINT_PREFIX", "./ckpt")

assert (

BASE_MODEL

), "Please specify a value for BASE_MODEL environment variable, e.g. `export BASE_MODEL=decapoda-research/llama-7b-hf`" # noqa: E501

tokenizer = LlamaTokenizer.from_pretrained(BASE_MODEL)

base_model = LlamaForCausalLM.from_pretrained(

BASE_MODEL,

load_in_8bit=False,

torch_dtype=torch.float16,

device_map={"": "cpu"},

)

lora_model = PeftModel.from_pretrained(

base_model,

# todo

#"tloen/alpaca-lora-7b",

LORA_MODEL,

device_map={"": "cpu"},

torch_dtype=torch.float16,

)

...

os.makedirs(PTH_CHECKPOINT_PREFIX, exist_ok=True)

torch.save(new_state_dict, PTH_CHECKPOINT_PREFIX+"/consolidated.00.pth")

with open(PTH_CHECKPOINT_PREFIX+"/params.json", "w") as f:

json.dump(params, f)

運行命令:

BASE_MODEL=/data/nfs/guodong.li/pretrain/hf-llama-model/llama-7b

LORA_MODEL=/home/guodong.li/output/lora-alpaca

PTH_CHECKPOINT_PREFIX=/home/guodong.li/output/ckpt

python export_state_dict_checkpoint.py

查看模型輸出文件:

tree /home/guodong.li/output/ckpt

/home/guodong.li/output/ckpt

├── consolidated.00.pth

└── params.json

當然,你還可以封裝為Docker鏡像來對訓練和推理環境進行隔離。

封裝為Docker鏡像并進行推理

- 構建Docker鏡像:

docker build -t alpaca-lora .

-

運行Docker容器進行推理 (您還可以使用

finetune.py及其上面提供的所有超參數進行訓練):

docker run --gpus=all --shm-size 64g -p 7860:7860 -v ${HOME}/.cache:/root/.cache --rm alpaca-lora generate.py

--load_8bit

--base_model 'decapoda-research/llama-7b-hf'

--lora_weights 'tloen/alpaca-lora-7b'

-

打開瀏覽器,輸入URL:

https://localhost:7860進行測試。

結語

從上面可以看到,在一臺8卡的A800服務器上面,基于Alpaca-Lora針對alpaca_data_cleaned.json指令數據大概20分鐘左右即可完成參數高效微調,相對于斯坦福羊駝訓練速度顯著提升。

參考文檔:

- LLaMA

- Stanford Alpaca:斯坦福-羊駝

- Alpaca-LoRA

審核編輯 :李倩

-

服務器

+關注

關注

12文章

9138瀏覽量

85369 -

模型

+關注

關注

1文章

3233瀏覽量

48820 -

語言模型

+關注

關注

0文章

522瀏覽量

10271

原文標題:足夠驚艷,使用Alpaca-Lora基于LLaMA(7B)二十分鐘完成微調,效果比肩斯坦福羊駝

文章出處:【微信號:zenRRan,微信公眾號:深度學習自然語言處理】歡迎添加關注!文章轉載請注明出處。

發布評論請先 登錄

相關推薦

有哪些省內存的大語言模型訓練/微調/推理方法?

使用LoRA和Hugging Face高效訓練大語言模型

如何訓練自己的ChatGPT

關于LLM+LoRa微調加速技術原理

調教LLaMA類模型沒那么難,LoRA將模型微調縮減到幾小時

iPhone都能微調大模型了嘛

大模型為什么要微調?大模型微調的原理

chatglm2-6b在P40上做LORA微調

一種信息引導的量化后LLM微調新算法IR-QLoRA

使用Alpaca-Lora進行參數高效模型微調

使用Alpaca-Lora進行參數高效模型微調

評論