最近幾個月,隨著ChatGPT的現(xiàn)象級表現(xiàn),大模型如雨后春筍般涌現(xiàn)。而模型推理是抽象的算法模型觸達(dá)具體的實(shí)際業(yè)務(wù)的最后一公里。

但是在這個環(huán)節(jié)中,仍然還有很多已經(jīng)是大家共識的痛點(diǎn)和訴求,比如:

任何線上產(chǎn)品的用戶體驗(yàn)都與服務(wù)的響應(yīng)時長成反比,復(fù)雜的模型如何極致地壓縮請求時延?

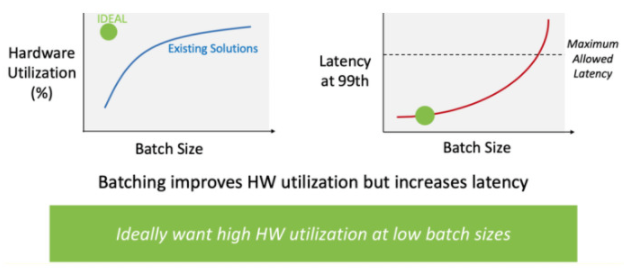

模型推理通常是資源常駐型服務(wù),如何通過提升服務(wù)單機(jī)性能從而增加QPS,同時大幅降低資源成本?

端-邊-云是現(xiàn)在模型服務(wù)發(fā)展的必然趨勢,如何讓離線訓(xùn)練的模型“瘦身塑形”從而在更多設(shè)備上快速部署使用?

因此,模型推理的加速優(yōu)化成為了AI界的重要研究領(lǐng)域。

本文給大家分享大模型推理加速引擎FasterTransformer的基本使用。

image.png

image.png

FasterTransformer簡介

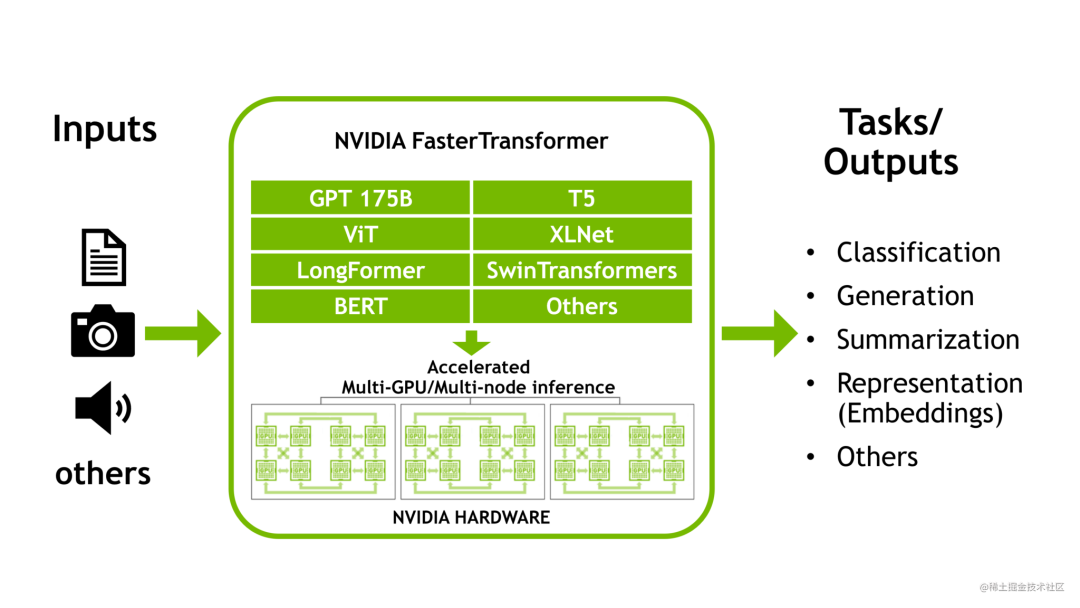

NVIDIA FasterTransformer (FT)是一個用于實(shí)現(xiàn)基于Transformer的神經(jīng)網(wǎng)絡(luò)推理的加速引擎。它包含Transformer塊的高度優(yōu)化版本的實(shí)現(xiàn),其中包含編碼器和解碼器部分。使用此模塊,您可以運(yùn)行編碼器-解碼器架構(gòu)模型(如:T5)、僅編碼器架構(gòu)模型(如:BERT)和僅解碼器架構(gòu)模型(如:GPT)的推理。

FT框架是用C++/CUDA編寫的,依賴于高度優(yōu)化的 cuBLAS、cuBLASLt 和 cuSPARSELt 庫,這使您可以在 GPU 上進(jìn)行快速的 Transformer 推理。

與NVIDIA TensorRT等其他編譯器相比,F(xiàn)T 的最大特點(diǎn)是它支持以分布式方式進(jìn)行 Transformer 大模型推理。

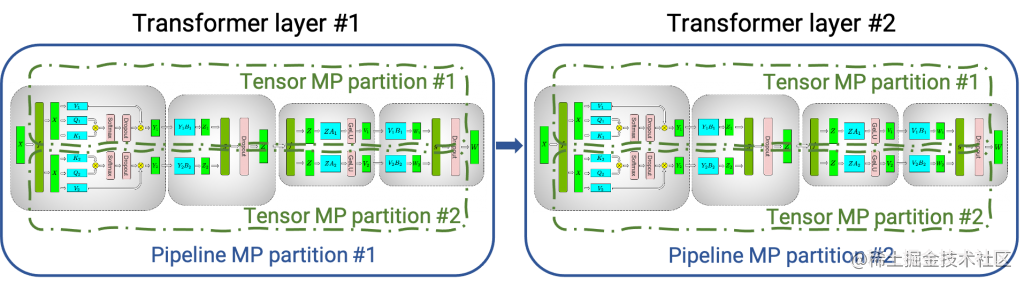

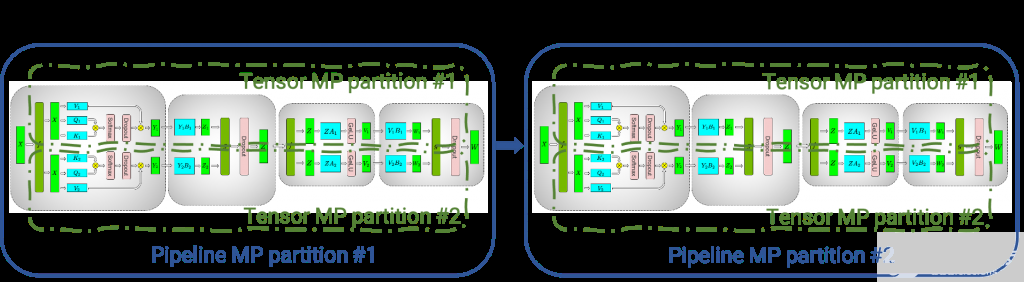

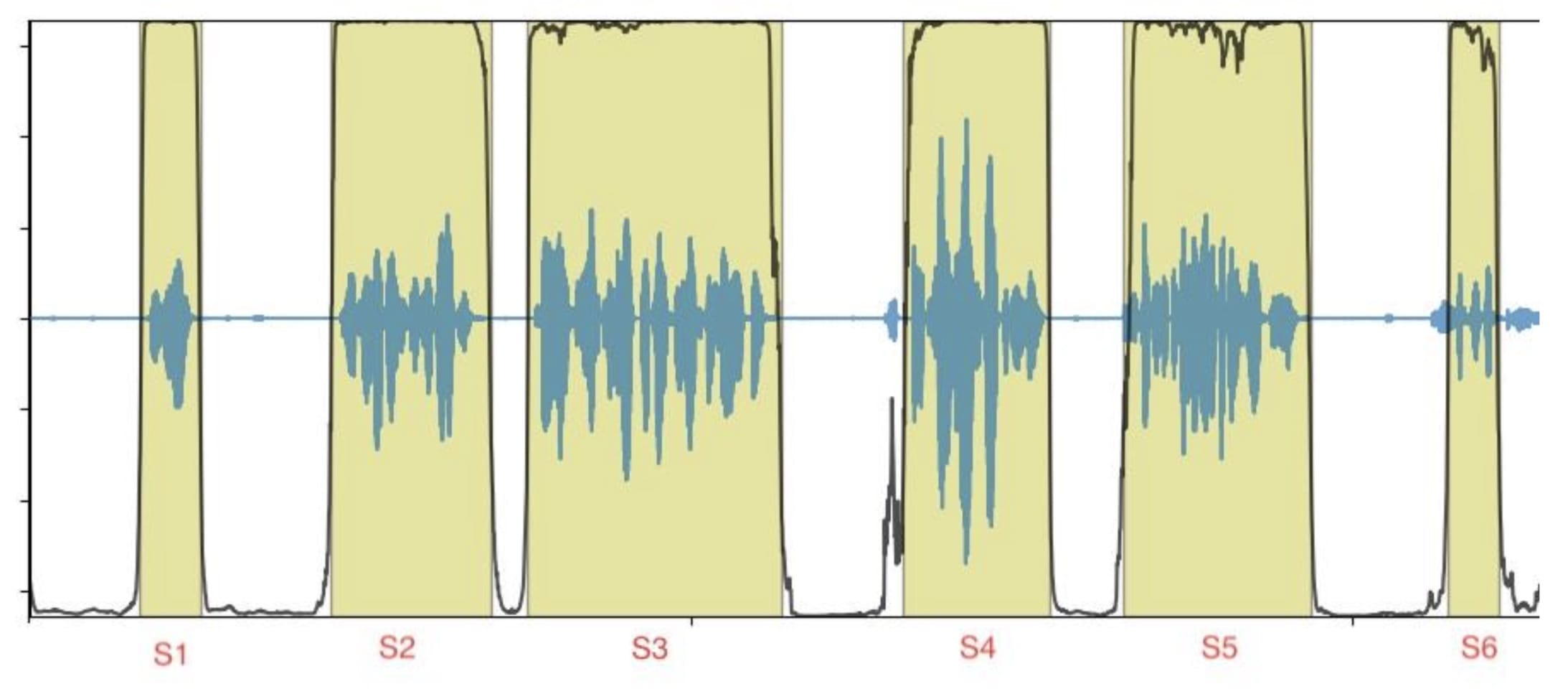

下圖顯示了如何使用張量并行 (TP) 和流水線并行 (PP) 技術(shù)將基于Transformer架構(gòu)的神經(jīng)網(wǎng)絡(luò)拆分到多個 GPU 和節(jié)點(diǎn)上。

當(dāng)每個張量被分成多個塊時,就會發(fā)生張量并行,并且張量的每個塊都可以放置在單獨(dú)的 GPU 上。在計(jì)算過程中,每個塊在不同的 GPU 上單獨(dú)并行處理;最后,可以通過組合來自多個 GPU 的結(jié)果來計(jì)算最終張量。

當(dāng)模型被深度拆分,并將不同的完整層放置到不同的 GPU/節(jié)點(diǎn)上時,就會發(fā)生流水線并行。

在底層,節(jié)點(diǎn)間或節(jié)點(diǎn)內(nèi)通信依賴于 MPI 、 NVIDIA NCCL、Gloo等。因此,使用FasterTransformer,您可以在多個 GPU 上以張量并行運(yùn)行大型Transformer,以減少計(jì)算延遲。同時,TP 和 PP 可以結(jié)合在一起,在多 GPU 節(jié)點(diǎn)環(huán)境中運(yùn)行具有數(shù)十億、數(shù)萬億個參數(shù)的大型 Transformer 模型。

除了使用 C ++ 作為后端部署,F(xiàn)asterTransformer 還集成了 TensorFlow(使用 TensorFlow op)、PyTorch (使用 Pytorch op)和 Triton作為后端框架進(jìn)行部署。當(dāng)前,TensorFlow op 僅支持單 GPU,而 PyTorch op 和 Triton 后端都支持多 GPU 和多節(jié)點(diǎn)。

目前,F(xiàn)T 支持了 Megatron-LM GPT-3、GPT-J、BERT、ViT、Swin Transformer、Longformer、T5 和 XLNet 等模型。您可以在 GitHub 上的 FasterTransformer庫中查看最新的支持矩陣。

FT 適用于計(jì)算能力 >= 7.0 的 GPU,例如 V100、A10、A100 等。

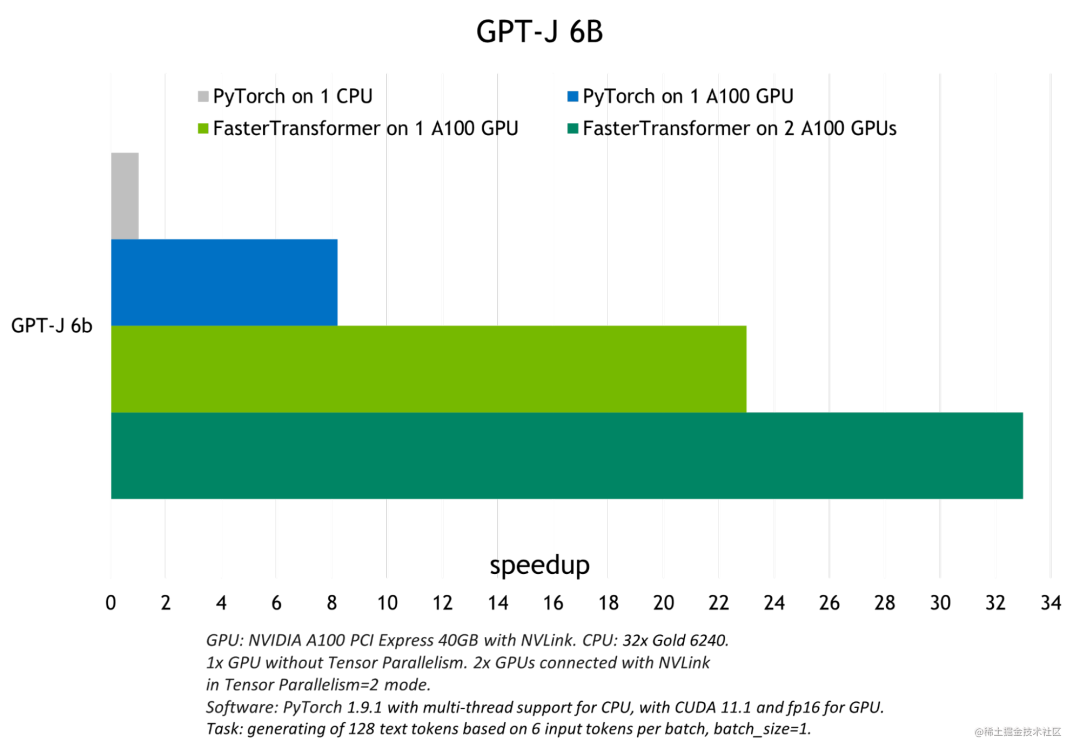

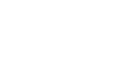

下圖展示了 GPT-J 6B 參數(shù)的模型推斷加速比較:

image.png

image.png

FasterTransformer 中的優(yōu)化技術(shù)

與深度學(xué)習(xí)訓(xùn)練的通用框架相比,F(xiàn)T 使您能夠獲得更快的推理流水線以及基于 Transformer 的神經(jīng)網(wǎng)絡(luò)具有更低的延遲和更高的吞吐量。FT 對 GPT-3 和其他大型Transformer模型進(jìn)行的一些優(yōu)化技術(shù)包括:

層融合(Layer fusion)

這是預(yù)處理階段的一組技術(shù),將多層神經(jīng)網(wǎng)絡(luò)組合成一個單一的神經(jīng)網(wǎng)絡(luò),將使用一個單一的核(kernel)進(jìn)行計(jì)算。這種技術(shù)減少了數(shù)據(jù)傳輸并增加了數(shù)學(xué)密度,從而加速了推理階段的計(jì)算。例如, multi-head attention 塊中的所有操作都可以合并到一個核(kernel)中。

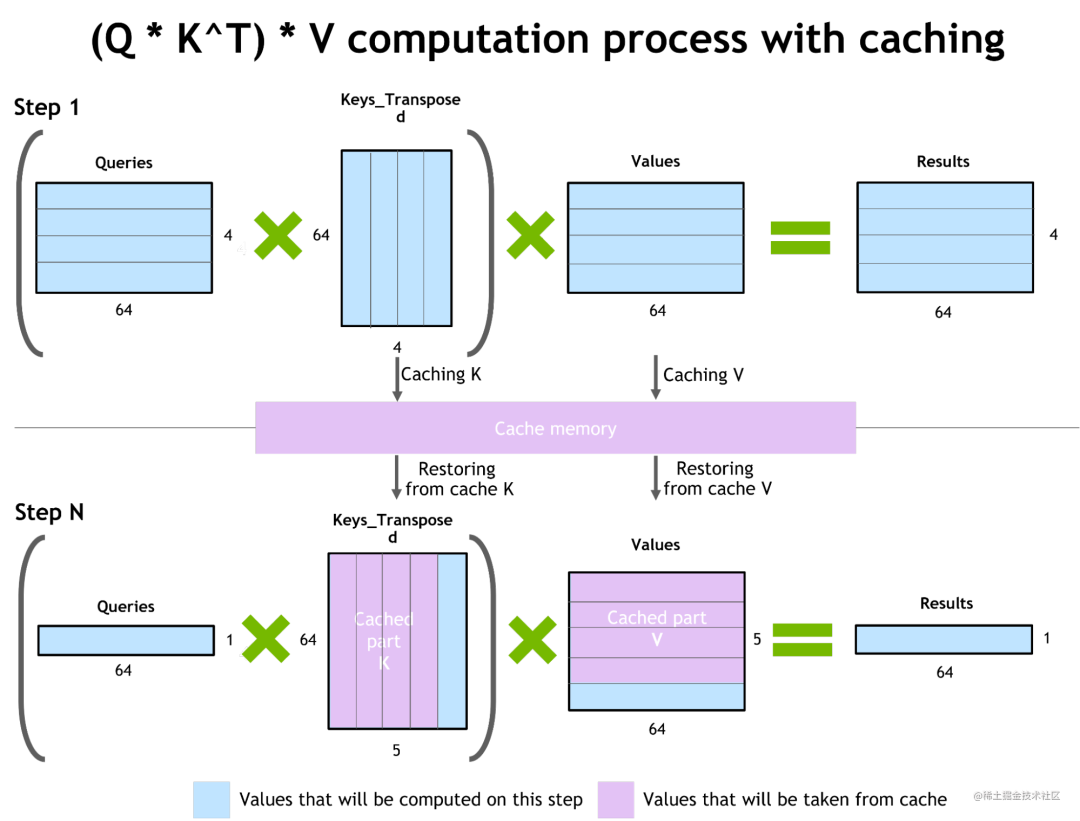

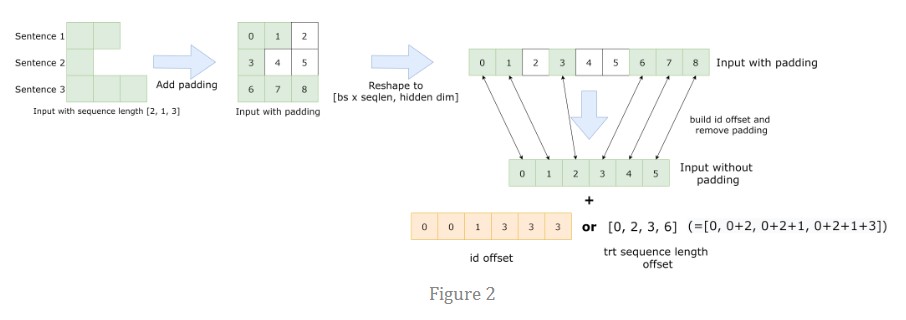

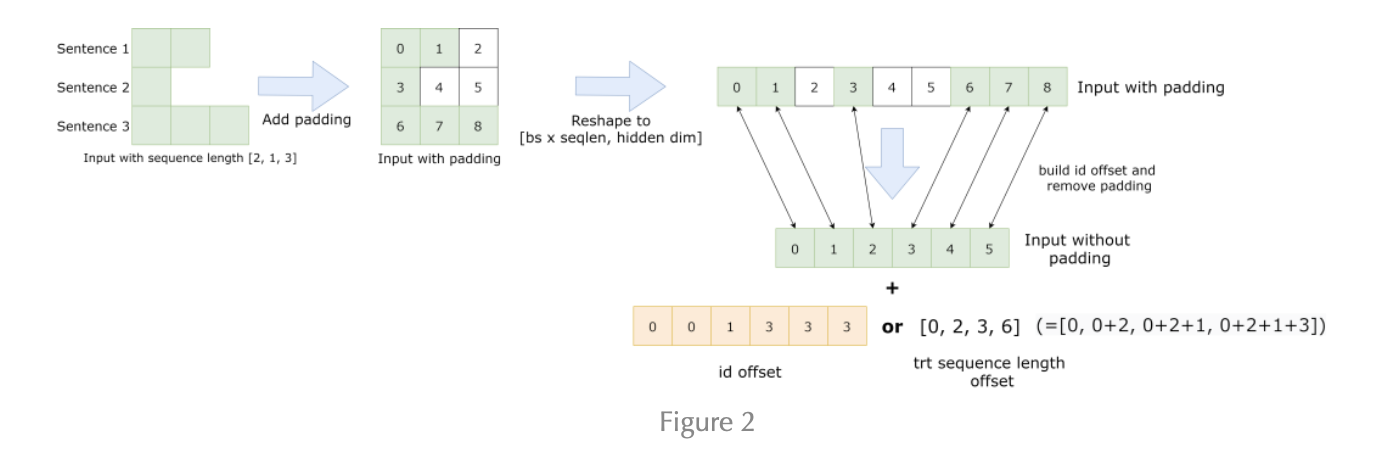

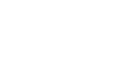

自回歸模型的推理優(yōu)化(激活緩存)

為了防止通過Transformer重新計(jì)算每個新 token 生成器的先前鍵和值,F(xiàn)T 分配了一個緩沖區(qū)來在每一步存儲它們。

雖然需要一些額外的內(nèi)存使用,但 FT 可以節(jié)省重新計(jì)算的成本。該過程如下圖所示。相同的緩存機(jī)制用于 NN 的多個部分。

image.png

image.png

內(nèi)存優(yōu)化

與 BERT 等傳統(tǒng)模型不同,大型 Transformer 模型具有多達(dá)數(shù)萬億個參數(shù),占用數(shù)百 GB 存儲空間。即使我們以半精度存儲模型,GPT-3 175b 也需要 350 GB。因此有必要減少其他部分的內(nèi)存使用。

例如,在 FasterTransformer 中,我們在不同的解碼器層重用了激活/輸出的內(nèi)存緩沖(buffer)。由于 GPT-3 中的層數(shù)為 96,因此我們只需要 1/96 的內(nèi)存量用于激活。

使用 MPI 和 NCCL 實(shí)現(xiàn)節(jié)點(diǎn)間/節(jié)點(diǎn)內(nèi)通信并支持模型并行

FasterTransormer 同時提供張量并行和流水線并行。對于張量并行,F(xiàn)asterTransformer 遵循了 Megatron 的思想。對于自注意力塊和前饋網(wǎng)絡(luò)塊,F(xiàn)T 按行拆分第一個矩陣的權(quán)重,并按列拆分第二個矩陣的權(quán)重。通過優(yōu)化,F(xiàn)T 可以將每個 Transformer 塊的歸約(reduction)操作減少到兩次。

對于流水線并行,F(xiàn)asterTransformer 將整批請求拆分為多個微批,隱藏了通信的空泡(bubble)。FasterTransformer 會針對不同情況自動調(diào)整微批量大小。

MatMul 核自動調(diào)整(GEMM 自動調(diào)整)

矩陣乘法是基于Transformer的神經(jīng)網(wǎng)絡(luò)中最主要和繁重的操作。FT 使用來自 CuBLAS 和 CuTLASS 庫的功能來執(zhí)行這些類型的操作。重要的是要知道 MatMul 操作可以在“硬件”級別使用不同的底層(low-level)算法以數(shù)十種不同的方式執(zhí)行。

GemmBatchedEx函數(shù)實(shí)現(xiàn)了 MatMul 操作,并以cublasGemmAlgo_t作為輸入?yún)?shù)。使用此參數(shù),您可以選擇不同的底層算法進(jìn)行操作。

FasterTransformer 庫使用此參數(shù)對所有底層算法進(jìn)行實(shí)時基準(zhǔn)測試,并為模型的參數(shù)和您的輸入數(shù)據(jù)(注意層的大小、注意頭的數(shù)量、隱藏層的大小)選擇最佳的一個。此外,F(xiàn)T 對網(wǎng)絡(luò)的某些部分使用硬件加速的底層函數(shù),例如:__expf、__shfl_xor_sync。

低精度推理

FT 的核(kernels)支持使用 fp16 和 int8 等低精度輸入數(shù)據(jù)進(jìn)行推理。由于較少的數(shù)據(jù)傳輸量和所需的內(nèi)存,這兩種機(jī)制都會加速。同時,int8 和 fp16 計(jì)算可以在特殊硬件上執(zhí)行,例如:Tensor Core(適用于從 Volta 開始的所有 GPU 架構(gòu))和即將推出的 Hopper GPU 中的Transformer引擎。

除此之外還有快速的 C++ BeamSearch 實(shí)現(xiàn)、當(dāng)模型的權(quán)重部分分配到八個 GPU 之間時,針對 TensorParallelism 8 模式優(yōu)化的 all-reduce。

上面簡述了FasterTransformer,下面將演示針對 Bloom 模型以 PyTorch 作為后端使用FasterTransformer。

FasterTransformer GPT 簡介

下文將會使用BLOOM模型進(jìn)行演示,而 BLOOM 是一個利用 ALiBi(用于添加位置嵌入) 的 GPT 模型的變體,因此,本文先簡要介紹一下 GPT 的相關(guān)工作。GPT是僅解碼器架構(gòu)模型的一種變體,沒有編碼器模塊,使用GeLU作為激活。

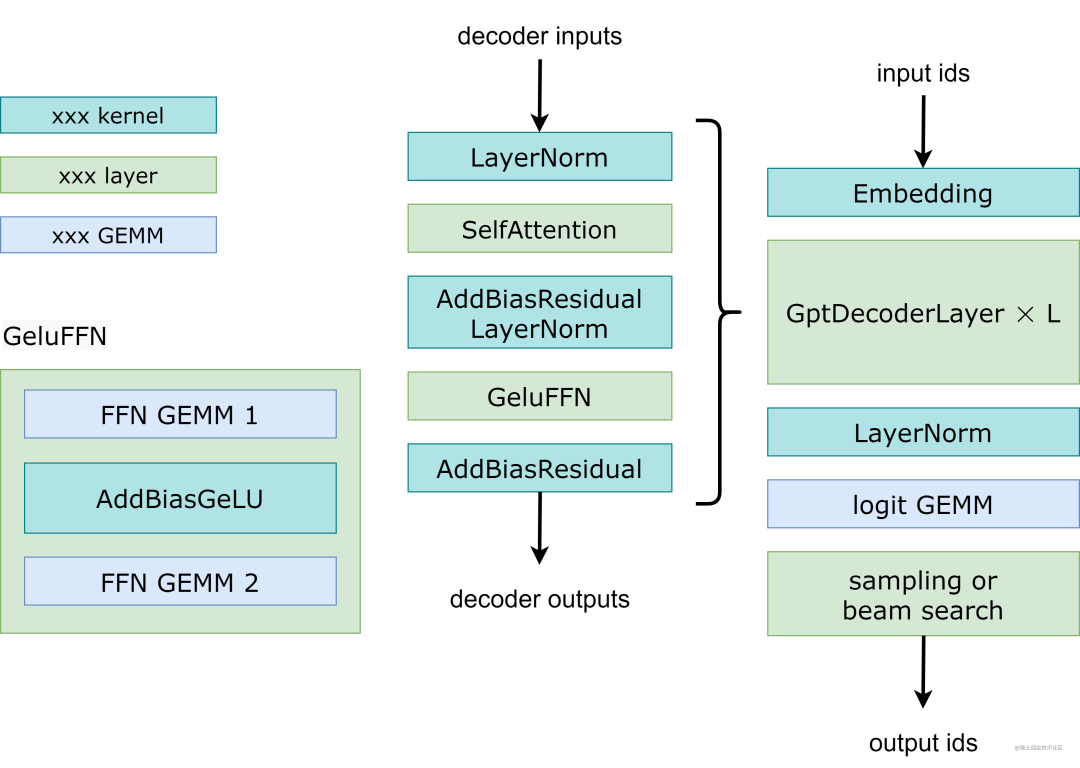

FasterTransformer GPT 工作流程

下圖展示了 FasterTransformer GPT 的工作流程。與 BERT(僅編碼器結(jié)構(gòu)) 和編碼器-解碼器結(jié)構(gòu)不同,GPT 接收一些輸入 id 作為上下文,并生成相應(yīng)的輸出 id 作為響應(yīng)。在此工作流程中,主要瓶頸是 GptDecoderLayer(transformer塊),因?yàn)楫?dāng)我們增加層數(shù)時,時間會線性增加。在 GPT-3 中,GptDecoderLayer 占用了大約 95% 的總時間。

image.png

image.png

FasterTransformer 將整個工作流程分成兩部分。

第一部分是“計(jì)算上下文(輸入 ids)的 k/v 緩存”。

第二部分是“自回歸生成輸出 ids”。

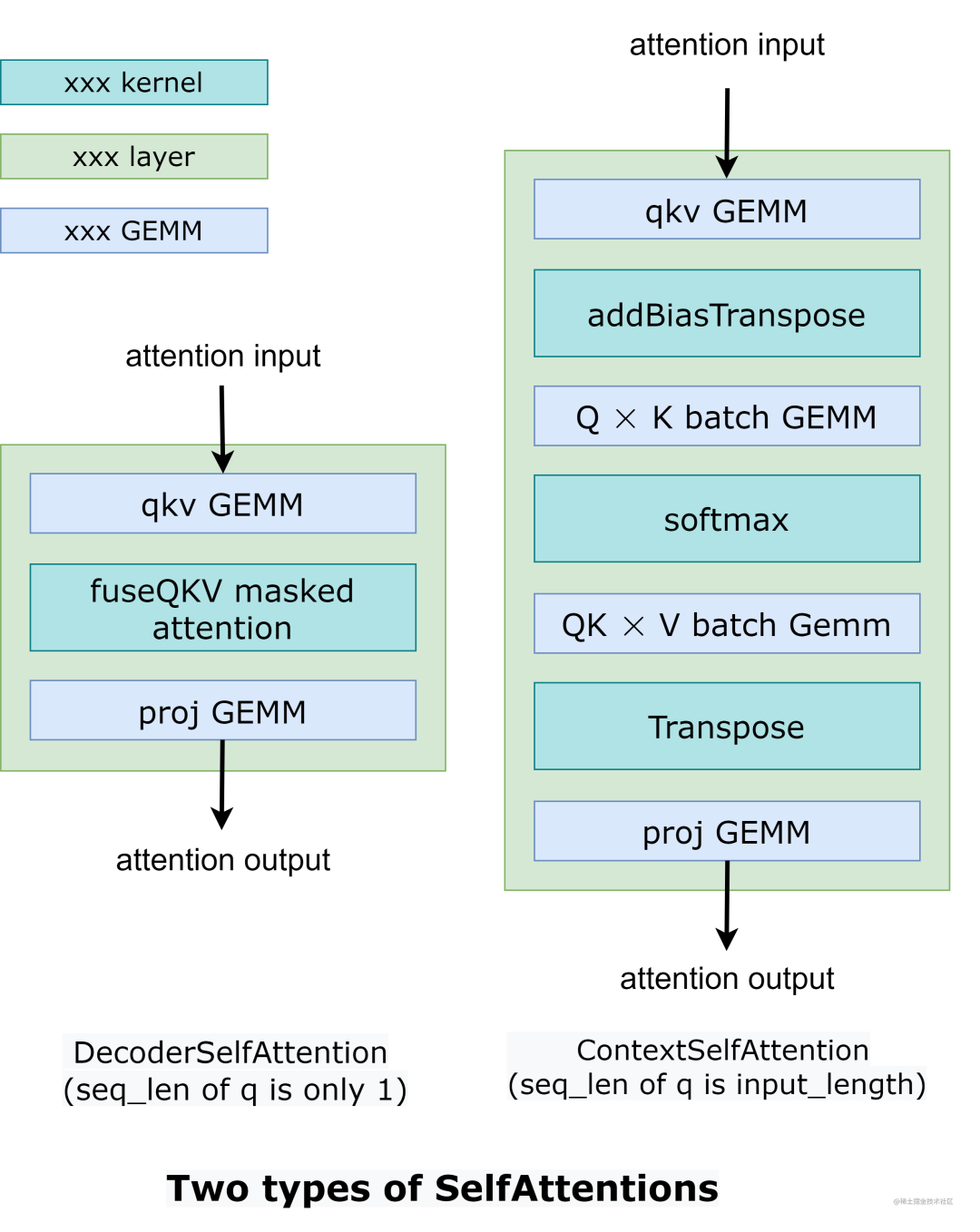

這兩部分的操作類似,只是SelfAttention中張量的形狀不同。因此,我們使用 2 種不同的實(shí)現(xiàn)來處理兩種不同的情況,如下圖所示。

image.png

image.png

在 DecoderSelfAttention 中,查詢的序列長度始終為 1,因此我們使用自定義的 fused masked multi-head attention kernel 來處理。另一方面,ContextSelfAttention 中查詢的序列長度是最大輸入長度,因此我們使用 cuBLAS 來利用tensor core。

以下的示例演示了如何運(yùn)行多 GPU 和多節(jié)點(diǎn)的 GPT 模型。

examples/cpp/multi_gpu_gpt_example.cc:它使用MPI來組織所有的GPU。

examples/cpp/multi_gpu_gpt_triton_example.cc:它在節(jié)點(diǎn)內(nèi)使用線程,在節(jié)點(diǎn)間使用 MPI。此示例還演示了如何使用基于 FasterTransformer 的 Triton 后端 API 來運(yùn)行 GPT 模型。

examples/pytorch/gpt/multi_gpu_gpt_example.py:這個例子和examples/cpp/multi_gpu_gpt_example.cc類似,但是通過PyTorch OP封裝了FasterTransformer的實(shí)例。

總之,運(yùn)行 GPT 模型的工作流程是:

通過 MPI 或線程初始化 NCCL 通信并設(shè)置張量并行和流水線并行的ranks

按張量并行、流水線并行和其他模型超參數(shù)的ranks加載權(quán)重。

通過張量并行、流水線并行和其他模型超參數(shù)的ranks創(chuàng)建ParalelGpt實(shí)例。

接收來自客戶端的請求并將請求轉(zhuǎn)換為 ParallelGpt 的輸入張量格式。

運(yùn)行forward

將 ParallelGpt 的輸出張量轉(zhuǎn)換為客戶端的響應(yīng)并返回響應(yīng)。

在C++示例代碼中,我們跳過第4步和第6步,通過examples/cpp/multi_gpu_gpt/start_ids.csv加載該請求。在 PyTorch 示例代碼中,該請求來自 PyTorch 端。在 Triton 示例代碼中,我們有從步驟 1 到步驟 6 的完整示例。

源代碼放在 src/fastertransformer/models/multi_gpu_gpt/ParallelGpt.cc 中。其中,GPT的構(gòu)造函數(shù)參數(shù)包括head_num、num_layer、tensor_para、pipeline_para等,GPT的輸入?yún)?shù)包括input_ids、input_lengths、output_seq_len等;GPT的輸出參數(shù)包括output_ids(包含 input_ids 和生成的 id)、sequence_length、output_log_probs、cum_log_probs、context_embeddings。

FasterTransformer GPT 優(yōu)化

核優(yōu)化:很多核都是基于已經(jīng)高度優(yōu)化的解碼器和解碼碼模塊的核。為了防止重新計(jì)算以前的鍵和值,我們將在每一步分配一個緩沖區(qū)來存儲它們。雖然它需要一些額外的內(nèi)存使用,但我們可以節(jié)省重新計(jì)算的成本。

內(nèi)存優(yōu)化:與 BERT 等傳統(tǒng)模型不同,GPT-3 有 1750 億個參數(shù),即使我們以半精度存儲模型也需要 350 GB。因此,我們必須減少其他部分的內(nèi)存使用。在 FasterTransformer 中,我們將重用不同解碼器層的內(nèi)存緩沖。由于 GPT-3 的層數(shù)是 96,我們只需要 1/96 的內(nèi)存。

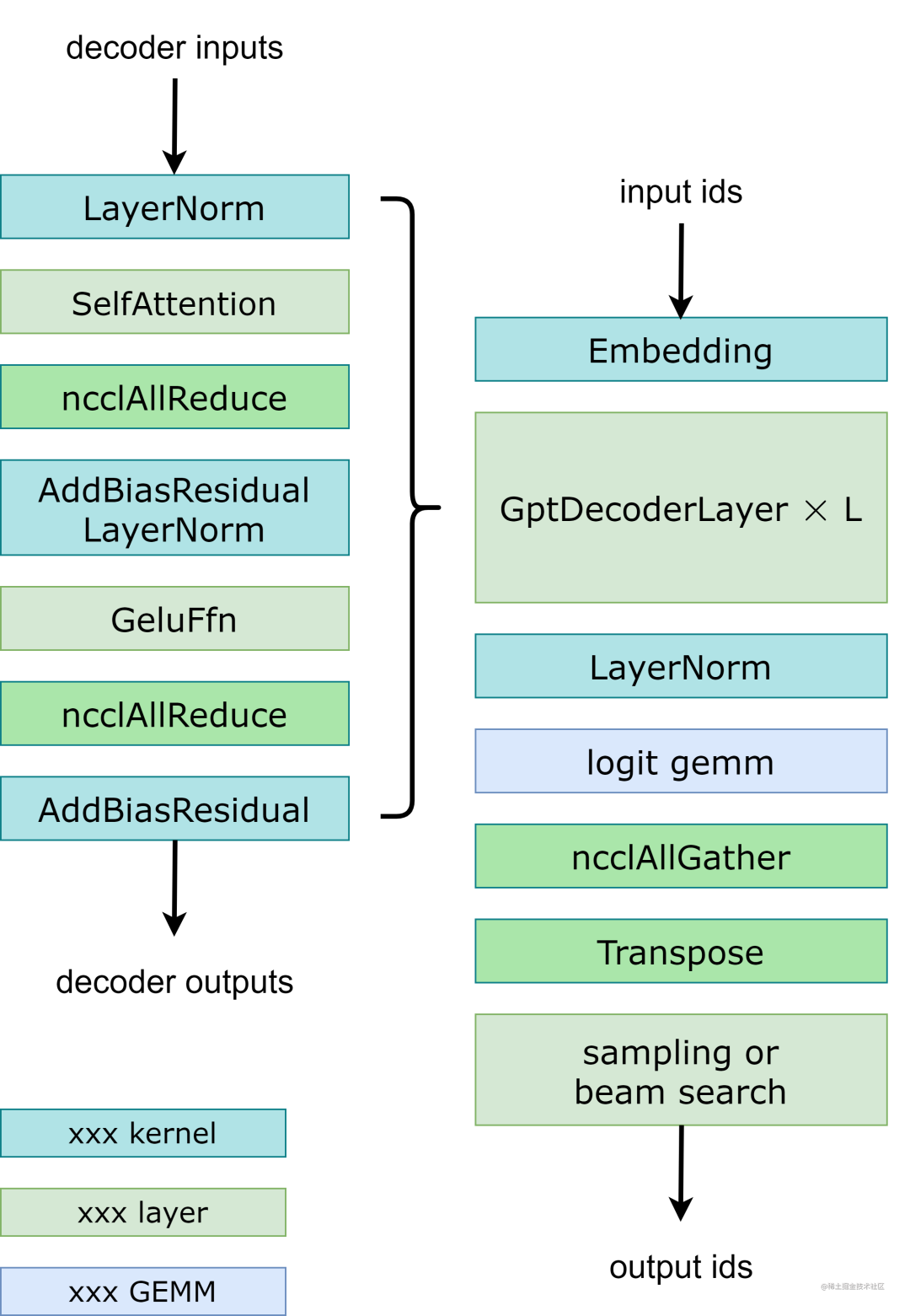

模型并行:在GPT模型中,F(xiàn)asterTransormer同時提供張量并行和流水線并行。對于張量并行,F(xiàn)asterTransformer 遵循了 Megatron 的思想。對于自注意力塊和前饋網(wǎng)絡(luò)塊,我們按行拆分第一個矩陣乘法的權(quán)重,按列拆分第二個矩陣乘法的權(quán)重。通過優(yōu)化,我們可以將每個transformer block的歸約操作減少到 2 次,工作流程如下圖所示。

對于流水線并行,F(xiàn)asterTransformer 將整批請求拆分為多個微批并隱藏通信空泡。FasterTransformer 會針對不同情況自動調(diào)整微批量大小。用戶可以通過修改 gpt_config.ini 文件來調(diào)整模型并行度。我們建議在節(jié)點(diǎn)內(nèi)使用張量并行,在節(jié)點(diǎn)間使用流水線并行,因?yàn)椋瑥埩坎⑿行枰嗟?NCCL 通信。

多框架:FasterTransformer除了c上的源代碼,還提供了TensorFlow op、PyTorch op和Triton backend。目前TensorFlow op只支持單GPU,而PyTorch op和Triton backend支持多GPU和多節(jié)點(diǎn)。FasterTransformer 還提供了一個工具,可以將 Megatron 的模型拆分并轉(zhuǎn)換為FasterTransformer二進(jìn)制文件,以便 FasterTransformer 可以直接加載二進(jìn)制文件,從而避免為模型并行而進(jìn)行的額外拆分模型工作。

FasterTransformer GPT 推理選項(xiàng)

FasterTransformer GPT 還提供環(huán)境變量以針對特定用途進(jìn)行調(diào)整。

| 名稱 | 描述 | 默認(rèn)值 | 可接受的值 |

|---|---|---|---|

| FMHA_ENABLE | 啟用融合多頭注意力核 (fp16 accumulation) | disabled | ON= enable fmha, otherwise disabled |

| CONTEXT_ATTENTION_BMM1_HALF_ACCUM | 對 qk gemm 使用 fp16 累加,并且只對未融合的多頭注意力核產(chǎn)生影響 | fp32 accumulation | ON= fp32 accumulation, otherwise fp16 accumulation |

環(huán)境搭建

基礎(chǔ)環(huán)境配置

首先確保您具有以下組件:

NVIDIA Docker 和 NGC 容器

NVIDIA Pascal/Volta/Turing/Ampere 系列的 GPU

基礎(chǔ)組件版本要求:

CMake: 3.13及以上版本

CUDA: 11.0及以上版本

NCCL: 2.10及以上版本

Python: 3.8.13

PyTorch: 1.13.0

這些組件在 Nvidia 官方提供的 TensorFlow/PyTorch Docker 鏡像中很容易獲得。

構(gòu)建FasterTransformer

推薦使用Nvidia官方提供的鏡像,如:nvcr.io/nvidia/tensorflow:22.09-tf1-py3 、 nvcr.io/nvidia/pytorch:22.09-py3等,當(dāng)然也可以使用Pytorch官方提供的鏡像。

首先,拉取相應(yīng)版本的PyTorch鏡像。

docker pull nvcr.io/nvidia/pytorch:22.09-py3

鏡像下載完成之后,創(chuàng)建容器,以便后續(xù)進(jìn)行編譯和構(gòu)建FasterTransformer。

nvidia-docker run -dti --name bloom_faster_transformer --restart=always --gpus all --network=host --shm-size 5g -v /home/gdong/workspace/code:/workspace/code -v /home/gdong/workspace/data:/workspace/data -v /home/gdong/workspace/model:/workspace/model -v /home/gdong/workspace/output:/workspace/output -w /workspace nvcr.io/nvidia/pytorch:22.09-py3 bash

進(jìn)入容器。

docker exec -it bloom_faster_transformer bash

下載FasterTransformer代碼。

cd code git clone https://github.com/NVIDIA/FasterTransformer.git cd FasterTransformer/ git submodule init && git submodule update

進(jìn)入build構(gòu)建FasterTransformer。

mkdir -p build cd build

然后,執(zhí)行cmake PATH命令生成 Makefile 文件。

cmake -DSM=80 -DCMAKE_BUILD_TYPE=Release -DBUILD_PYT=ON -DBUILD_MULTI_GPU=ON ..

注意:

第一點(diǎn):腳本中-DMS=xx的xx表示GPU的計(jì)算能力。下表顯示了常見GPU的計(jì)算能力。

| GPU | 計(jì)算能力 |

|---|---|

| P40 | 60 |

| P4 | 61 |

| V100 | 70 |

| T4 | 75 |

| A100 | 80 |

| A30 | 80 |

| A10 | 86 |

默認(rèn)情況下,-DSM 設(shè)置為 70、75、80 和 86。當(dāng)用戶設(shè)置更多類型的 -DSM 時,需要更長的編譯時間。因此,我們建議只為您使用的設(shè)備設(shè)置 -DSM。

第二點(diǎn):本文使用Pytorch作為后端,因此,腳本中添加了-DBUILD_PYT=ON配置項(xiàng)。這將構(gòu)建 TorchScript 自定義類。因此,請確保 PyTorch 版本大于 1.5.0。

運(yùn)行過程:

-- The CXX compiler identification is GNU 9.4.0 -- The CUDA compiler identification is NVIDIA 11.8.89 -- Detecting CXX compiler ABI info -- Detecting CXX compiler ABI info - done -- Check for working CXX compiler: /usr/bin/c++ - skipped -- Detecting CXX compile features -- Detecting CXX compile features - done -- Detecting CUDA compiler ABI info -- Detecting CUDA compiler ABI info - done -- Check for working CUDA compiler: /usr/local/cuda/bin/nvcc - skipped -- Detecting CUDA compile features -- Detecting CUDA compile features - done -- Looking for C++ include pthread.h -- Looking for C++ include pthread.h - found -- Performing Test CMAKE_HAVE_LIBC_PTHREAD -- Performing Test CMAKE_HAVE_LIBC_PTHREAD - Failed -- Looking for pthread_create in pthreads -- Looking for pthread_create in pthreads - not found -- Looking for pthread_create in pthread -- Looking for pthread_create in pthread - found -- Found Threads: TRUE -- Found CUDA: /usr/local/cuda (found suitable version "11.8", minimum required is "10.2") CUDA_VERSION 11.8 is greater or equal than 11.0, enable -DENABLE_BF16 flag -- Found CUDNN: /usr/lib/x86_64-linux-gnu/libcudnn.so -- Add DBUILD_CUTLASS_MOE, requires CUTLASS. Increases compilation time -- Add DBUILD_CUTLASS_MIXED_GEMM, requires CUTLASS. Increases compilation time -- Running submodule update to fetch cutlass -- Add DBUILD_MULTI_GPU, requires MPI and NCCL -- Found MPI_CXX: /opt/hpcx/ompi/lib/libmpi.so (found version "3.1") -- Found MPI: TRUE (found version "3.1") -- Found NCCL: /usr/include -- Determining NCCL version from /usr/include/nccl.h... -- Looking for NCCL_VERSION_CODE -- Looking for NCCL_VERSION_CODE - not found -- Found NCCL (include: /usr/include, library: /usr/lib/x86_64-linux-gnu/libnccl.so.2.15.1) -- NVTX is enabled. -- Assign GPU architecture (sm=80) -- Use WMMA CMAKE_CUDA_FLAGS_RELEASE: -O3 -DNDEBUG -Xcompiler -O3 -DCUDA_PTX_FP8_F2FP_ENABLED --use_fast_math -- COMMON_HEADER_DIRS: /workspace/code/FasterTransformer;/usr/local/cuda/include;/workspace/code/FasterTransformer/3rdparty/cutlass/include;/workspace/code/FasterTransformer/src/fastertransformer/cutlass_extensions/include;/workspace/code/FasterTransformer/3rdparty/trt_fp8_fmha/src;/workspace/code/FasterTransformer/3rdparty/trt_fp8_fmha/generated -- Found CUDA: /usr/local/cuda (found version "11.8") -- Caffe2: CUDA detected: 11.8 -- Caffe2: CUDA nvcc is: /usr/local/cuda/bin/nvcc -- Caffe2: CUDA toolkit directory: /usr/local/cuda -- Caffe2: Header version is: 11.8 -- Found cuDNN: v8.6.0 (include: /usr/include, library: /usr/lib/x86_64-linux-gnu/libcudnn.so) -- /usr/local/cuda/lib64/libnvrtc.so shorthash is 672ee683 -- Added CUDA NVCC flags for: -gencode;arch=compute_80,code=sm_80 -- Found Torch: /opt/conda/lib/python3.8/site-packages/torch/lib/libtorch.so -- USE_CXX11_ABI=True -- The C compiler identification is GNU 9.4.0 -- Detecting C compiler ABI info -- Detecting C compiler ABI info - done -- Check for working C compiler: /usr/bin/cc - skipped -- Detecting C compile features -- Detecting C compile features - done -- Found Python: /opt/conda/bin/python3.8 (found version "3.8.13") found components: Interpreter -- Configuring done -- Generating done -- Build files have been written to: /workspace/code/FasterTransformer/build

之后,通過make使用12個線程去執(zhí)行編譯加快編譯速度:

make -j12

運(yùn)行過程:

[ 0%] Building CXX object src/fastertransformer/kernels/cutlass_kernels/CMakeFiles/cutlass_preprocessors.dir/cutlass_preprocessors.cc.o [ 0%] Building CXX object src/fastertransformer/utils/CMakeFiles/nvtx_utils.dir/nvtx_utils.cc.o [ 0%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/layernorm_kernels.dir/layernorm_kernels.cu.o [ 0%] Building CXX object src/fastertransformer/utils/CMakeFiles/cuda_utils.dir/cuda_utils.cc.o [ 0%] Building CXX object src/fastertransformer/utils/CMakeFiles/logger.dir/logger.cc.o [ 1%] Building CXX object 3rdparty/common/CMakeFiles/cuda_driver_wrapper.dir/cudaDriverWrapper.cpp.o [ 1%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/custom_ar_kernels.dir/custom_ar_kernels.cu.o [ 1%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/add_residual_kernels.dir/add_residual_kernels.cu.o [ 1%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/activation_kernels.dir/activation_kernels.cu.o [ 1%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/transpose_int8_kernels.dir/transpose_int8_kernels.cu.o [ 2%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/unfused_attention_kernels.dir/unfused_attention_kernels.cu.o [ 2%] Building CUDA object src/fastertransformer/kernels/CMakeFiles/bert_preprocess_kernels.dir/bert_preprocess_kernels.cu.o [ 2%] Linking CUDA device code CMakeFiles/cuda_driver_wrapper.dir/cmake_device_link.o [ 2%] Linking CXX static library ../../lib/libcuda_driver_wrapper.a [ 2%] Built target cuda_driver_wrapper ... [100%] Linking CXX executable ../../../bin/gptneox_example [100%] Built target gptj_triton_example [100%] Building CXX object examples/cpp/multi_gpu_gpt/CMakeFiles/multi_gpu_gpt_triton_example.dir/multi_gpu_gpt_triton_example.cc.o [100%] Built target gptj_example [100%] Building CXX object examples/cpp/multi_gpu_gpt/CMakeFiles/multi_gpu_gpt_interactive_example.dir/multi_gpu_gpt_interactive_example.cc.o [100%] Built target gptneox_example [100%] Linking CXX executable ../../../bin/multi_gpu_gpt_example [100%] Linking CXX executable ../../../bin/gptneox_triton_example [100%] Built target multi_gpu_gpt_example [100%] Built target gptneox_triton_example [100%] Linking CXX executable ../../../bin/multi_gpu_gpt_triton_example [100%] Linking CXX static library ../../../../lib/libth_t5.a [100%] Built target th_t5 [100%] Built target multi_gpu_gpt_triton_example [100%] Linking CXX executable ../../../bin/multi_gpu_gpt_async_example [100%] Linking CXX executable ../../../bin/multi_gpu_gpt_interactive_example [100%] Built target multi_gpu_gpt_async_example [100%] Linking CXX static library ../../../../lib/libth_parallel_gpt.a [100%] Built target th_parallel_gpt [100%] Linking CXX shared library ../../../lib/libth_transformer.so [100%] Built target multi_gpu_gpt_interactive_example [100%] Built target th_transformer

至此,構(gòu)建FasterTransformer完成。

安裝依賴包

安裝進(jìn)行模型推理所需要的依賴包。

cd /workspace/code/FasterTransformer pip install -r examples/pytorch/gpt/requirement.txt -i https://pypi.tuna.tsinghua.edu.cn/simple --trusted-host pypi.tuna.tsinghua.edu.cn

數(shù)據(jù)與模型準(zhǔn)備

模型

本文使用BLOOM模型進(jìn)行演示,它不需要學(xué)習(xí)位置編碼,并允許模型生成比訓(xùn)練中使用的序列長度更長的序列。BLOOM 也具有與 OpenAI GPT 相似的結(jié)構(gòu)。因此,像 OPT 一樣,F(xiàn)T 通過 GPT 類提供了 BLOOM 模型作為變體。用戶可以使用 examples/pytorch/gpt/utils/huggingface_bloom_convert.py 將預(yù)訓(xùn)練的 Huggingface BLOOM 模型轉(zhuǎn)換為 fastertransformer 文件格式。

我們使用bloomz-560m作為基礎(chǔ)模型。該模型是基于bloom-560m在xP3數(shù)據(jù)集上對多任務(wù)進(jìn)行了微調(diào)而得到的。

下載模型:

cd /workspace/model git lfs clone https://huggingface.co/bigscience/bloomz-560m

模型文件:

> ls -al bloomz-560m total 2198796 drwxr-xr-x 4 root root 4096 Apr 25 16:50 . drwxr-xr-x 4 root root 4096 Apr 26 07:06 .. drwxr-xr-x 9 root root 4096 Apr 25 16:53 .git -rw-r--r-- 1 root root 1489 Apr 25 16:50 .gitattributes -rw-r--r-- 1 root root 24778 Apr 25 16:50 README.md -rw-r--r-- 1 root root 715 Apr 25 16:50 config.json drwxr-xr-x 4 root root 4096 Apr 25 16:50 logs -rw-r--r-- 1 root root 1118459450 Apr 25 16:53 model.safetensors -rw-r--r-- 1 root root 1118530423 Apr 25 16:53 pytorch_model.bin -rw-r--r-- 1 root root 85 Apr 25 16:50 special_tokens_map.json -rw-r--r-- 1 root root 14500438 Apr 25 16:50 tokenizer.json -rw-r--r-- 1 root root 222 Apr 25 16:50 tokenizer_config.json

數(shù)據(jù)集

本文使用Lambada數(shù)據(jù)集,它是一個NLP(自然語言處理)任務(wù)中使用的數(shù)據(jù)集。它包含大量的英文句子,并要求模型去預(yù)測下一個單詞,這種任務(wù)稱為語言建模。Lambada數(shù)據(jù)集的特點(diǎn)是它的句子長度較長,并且包含更豐富的語義信息。因此,對于語言模型的評估來說是一個很好的測試數(shù)據(jù)集。

下載LAMBADA測試數(shù)據(jù)集。

cd /workspace/data wget -c https://github.com/cybertronai/bflm/raw/master/lambada_test.jsonl

數(shù)據(jù)格式如下:

{"text": "In my palm is a clear stone, and inside it is a small ivory statuette. A guardian angel.

"Figured if you're going to be out at night getting hit by cars, you might as well have some backup."

I look at him, feeling stunned. Like this is some sort of sign. But as I stare at Harlin, his mouth curved in a confident grin, I don't care about signs"}

{"text": "Give me a minute to change and I'll meet you at the docks." She'd forced those words through her teeth.

"No need to change. We won't be that long."

Shane gripped her arm and started leading her to the dock.

"I can make it there on my own, Shane"}

...

{"text": ""Only one source I know of that would be likely to cough up enough money to finance a phony sleep research facility and pay people big bucks to solve crimes in their dreams," Farrell concluded dryly.

"What can I say?" Ellis unfolded his arms and widened his hands. "Your tax dollars at work."

Before Farrell could respond, Leila's voice rose from inside the house.

"No insurance?" she wailed. "What do you mean you don't have any insurance"}

{"text": "Helen's heart broke a little in the face of Miss Mabel's selfless courage. She thought that because she was old, her life was of less value than the others'. For all Helen knew, Miss Mabel had a lot more years to live than she did. "Not going to happen," replied Helen"}

{"text": "Preston had been the last person to wear those chains, and I knew what I'd see and feel if they were slipped onto my skin-the Reaper's unending hatred of me. I'd felt enough of that emotion already in the amphitheater. I didn't want to feel anymore.

"Don't put those on me," I whispered. "Please."

Sergei looked at me, surprised by my low, raspy please, but he put down the chains"}

模型格式轉(zhuǎn)換

為了避免在模型并行時,拆分模型的額外工作,F(xiàn)asterTransformer 提供了一個工具,用于將模型從不同格式拆分和轉(zhuǎn)換為 FasterTransformer 二進(jìn)制文件格式;然后, FasterTransformer 可以直接以二進(jìn)制格式加載模型。

將Huggingface Transformer模型權(quán)重文件格式轉(zhuǎn)換成FasterTransformer格式。

cd /workspace/code/FasterTransformer

python examples/pytorch/gpt/utils/huggingface_bloom_convert.py

--input-dir /workspace/model/bloomz-560m

--output-dir /workspace/model/bloomz-560m-convert

--data-type fp16

-tp 1 -v

轉(zhuǎn)換過程:

python examples/pytorch/gpt/utils/huggingface_bloom_convert.py > --input-dir /workspace/model/bloomz-560m > --output-dir /workspace/model/bloomz-560m-convert > --data-type fp16 > -tp 1 -v ======================= Arguments ======================= - input_dir...........: /workspace/model/bloomz-560m - output_dir..........: /workspace/model/bloomz-560m-convert - tensor_para_size....: 1 - data_type...........: fp16 - processes...........: 1 - verbose.............: True - by_shard............: False ========================================================= loading from pytorch bin format model file num: 1 - model.wte.......................................: shape (250880, 1024) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.wte.bin - model.pre_decoder_layernorm.weight..............: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.pre_decoder_layernorm.weight.bin - model.pre_decoder_layernorm.bias................: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.pre_decoder_layernorm.bias.bin - model.layers.0.input_layernorm.weight...........: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.input_layernorm.weight.bin - model.layers.0.input_layernorm.bias.............: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.input_layernorm.bias.bin - model.layers.0.attention.query_key_value.weight.: shape (1024, 3, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.attention.query_key_value.weight.0.bin (0/1) - model.layers.0.attention.query_key_value.bias...: shape (3, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.attention.query_key_value.bias.0.bin (0/1) - model.layers.0.attention.dense.weight...........: shape (1024, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.attention.dense.weight.0.bin (0/1) - model.layers.0.attention.dense.bias.............: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.attention.dense.bias.bin - model.layers.0.post_attention_layernorm.weight..: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.post_attention_layernorm.weight.bin - model.layers.0.post_attention_layernorm.bias....: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.post_attention_layernorm.bias.bin - model.layers.0.mlp.dense_h_to_4h.weight.........: shape (1024, 4096) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.mlp.dense_h_to_4h.weight.0.bin (0/1) - model.layers.0.mlp.dense_h_to_4h.bias...........: shape (4096,) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.0.mlp.dense_h_to_4h.bias.0.bin (0/1) ... rs.22.mlp.dense_4h_to_h.bias.bin - model.layers.23.input_layernorm.weight..........: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.input_layernorm.weight.bin - model.layers.23.input_layernorm.bias............: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.input_layernorm.bias.bin - model.layers.23.attention.query_key_value.weight: shape (1024, 3, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.attention.query_key_value.weight.0.bin (0/1) - model.layers.23.attention.query_key_value.bias..: shape (3, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.attention.query_key_value.bias.0.bin (0/1) - model.layers.23.attention.dense.weight..........: shape (1024, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.attention.dense.weight.0.bin (0/1) - model.layers.23.attention.dense.bias............: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.attention.dense.bias.bin - model.layers.23.post_attention_layernorm.weight.: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.post_attention_layernorm.weight.bin - model.layers.23.post_attention_layernorm.bias...: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.post_attention_layernorm.bias.bin - model.layers.23.mlp.dense_h_to_4h.weight........: shape (1024, 4096) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.mlp.dense_h_to_4h.weight.0.bin (0/1) - model.layers.23.mlp.dense_h_to_4h.bias..........: shape (4096,) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.mlp.dense_h_to_4h.bias.0.bin (0/1) - model.layers.23.mlp.dense_4h_to_h.weight........: shape (4096, 1024) s | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.mlp.dense_4h_to_h.weight.0.bin (0/1) - model.layers.23.mlp.dense_4h_to_h.bias..........: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.layers.23.mlp.dense_4h_to_h.bias.bin - model.final_layernorm.weight....................: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.final_layernorm.weight.bin - model.final_layernorm.bias......................: shape (1024,) | saved at /workspace/model/bloomz-560m-convert/1-gpu/model.final_layernorm.bias.bin Checkpoint conversion (HF >> FT) has done (elapsed time: 17.07 sec)

轉(zhuǎn)換成FasterTransformer格式后的文件如下所示:

> tree bloomz-560m-convert/

bloomz-560m-convert/

└── 1-gpu

├── config.ini

├── model.final_layernorm.bias.bin

├── model.final_layernorm.weight.bin

├── model.layers.0.attention.dense.bias.bin

├── model.layers.0.attention.dense.weight.0.bin

├── model.layers.0.attention.query_key_value.bias.0.bin

├── model.layers.0.attention.query_key_value.weight.0.bin

├── model.layers.0.input_layernorm.bias.bin

├── model.layers.0.input_layernorm.weight.bin

├── model.layers.0.mlp.dense_4h_to_h.bias.bin

├── model.layers.0.mlp.dense_4h_to_h.weight.0.bin

├── model.layers.0.mlp.dense_h_to_4h.bias.0.bin

├── model.layers.0.mlp.dense_h_to_4h.weight.0.bin

├── model.layers.0.post_attention_layernorm.bias.bin

├── model.layers.0.post_attention_layernorm.weight.bin

├── model.layers.1.attention.dense.bias.bin

...

├── model.layers.8.post_attention_layernorm.weight.bin

├── model.layers.9.attention.dense.bias.bin

├── model.layers.9.attention.dense.weight.0.bin

├── model.layers.9.attention.query_key_value.bias.0.bin

├── model.layers.9.attention.query_key_value.weight.0.bin

├── model.layers.9.input_layernorm.bias.bin

├── model.layers.9.input_layernorm.weight.bin

├── model.layers.9.mlp.dense_4h_to_h.bias.bin

├── model.layers.9.mlp.dense_4h_to_h.weight.0.bin

├── model.layers.9.mlp.dense_h_to_4h.bias.0.bin

├── model.layers.9.mlp.dense_h_to_4h.weight.0.bin

├── model.layers.9.post_attention_layernorm.bias.bin

├── model.layers.9.post_attention_layernorm.weight.bin

├── model.pre_decoder_layernorm.bias.bin

├── model.pre_decoder_layernorm.weight.bin

└── model.wte.bin

模型基準(zhǔn)測試

下面使用官方提供的樣例進(jìn)行基準(zhǔn)測試對比下Huggingface Transformers和FasterTransformer的響應(yīng)時長。

Huggingface Transformers基準(zhǔn)測試

運(yùn)行命令:

# Run HF benchmark

CUDA_VISIBLE_DEVICES=1 python examples/pytorch/gpt/bloom_lambada.py

--tokenizer-path /workspace/model/bloomz-560m

--dataset-path /workspace/data/lambada_test.jsonl

--lib-path bulid/lib/libth_transformer.so

--test-hf

--show-progress

運(yùn)行過程:

python examples/pytorch/gpt/bloom_lambada.py > --tokenizer-path /workspace/model/bloomz-560m > --dataset-path /workspace/data/lambada_test.jsonl > --lib-path bulid/lib/libth_transformer.so > --test-hf > --show-progress =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 1 - pipeline_para_size.......: 1 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 8 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: None - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: bulid/lib/libth_transformer.so - test_hf..................: True - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= 100%|███████████████████████████████████████████████████████████████████████████████████████████████████████| 645/645 [02:33<00:00, 4.21it/s] Accuracy: 39.4722% (2034/5153) (elapsed time: 146.7230 sec)

FasterTransformer基準(zhǔn)測試

運(yùn)行命令:

# Run FT benchmark

python examples/pytorch/gpt/bloom_lambada.py

--checkpoint-path /workspace/model/bloomz-560m-convert/1-gpu

--tokenizer-path /workspace/model/bloomz-560m

--dataset-path /workspace/data/lambada_test.jsonl

--lib-path build/lib/libth_transformer.so

--show-progress

注:還可添加--data-type fp16以半精度方式加載模型,以減少模型對于顯存的消耗。

運(yùn)行過程:

python examples/pytorch/gpt/bloom_lambada.py > --checkpoint-path /workspace/model/bloomz-560m-convert/1-gpu > --tokenizer-path /workspace/model/bloomz-560m > --dataset-path /workspace/data/lambada_test.jsonl > --lib-path build/lib/libth_transformer.so > --show-progress =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 1 - pipeline_para_size.......: 1 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 8 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: /workspace/model/bloomz-560m-convert/1-gpu - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: build/lib/libth_transformer.so - test_hf..................: False - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= [FT][INFO] Load BLOOM model - head_num.................: 16 - size_per_head............: 64 - layer_num................: 24 - tensor_para_size.........: 1 - vocab_size...............: 250880 - start_id.................: 1 - end_id...................: 2 - weights_data_type........: fp16 - layernorm_eps............: 1e-05 - inference_data_type......: fp16 - lib_path.................: build/lib/libth_transformer.so - pipeline_para_size.......: 1 - shared_contexts_ratio....: 1.0 - int8_mode................: 0 [WARNING] gemm_config.in is not found; using default GEMM algo [FT][WARNING] Skip NCCL initialization since requested tensor/pipeline parallel sizes are equals to 1. [FT][INFO] Device NVIDIA A800 80GB PCIe 100%|███████████████████████████████████████████████████████████████████████████████████████████████████████| 645/645 [00:18<00:00, 34.58it/s] Accuracy: 39.4722% (2034/5153) (elapsed time: 13.0032 sec)

對比Huggingface Transformers和FasterTransformer

HF: Accuracy: 39.4722% (2034/5153) (elapsed time: 146.7230 sec) FT: Accuracy: 39.4722% (2034/5153) (elapsed time: 13.0032 sec)

可以看到它們的準(zhǔn)確率一致,但是FasterTransformer比Huggingface Transformers的推理速度更加快速。

模型并行推理(多卡)

對于像GPT3(175B)、OPT-175B這樣的大模型,單卡無法加載整個模型,因此,我們需要以分布式(模型并行)方式進(jìn)行大模型推理。模型并行推理有兩種方式:張量并行和流水線并行,前面已經(jīng)進(jìn)行過相應(yīng)的說明,這里不再贅述。

張量并行

模型轉(zhuǎn)換

如果想使用張量并行 (TP) 技術(shù)將模型拆分多個GPU進(jìn)行推理,可參考如下命令將模型轉(zhuǎn)換到2個GPU上進(jìn)行推理。

python examples/pytorch/gpt/utils/huggingface_bloom_convert.py --input-dir /workspace/model/bloomz-560m --output-dir /workspace/model/bloomz-560m-convert --data-type fp16 -tp 2 -v

轉(zhuǎn)換成張量并行度為2的FasterTransformer格式后的文件如下所示:

tree /workspace/model/bloomz-560m-convert/2-gpu /workspace/model/bloomz-560m-convert/2-gpu ├── config.ini ├── model.final_layernorm.bias.bin ├── model.final_layernorm.weight.bin ├── model.layers.0.attention.dense.bias.bin ├── model.layers.0.attention.dense.weight.0.bin ├── model.layers.0.attention.dense.weight.1.bin ├── model.layers.0.attention.query_key_value.bias.0.bin ├── model.layers.0.attention.query_key_value.bias.1.bin ├── model.layers.0.attention.query_key_value.weight.0.bin ├── model.layers.0.attention.query_key_value.weight.1.bin ├── model.layers.0.input_layernorm.bias.bin ├── model.layers.0.input_layernorm.weight.bin ├── model.layers.0.mlp.dense_4h_to_h.bias.bin ├── model.layers.0.mlp.dense_4h_to_h.weight.0.bin ├── model.layers.0.mlp.dense_4h_to_h.weight.1.bin ├── model.layers.0.mlp.dense_h_to_4h.bias.0.bin ├── model.layers.0.mlp.dense_h_to_4h.bias.1.bin ├── model.layers.0.mlp.dense_h_to_4h.weight.0.bin ├── model.layers.0.mlp.dense_h_to_4h.weight.1.bin ├── model.layers.0.post_attention_layernorm.bias.bin ├── model.layers.0.post_attention_layernorm.weight.bin ... ├── model.layers.9.attention.dense.bias.bin ├── model.layers.9.attention.dense.weight.0.bin ├── model.layers.9.attention.dense.weight.1.bin ├── model.layers.9.attention.query_key_value.bias.0.bin ├── model.layers.9.attention.query_key_value.bias.1.bin ├── model.layers.9.attention.query_key_value.weight.0.bin ├── model.layers.9.attention.query_key_value.weight.1.bin ├── model.layers.9.input_layernorm.bias.bin ├── model.layers.9.input_layernorm.weight.bin ├── model.layers.9.mlp.dense_4h_to_h.bias.bin ├── model.layers.9.mlp.dense_4h_to_h.weight.0.bin ├── model.layers.9.mlp.dense_4h_to_h.weight.1.bin ├── model.layers.9.mlp.dense_h_to_4h.bias.0.bin ├── model.layers.9.mlp.dense_h_to_4h.bias.1.bin ├── model.layers.9.mlp.dense_h_to_4h.weight.0.bin ├── model.layers.9.mlp.dense_h_to_4h.weight.1.bin ├── model.layers.9.post_attention_layernorm.bias.bin ├── model.layers.9.post_attention_layernorm.weight.bin ├── model.pre_decoder_layernorm.bias.bin ├── model.pre_decoder_layernorm.weight.bin └── model.wte.bin 0 directories, 438 files

張量并行模型推理

運(yùn)行命令:

mpirun -n 2 --allow-run-as-root python examples/pytorch/gpt/bloom_lambada.py

--checkpoint-path /workspace/model/bloomz-560m-convert/2-gpu

--tokenizer-path /workspace/model/bloomz-560m

--dataset-path /workspace/data/lambada_test.jsonl

--lib-path build/lib/libth_transformer.so

--tensor-para-size 2

--pipeline-para-size 1

--show-progress

運(yùn)行過程:

mpirun -n 2 --allow-run-as-root python examples/pytorch/gpt/bloom_lambada.py > --checkpoint-path /workspace/model/bloomz-560m-convert/2-gpu > --tokenizer-path /workspace/model/bloomz-560m > --dataset-path /workspace/data/lambada_test.jsonl > --lib-path build/lib/libth_transformer.so > --tensor-para-size 2 > --pipeline-para-size 1 > --show-progress =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 2 - pipeline_para_size.......: 1 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 8 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: /workspace/model/bloomz-560m-convert/2-gpu - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: build/lib/libth_transformer.so - test_hf..................: False - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 2 - pipeline_para_size.......: 1 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 8 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: /workspace/model/bloomz-560m-convert/2-gpu - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: build/lib/libth_transformer.so - test_hf..................: False - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= [FT][INFO] Load BLOOM model - head_num.................: 16 - size_per_head............: 64 - layer_num................: 24 - tensor_para_size.........: 2 - vocab_size...............: 250880 - start_id.................: 1 - end_id...................: 2 - weights_data_type........: fp16 - layernorm_eps............: 1e-05 - inference_data_type......: fp16 - lib_path.................: build/lib/libth_transformer.so - pipeline_para_size.......: 1 - shared_contexts_ratio....: 1.0 - int8_mode................: 0 [FT][INFO] Load BLOOM model - head_num.................: 16 - size_per_head............: 64 - layer_num................: 24 - tensor_para_size.........: 2 - vocab_size...............: 250880 - start_id.................: 1 - end_id...................: 2 - weights_data_type........: fp16 - layernorm_eps............: 1e-05 - inference_data_type......: fp16 - lib_path.................: build/lib/libth_transformer.so - pipeline_para_size.......: 1 - shared_contexts_ratio....: 1.0 - int8_mode................: 0 world_size: 2 world_size: 2 [WARNING] gemm_config.in is not found; using default GEMM algo [WARNING] gemm_config.in is not found; using default GEMM algo [FT][INFO] NCCL initialized rank=0 world_size=2 tensor_para=NcclParam[rank=0, world_size=2, nccl_comm=0x5556305627d0] pipeline_para=NcclParam[rank=0, world_size=1, nccl_comm=0x5556305d5d20] [FT][INFO] Device NVIDIA A800 80GB PCIe [FT][INFO] NCCL initialized rank=1 world_size=2 tensor_para=NcclParam[rank=1, world_size=2, nccl_comm=0x55b9600a9ca0] pipeline_para=NcclParam[rank=0, world_size=1, nccl_comm=0x55b96011cff0] [FT][INFO] Device NVIDIA A800 80GB PCIe /workspace/code/FasterTransformer/examples/pytorch/gpt/utils/gpt.py SyntaxWarning: assertion is always true, perhaps remove parentheses? assert(self.pre_embed_idx < self.post_embed_idx, "Pre decoder embedding index should be lower than post decoder embedding index.") 0%| | 0/645 [00:00

流水線并行

模型轉(zhuǎn)換

如果僅使用流水線并行,不使用張量并行,則tp設(shè)置為1即可,如果需要同時進(jìn)行張量并行和流水線并行,則需要將tp設(shè)置成張量并行度大小。具體命令參考前面的模型轉(zhuǎn)換部分。

流水線并行模型推理

運(yùn)行命令:

CUDA_VISIBLE_DEVICES=1,2 mpirun -n 2 --allow-run-as-root python examples/pytorch/gpt/bloom_lambada.py --checkpoint-path /workspace/model/bloomz-560m-convert/1-gpu --tokenizer-path /workspace/model/bloomz-560m --dataset-path /workspace/data/lambada_test.jsonl --lib-path build/lib/libth_transformer.so --tensor-para-size 1 --pipeline-para-size 2 --batch-size 1 --show-progress

運(yùn)行過程:

CUDA_VISIBLE_DEVICES=1,2 mpirun -n 2 --allow-run-as-root python examples/pytorch/gpt/bloom_lambada.py > --checkpoint-path /workspace/model/bloomz-560m-convert/1-gpu > --tokenizer-path /workspace/model/bloomz-560m > --dataset-path /workspace/data/lambada_test.jsonl > --lib-path build/lib/libth_transformer.so > --tensor-para-size 1 > --pipeline-para-size 2 > --batch-size 1 > --show-progress =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 1 - pipeline_para_size.......: 2 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 1 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: /workspace/model/bloomz-560m-convert/1-gpu - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: build/lib/libth_transformer.so - test_hf..................: False - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= =================== Arguments =================== - num_heads................: None - size_per_head............: None - inter_size...............: None - num_layers...............: None - vocab_size...............: None - tensor_para_size.........: 1 - pipeline_para_size.......: 2 - remove_padding...........: True - shared_contexts_ratio....: 1.0 - batch_size...............: 1 - output_length............: 32 - beam_width...............: 1 - top_k....................: 1 - top_p....................: 1.0 - temperature..............: 1.0 - len_penalty..............: 0.0 - beam_search_diversity_rate: 0.0 - start_id.................: 0 - end_id...................: 2 - repetition_penalty.......: 1.0 - random_seed..............: None - return_cum_log_probs.....: 0 - checkpoint_path..........: /workspace/model/bloomz-560m-convert/1-gpu - dataset_path.............: /workspace/data/lambada_test.jsonl - output_path..............: None - tokenizer_path...........: /workspace/model/bloomz-560m - lib_path.................: build/lib/libth_transformer.so - test_hf..................: False - acc_threshold............: None - show_progress............: True - inference_data_type......: None - weights_data_type........: None - int8_mode................: 0 ================================================= [FT][INFO] Load BLOOM model - head_num.................: 16 - size_per_head............: 64 - layer_num................: 24 - tensor_para_size.........: 1 - vocab_size...............: 250880 - start_id.................: 1 - end_id...................: 2 - weights_data_type........: fp16 - layernorm_eps............: 1e-05 - inference_data_type......: fp16 - lib_path.................: build/lib/libth_transformer.so - pipeline_para_size.......: 2 - shared_contexts_ratio....: 1.0 - int8_mode................: 0 [FT][INFO] Load BLOOM model - head_num.................: 16 - size_per_head............: 64 - layer_num................: 24 - tensor_para_size.........: 1 - vocab_size...............: 250880 - start_id.................: 1 - end_id...................: 2 - weights_data_type........: fp16 - layernorm_eps............: 1e-05 - inference_data_type......: fp16 - lib_path.................: build/lib/libth_transformer.so - pipeline_para_size.......: 2 - shared_contexts_ratio....: 1.0 - int8_mode................: 0 world_size: 2 world_size: 2 [WARNING] gemm_config.in is not found; using default GEMM algo [WARNING] gemm_config.in is not found; using default GEMM algo [FT][INFO] NCCL initialized rank=0 world_size=2 tensor_para=NcclParam[rank=0, world_size=1, nccl_comm=0x5557a53dc1b0] pipeline_para=NcclParam[rank=0, world_size=2, nccl_comm=0x5557a5444df0] [FT][INFO] NCCL initialized rank=1 world_size=2 tensor_para=NcclParam[rank=0, world_size=1, nccl_comm=0x560cf3452820] pipeline_para=NcclParam[rank=1, world_size=2, nccl_comm=0x560cf34bb190] [FT][INFO] Device NVIDIA A800 80GB PCIe [FT][INFO] Device NVIDIA A800 80GB PCIe 100%|██████████| 5153/5153 [01:51<00:00, 46.12it/s] current process id: 47861 Accuracy: 39.4527% (2033/5153) (elapsed time: 102.1145 sec) current process id: 47862 Accuracy: 39.4527% (2033/5153) (elapsed time: 102.3391 sec)

單卡、流水線并行、張量并行對比

下面在BatchSize為1的情況下,對單卡、張量并行、流水線并行進(jìn)行了簡單的測試,僅供參考(由于測試時,有其他訓(xùn)練任務(wù)也在運(yùn)行,可能對結(jié)果會產(chǎn)生干擾)。

TP=1、PP=1、BZ=1:

累積響應(yīng)時長: 100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 5153/5153 [02:21<00:00, 36.31it/s] current process id: 47645 Accuracy: 39.4527% (2033/5153) (elapsed time: 132.2274 sec) 顯存占用: +-----------------------------------------------------------------------------+ | Processes: | | GPU GI CI PID Type Process name GPU Memory | | ID ID Usage | |=============================================================================| | 0 N/A N/A 8356 C python 1740MiB | +-----------------------------------------------------------------------------+

TP=2、PP=1、BZ=1:

累積響應(yīng)時長: 100%|██████████| 5153/5153 [00:35<00:00, 144.80it/s]current process id: 49111 Accuracy: 39.4916% (2035/5153) (elapsed time: 26.1384 sec) current process id: 49112 Accuracy: 39.4916% (2035/5153) (elapsed time: 26.5110 sec) 顯存占用: +-----------------------------------------------------------------------------+ | Processes: | | GPU GI CI PID Type Process name GPU Memory | | ID ID Usage | |=============================================================================| | 1 N/A N/A 41339 C python 1692MiB | | 2 N/A N/A 41340 C python 1692MiB | +-----------------------------------------------------------------------------+

TP=1、PP=2、BZ=1:

累積響應(yīng)時長: 100%|██████████| 5153/5153 [00:33<00:00, 153.92it/s]current process id: 48755 Accuracy: 39.4527% (2033/5153) (elapsed time: 24.1695 sec) current process id: 48754 Accuracy: 39.4527% (2033/5153) (elapsed time: 24.4391 sec) 顯存占用: +-----------------------------------------------------------------------------+ | Processes: | | GPU GI CI PID Type Process name GPU Memory | | ID ID Usage | |=============================================================================| | 1 N/A N/A 4001 C python 1952MiB | | 2 N/A N/A 4002 C python 1952MiB | +-----------------------------------------------------------------------------+

TP=1、PP=3、BZ=1:

累積響應(yīng)時長: 100%|██████████| 5153/5153 [00:33<00:00, 152.46it/s]current process id: 48220 Accuracy: 0.0000% (0/5153) (elapsed time: 24.9212 sec) 100%|██████████| 5153/5153 [00:33<00:00, 153.63it/s]current process id: 48219 Accuracy: 39.4527% (2033/5153) (elapsed time: 24.9767 sec) current process id: 48221 Accuracy: 39.4527% (2033/5153) (elapsed time: 24.3489 sec) 顯存占用: +-----------------------------------------------------------------------------+ | Processes: | | GPU GI CI PID Type Process name GPU Memory | | ID ID Usage | |=============================================================================| | 0 N/A N/A 57588 C python 1420MiB | | 1 N/A N/A 57589 C python 1468MiB | | 2 N/A N/A 57590 C python 1468MiB | +-----------------------------------------------------------------------------+

結(jié)語

本文給大家簡要介紹了FasterTransformer的基本概念以及如何使用FasterTransformer進(jìn)行單機(jī)及分布式模型推理,希望能夠幫助大家快速了解FasterTransformer。

審核編輯:劉清

-

解碼器

+關(guān)注

關(guān)注

9文章

1143瀏覽量

40720 -

編碼器

+關(guān)注

關(guān)注

45文章

3639瀏覽量

134435 -

NVIDIA

+關(guān)注

關(guān)注

14文章

4981瀏覽量

102996 -

GPT

+關(guān)注

關(guān)注

0文章

354瀏覽量

15345 -

ChatGPT

+關(guān)注

關(guān)注

29文章

1560瀏覽量

7599

發(fā)布評論請先 登錄

相關(guān)推薦

針對Arm嵌入式設(shè)備優(yōu)化的神經(jīng)網(wǎng)絡(luò)推理引擎

壓縮模型會加速推理嗎?

如何用PyArmNN加速樹莓派上的ML推理

HarmonyOS:使用MindSpore Lite引擎進(jìn)行模型推理

海思AI芯片(Hi3519A/3559A)方案學(xué)習(xí)(十五)基于nnie引擎進(jìn)行推理的仿真代碼淺析

如何在推理引擎中脫穎而出

NVIDIA FasterTransformer庫的概述及好處

使用推理服務(wù)器加速大型Transformer模型的推理

在推理引擎中去除TOPS的頂部

總結(jié)FasterTransformer Encoder(BERT)的cuda相關(guān)優(yōu)化技巧

總結(jié)FasterTransformer Encoder優(yōu)化技巧

淺析推理加速引擎FasterTransformer

淺析推理加速引擎FasterTransformer

評論