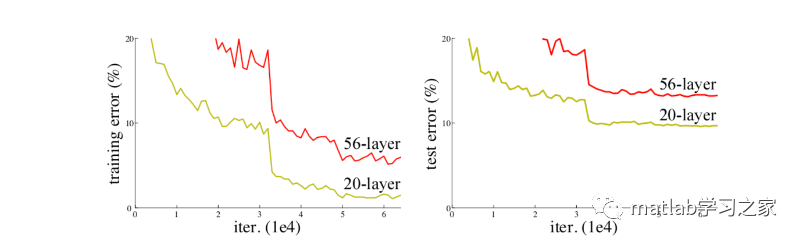

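In a CNN, the input is the image matrix, which is the most basic form of features, and the whole network is an information-extraction process that moves from low-level features to highly abstract ones. More layers let the network extract features at more levels of abstraction, and the deeper the network, the more abstract and semantically meaningful those features tend to be. But is a deeper network really always better? The accompanying figure compares conventional networks of different depths, and it shows that adding depth does not necessarily improve results.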

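For conventional CNNs, adding depth easily leads to vanishing or exploding gradients. The usual remedies are normalized initialization and intermediate normalization layers, which let deep networks start to converge. That, however, exposes another problem, degradation: as layers are added, accuracy saturates and then drops, even on the training set, so the drop cannot be blamed on overfitting. Residual networks were proposed to address this.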

1. Algorithm Principles

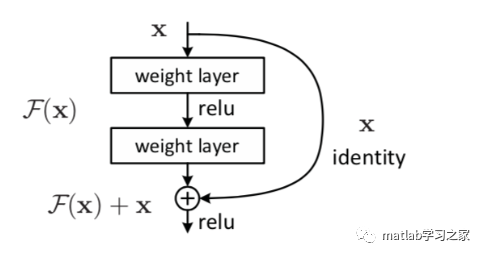

Residual networks are easier to optimize because they add shortcut connections. A few stacked layers together with one shortcut connection form a residual block, as shown in the figure.
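In the original ResNet paper (He et al., "Deep Residual Learning for Image Recognition"), a residual block with input $x$ is written as the first line below: the stacked layers learn the residual $\mathcal{F}(x) = \mathcal{H}(x) - x$ of the desired mapping $\mathcal{H}(x)$, and the shortcut adds $x$ back unchanged. Differentiating it (second line) shows that the shortcut also contributes an identity term $I$ to the gradient, so the gradient flowing back through the block does not depend only on the stacked layers' Jacobian.

$$
\begin{aligned}
y &= \mathcal{F}(x, \{W_i\}) + x \\
\frac{\partial y}{\partial x} &= \frac{\partial \mathcal{F}(x, \{W_i\})}{\partial x} + I
\end{aligned}
$$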

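The main difference between a plain network and a deep residual network is that the residual network has many bypass branches that feed a block's input directly to later layers, so those layers can learn the residual directly. These bypasses are called shortcuts. Conventional convolutional or fully connected layers tend to lose or degrade some information as it propagates through them. ResNet mitigates this to some extent: by routing the input around the layers straight to the output, it preserves the information, and the network only has to learn the difference between input and output, which simplifies the learning target.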
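A toy illustration of the last point in plain MATLAB: a residual block on a 3-element vector, with the residual branch taken as $\mathcal{F}(x) = W_2\,\mathrm{ReLU}(W_1 x)$ (the two-layer form from the ResNet paper, leaving out the ReLU the paper applies after the addition). The input and weights are made-up values, and the names `reluFn`, `resBlock` and `xDemo` are illustrative. With all-zero weights the block simply passes its input through, so the stacked layers only need to learn a correction on top of the identity.

```matlab
% Toy residual block on a vector (illustration only, not the network
% built below): y = F(x) + x with F(x) = W2 * relu(W1 * x)
reluFn = @(z) max(z, 0);
resBlock = @(x, W1, W2) W2 * reluFn(W1 * x) + x;

xDemo = [0.5; -1.2; 2.0];          % made-up input

% All-zero weights: the residual branch outputs 0, so the block is the identity
yIdentity = resBlock(xDemo, zeros(3), zeros(3));

% Small nonzero weights: the branch only adds a correction to xDemo
yResidual = resBlock(xDemo, 0.1*eye(3), 0.1*eye(3));

disp([xDemo yIdentity yResidual])
```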

2. Code Walkthrough

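The example below builds a 19-layer ResNet (counting every layer in the layer graph: input, convolution, batch normalization, ReLU, addition, fully connected and regression output) for electric load forecasting.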

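```matlab
%% Load and preprocess the data
clc
clear
close all
load Train.mat          % expected to contain a table named Train
% load Test.mat
% One-hot encode the weekend flag and the month
Train.weekend = dummyvar(Train.weekend);
Train.month = dummyvar(Train.month);
Train = movevars(Train,{'weekend','month'},'After','demandLag');
Train.ts = [];          % drop the timestamp column
Train(1,:) = [];        % drop the first row
y = Train.demand;       % target: load demand
% Features: table variables 2 to 5; the [4 24] input size used below
% assumes these are four single-column variables
x = Train{:,2:5};
% The first 1000 rows are the training data. Fit the [0,1] scaling on
% those rows only, so the test rows (1001:1170) do not leak into it
nTrain = 1000;
[xnorm,xopt] = mapminmax(x(1:nTrain,:)',0,1);
[ynorm,yopt] = mapminmax(y(1:nTrain)',0,1);
```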
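`mapminmax(x',0,1)` rescales each row (one feature, since `x` is transposed) to [0, 1] using that row's minimum and maximum, and `mapminmax('apply',...,xopt)` reuses the stored settings on new data. Below is a plain-MATLAB sketch of the same idea on made-up numbers (`Xfit`, `Xnew` and `scale01` are illustrative names). It also shows that data scaled with the training settings can fall outside [0, 1]. Unlike a production routine, it does not guard against a constant row, whose zero range would cause a division by zero.

```matlab
% Row-wise [0,1] min-max scaling: rows = features, columns = time steps,
% the same layout that is passed to mapminmax in the script
Xfit = [1 2 3 4; 10 20 30 40];     % made-up training data
Xnew = [2.5 5; 25 50];             % made-up later data

rowMin = min(Xfit, [], 2);         % per-feature minimum
rowMax = max(Xfit, [], 2);         % per-feature maximum
scale01 = @(X) (X - rowMin) ./ (rowMax - rowMin);   % implicit expansion (R2016b+)

XfitScaled = scale01(Xfit);        % every row spans exactly [0, 1]
XnewScaled = scale01(Xnew);        % reuses the training min/max; can exceed 1

disp(XnewScaled)
```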

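```matlab
k = 24; % lag length: number of time steps per input window
% Turn the series into 2-D "images": each sample is a
% 4 (features) x 24 (time steps) window of the normalized inputs
nWin = length(ynorm) - k;
Train_xNorm = cell(1,nWin);
Train_yNorm = zeros(1,nWin);
Train_y = zeros(1,nWin);        % numeric, so it can serve as the regression response
for i = 1:nWin
    Train_xNorm{i} = xnorm(:,i:i+k-1);
    Train_yNorm(i) = ynorm(i+k-1);   % target: demand at the window's last time step
    Train_y(i) = y(i+k-1);
end
Train_x = Train_xNorm';
```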
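The loop slides a 24-step window along the 4-row feature matrix, so each training sample is a 4×24 single-channel "image" whose target is the demand at the window's last step. Here is a sketch of the same windowing in plain MATLAB on made-up data, storing the windows in a height-by-width-by-channels-by-observations numeric array; `trainNetwork` also documents this 4-D array layout (with a numeric response vector) as an alternative to the table of cells used in this article. The names `nFeat`, `nSteps`, `kWin`, `series` and `X4d` are illustrative.

```matlab
% Hypothetical sizes: 4 features, 30 time steps, windows of 24 steps
nFeat = 4;
nSteps = 30;
kWin = 24;
series = reshape(1:nFeat*nSteps, nFeat, nSteps);   % made-up data; column t is time step t

nObs = nSteps - kWin;                 % same count as length(ynorm)-k in the script
X4d = zeros(nFeat, kWin, 1, nObs);    % height x width x channels x observations
for n = 1:nObs
    X4d(:,:,1,n) = series(:, n:n+kWin-1);   % window covering steps n .. n+kWin-1
end
targetIdx = (1:nObs) + kWin - 1;      % each window's target is its last time step

disp(size(X4d))
```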

```matlab
% Test data: rows 1001:1170, scaled with the training settings
ytest = Train.demand(1001:1170);
xtest = Train{1001:1170,2:5};
xtestnorm = mapminmax('apply',xtest',xopt);
ytestnorm = mapminmax('apply',ytest',yopt);
% xtestnorm = [xtestnorm; Train.weekend(1001:1170,:)'; Train.month(1001:1170,:)'];
nTestWin = length(ytestnorm) - k;
Test_xNorm = cell(1,nTestWin);
Test_yNorm = zeros(1,nTestWin);
Test_y = zeros(1,nTestWin);
for i = 1:nTestWin
    Test_xNorm{i} = xtestnorm(:,i:i+k-1);
    Test_yNorm(i) = ytestnorm(i+k-1);
    Test_y(i) = ytest(i+k-1);
end
Test_x = Test_xNorm';
% Table inputs for trainNetwork/predict: column 1 holds the 4x24 windows,
% column 2 (training only) holds the responses
x_train = table(Train_x,Train_y');
x_test = table(Test_x);

%% Training/validation split (disabled)
% If enabled, rebuild Train_x and x_train afterwards, because x_train is
% created above from the full set of training windows
% TrainSampleLength = length(Train_yNorm);
% validatasize = floor(TrainSampleLength * 0.1);
% Validata_xNorm = Train_xNorm(:,end-validatasize+1:end);
% Validata_yNorm = Train_yNorm(:,end-validatasize+1:end);
% Validata_y = Train_y(end-validatasize+1:end);
%
% Train_xNorm = Train_xNorm(:,1:end-validatasize);
% Train_yNorm = Train_yNorm(:,1:end-validatasize);
% Train_y = Train_y(1:end-validatasize);
```

```matlab
%% Build the residual network
lgraph = layerGraph();
% Input layer and the first convolution (4x24 single-channel input)
tempLayers = [
    imageInputLayer([4 24],"Name","imageinput")
    convolution2dLayer([3 3],32,"Name","conv","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);
% Block 1 main path: batchnorm -> relu
tempLayers = [
    batchNormalizationLayer("Name","batchnorm")
    reluLayer("Name","relu")];
lgraph = addLayers(lgraph,tempLayers);
% Block 1 merge (addition), then the convolution of block 2
tempLayers = [
    additionLayer(2,"Name","addition")
    convolution2dLayer([3 3],32,"Name","conv_1","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);
% Block 2 main path
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_1")
    reluLayer("Name","relu_1")];
lgraph = addLayers(lgraph,tempLayers);
% Block 2 merge, then the convolution of block 3
tempLayers = [
    additionLayer(2,"Name","addition_1")
    convolution2dLayer([3 3],32,"Name","conv_2","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);
% Block 3 main path
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_2")
    reluLayer("Name","relu_2")];
lgraph = addLayers(lgraph,tempLayers);
% Block 3 merge, then the convolution of block 4
tempLayers = [
    additionLayer(2,"Name","addition_2")
    convolution2dLayer([3 3],32,"Name","conv_3","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);
% Block 4 main path
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_3")
    reluLayer("Name","relu_3")];
lgraph = addLayers(lgraph,tempLayers);
% Block 4 merge and the regression head
tempLayers = [
    additionLayer(2,"Name","addition_3")
    fullyConnectedLayer(1,"Name","fc")
    regressionLayer("Name","regressionoutput")];
lgraph = addLayers(lgraph,tempLayers);
% Clean up the helper variable
clear tempLayers;
% Wire each block: conv -> batchnorm (main path), conv -> addition/in2
% (shortcut) and relu -> addition/in1. "same" padding keeps every
% feature map 4x24, so the two addition inputs have matching sizes
lgraph = connectLayers(lgraph,"conv","batchnorm");
lgraph = connectLayers(lgraph,"conv","addition/in2");
lgraph = connectLayers(lgraph,"relu","addition/in1");
lgraph = connectLayers(lgraph,"conv_1","batchnorm_1");
lgraph = connectLayers(lgraph,"conv_1","addition_1/in2");
lgraph = connectLayers(lgraph,"relu_1","addition_1/in1");
lgraph = connectLayers(lgraph,"conv_2","batchnorm_2");
lgraph = connectLayers(lgraph,"conv_2","addition_2/in2");
lgraph = connectLayers(lgraph,"relu_2","addition_2/in1");
lgraph = connectLayers(lgraph,"conv_3","batchnorm_3");
lgraph = connectLayers(lgraph,"conv_3","addition_3/in2");
lgraph = connectLayers(lgraph,"relu_3","addition_3/in1");
% Visualize and check the architecture
plot(lgraph);
analyzeNetwork(lgraph);
```
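To make the wiring concrete, here is a simplified forward pass of one block in plain MATLAB. Assumptions: a single channel instead of 32 filters, a made-up 3×3 mean-filter kernel, and a batch-normalization stand-in that just standardizes the single feature map without learned scale and offset (the real layer normalizes each channel over the mini-batch during training). `conv2` flips the kernel (true convolution), whereas a convolution layer takes a sliding dot product; with a symmetric kernel the two coincide. The names `Xin`, `K`, `C`, `Cbn` and `A` are illustrative.

```matlab
% Simplified forward pass of one block of the layer graph above
% (single channel; the real layers use 32 learned filters)
reluFn = @(z) max(z, 0);
Xin = rand(4, 24);              % made-up 4x24 input
K = ones(3) / 9;                % made-up symmetric 3x3 kernel (mean filter)

C = conv2(Xin, K, 'same');              % 'same' keeps the map 4x24, like Padding="same"
Cbn = (C - mean(C(:))) / std(C(:));     % batch-norm stand-in: standardize, no learned scale/offset
mainPath = reluFn(Cbn);                 % batchnorm -> relu, feeds addition/in1
shortcut = C;                           % conv output, feeds addition/in2
A = mainPath + shortcut;                % additionLayer(2): element-wise sum

disp(size(A))
```

Note that in this layer graph each shortcut starts at a convolution output and bypasses only batch normalization and ReLU, whereas in the ResNet paper's basic block the shortcut bypasses two weight layers.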

```matlab
%% Training options
maxEpochs = 60;
miniBatchSize = 20;
options = trainingOptions('adam', ...
    'MaxEpochs',maxEpochs, ...
    'MiniBatchSize',miniBatchSize, ...
    'InitialLearnRate',0.01, ...
    'GradientThreshold',1, ...      % clip each parameter's gradient to an L2 norm of at most 1
    'Shuffle','never', ...          % keep the chronological order of the windows
    'Plots','training-progress', ...
    'Verbose',0);
```
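A quick check of what these settings imply, assuming the 1000 - 24 = 976 training windows built above (validation split left disabled) and `trainNetwork`'s documented behaviour of discarding the samples that do not fill the final complete mini-batch of each epoch. With `'Shuffle','never'` the order never changes, so the skipped samples would presumably be the same trailing windows in every epoch. Variable names here are illustrative.

```matlab
nObsTrain = 1000 - 24;      % training windows: length(ynorm) - k
batchSz = 20;               % MiniBatchSize
nEpochs = 60;               % MaxEpochs
itersPerEpoch = floor(nObsTrain / batchSz);       % complete mini-batches only
leftover = nObsTrain - itersPerEpoch * batchSz;   % samples skipped each epoch
totalIters = itersPerEpoch * nEpochs;
fprintf('%d iterations per epoch, %d in total, %d samples skipped per epoch\n', ...
    itersPerEpoch, totalIters, leftover);
```

If those most recent windows matter, a mini-batch size that divides 976 evenly (such as 16) or `'Shuffle','every-epoch'` would avoid always skipping the same samples.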

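```matlab
% Train the network
net = trainNetwork(x_train,lgraph,options);
% Predict the test windows. The responses in x_train are raw demand
% values, so the predictions are already in the original units
Predict_yNorm = predict(net,x_test);
Predict_y = double(Predict_yNorm);
% Plot actual vs. predicted load in a new figure
figure
plot(Test_y)
hold on
plot(Predict_y)
legend('Actual','Predicted')
```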
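The script only compares the two curves visually. A minimal sketch of three common error metrics (RMSE, MAE, MAPE) in plain MATLAB, on made-up numbers; in the script the inputs would be `Test_y` and `Predict_y`. `Test_y` is a row vector while `predict` returns a column vector, so both are forced into columns with `(:)` first: subtracting them directly would, through implicit expansion, produce an N-by-N matrix instead of N errors. The variable names are illustrative.

```matlab
% Made-up example values; in the script these would be Test_y (row
% vector) and Predict_y (column vector returned by predict)
actualLoad = [100 200 300 400];
predictedLoad = [110; 190; 330; 400];

err = predictedLoad(:) - actualLoad(:);            % (:) makes both column vectors
rmseVal = sqrt(mean(err.^2));                       % root-mean-square error
maeVal = mean(abs(err));                            % mean absolute error
mapeVal = 100 * mean(abs(err ./ actualLoad(:)));    % MAPE in %, assumes no zero actual values

fprintf('RMSE = %.4f, MAE = %.4f, MAPE = %.2f%%\n', rmseVal, maeVal, mapeVal);
```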

MATLAB Residual Neural Network Design
