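As we saw in Section 19.2, evaluating a hyperparameter configuration is expensive, so we might have to wait hours or even days before random search returns a good configuration. In practice, we often have access to a pool of resources, such as multiple GPUs on the same machine or multiple machines with a single GPU each. This raises the question: how do we distribute random search efficiently?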

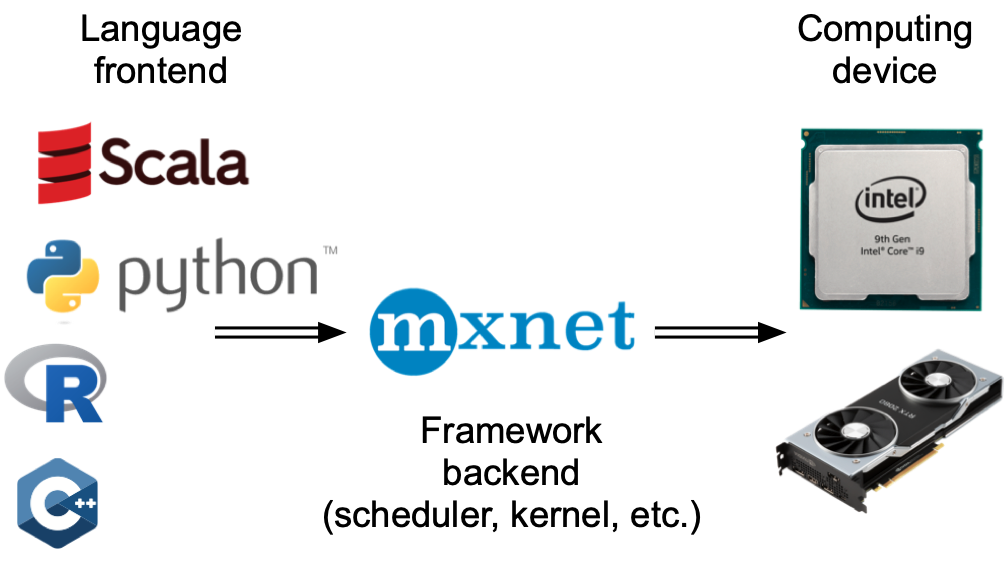

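In general, we distinguish between synchronous and asynchronous parallel hyperparameter optimization (see Fig. 19.3.1). In the synchronous setting, we wait for all concurrently running trials to finish before we start the next batch. Consider configuration spaces that contain hyperparameters such as the number of filters or the number of layers of a deep neural network. Configurations with more filters or layers will naturally take more time to finish, and all other trials in the same batch have to wait at synchronization points (grey area in Fig. 19.3.1) before we can continue the optimization process.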

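In the asynchronous setting, we schedule a new trial as soon as resources become available. This exploits our resources optimally, since we avoid any synchronization overhead. For random search, each new hyperparameter configuration is chosen independently of all others, and in particular without using observations from any prior evaluation. This means that random search can be parallelized asynchronously in a trivial way. This is not straightforward for more sophisticated methods that make decisions based on previous observations (see Section 19.5). While we need access to more resources than in the sequential setting, asynchronous random search exhibits a linear speed-up: a given performance is reached K times faster if K trials can be run in parallel.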

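Fig. 19.3.1 Distributing the hyperparameter optimization process either synchronously or asynchronously. Compared to the sequential setting, we can reduce the overall wall-clock time while keeping the total compute constant. Synchronous scheduling might lead to idling workers in the case of stragglers.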
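The difference between the two scheduling modes can be sketched with a small simulation. This is only an illustration: the trial durations are hypothetical values drawn at random, and the helper names `synchronous_makespan` and `asynchronous_makespan` are not part of any library.

```python
import heapq
import random


def synchronous_makespan(durations, n_workers):
    """Wall-clock time when trials run in batches of n_workers.

    Each batch ends only when its slowest trial has finished.
    """
    total = 0.0
    for start in range(0, len(durations), n_workers):
        total += max(durations[start:start + n_workers])
    return total


def asynchronous_makespan(durations, n_workers):
    """Wall-clock time when a free worker immediately takes the next trial."""
    # Min-heap holding the time at which each worker becomes free
    free_at = [0.0] * n_workers
    heapq.heapify(free_at)
    for duration in durations:
        start = heapq.heappop(free_at)  # earliest available worker
        heapq.heappush(free_at, start + duration)
    return max(free_at)


random.seed(0)
# Hypothetical trial durations in seconds; some trials are stragglers
durations = [random.choice([60, 90, 120, 300]) for _ in range(12)]

sequential = sum(durations)
sync_time = synchronous_makespan(durations, n_workers=4)
async_time = asynchronous_makespan(durations, n_workers=4)
print(f"sequential: {sequential}s, "
      f"synchronous: {sync_time}s, asynchronous: {async_time}s")
```

When trials are started in the same order, the asynchronous schedule never takes longer than the synchronous one, because no worker waits for the slowest trial of a batch.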

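In this notebook, we will look at asynchronous random search, where trials are executed in multiple Python processes on the same machine. Distributed job scheduling and execution is difficult to implement from scratch. We will use Syne Tune (Salinas et al., 2022), which provides a simple interface for asynchronous HPO. Syne Tune is designed to run with different execution back-ends, and the interested reader is invited to study its simple APIs to learn more about distributed HPO.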

import logging
from d2l import torch as d2l

logging.basicConfig(level=logging.INFO)
from syne_tune import StoppingCriterion, Tuner
from syne_tune.backend.python_backend import PythonBackend
from syne_tune.config_space import loguniform, randint
from syne_tune.experiments import load_experiment
from syne_tune.optimizer.baselines import RandomSearch

INFO:root:SageMakerBackend is not imported since dependencies are missing. You can install them with
   pip install 'syne-tune[extra]'
AWS dependencies are not imported since dependencies are missing. You can install them with
   pip install 'syne-tune[aws]'
or (for everything)
   pip install 'syne-tune[extra]'
AWS dependencies are not imported since dependencies are missing. You can install them with
   pip install 'syne-tune[aws]'
or (for everything)
   pip install 'syne-tune[extra]'
INFO:root:Ray Tune schedulers and searchers are not imported since dependencies are missing. You can install them with
   pip install 'syne-tune[raytune]'
or (for everything)
   pip install 'syne-tune[extra]'

19.3.1. Objective Function

First, we have to define a new objective function that reports its performance back to Syne Tune via the `report` callback.

def hpo_objective_lenet_synetune(learning_rate, batch_size, max_epochs):
    from syne_tune import Reporter
    from d2l import torch as d2l

    model = d2l.LeNet(lr=learning_rate, num_classes=10)
    trainer = d2l.HPOTrainer(max_epochs=1, num_gpus=1)
    data = d2l.FashionMNIST(batch_size=batch_size)
    model.apply_init([next(iter(data.get_dataloader(True)))[0]], d2l.init_cnn)
    report = Reporter()
    for epoch in range(1, max_epochs + 1):
        if epoch == 1:
            # Initialize the state of Trainer
            trainer.fit(model=model, data=data)
        else:
            trainer.fit_epoch()
        validation_error = trainer.validation_error().cpu().detach().numpy()
        report(epoch=epoch, validation_error=float(validation_error))

Note that the `PythonBackend` of Syne Tune requires dependencies to be imported inside the function definition.
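The per-epoch reporting pattern can be tried out without Syne Tune or a GPU. The following is a minimal sketch: `ListReporter` and `toy_objective` are hypothetical stand-ins introduced here for illustration, and the error curve is synthetic rather than a real training result.

```python
class ListReporter:
    """Hypothetical stand-in for a reporting callback: it only stores results."""

    def __init__(self):
        self.results = []

    def __call__(self, **metrics):
        self.results.append(metrics)


def toy_objective(learning_rate, max_epochs, report):
    # Synthetic validation error that shrinks every epoch; the decay
    # depends on the learning rate. Made up purely for illustration.
    validation_error = 1.0
    for epoch in range(1, max_epochs + 1):
        validation_error *= 1.0 - min(learning_rate, 0.9)
        # Report after every epoch so that a tuner could monitor progress
        report(epoch=epoch, validation_error=float(validation_error))


report = ListReporter()
toy_objective(learning_rate=0.5, max_epochs=3, report=report)
print(report.results)
```

The keyword names passed to the callback (`epoch`, `validation_error`) are what a tuner would later refer to when selecting the metric to optimize.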

19.3.2. Asynchronous Scheduler

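First, we define the number of workers that evaluate trials concurrently. We also need to specify how long we want to run random search by setting an upper limit on the total wall-clock time.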

n_workers = 2  # Needs to be <= the number of available GPUs
max_wallclock_time = 12 * 60  # 12 minutes

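Next, we state which metric we want to optimize and whether we want to minimize or maximize it. Namely, `metric` needs to match the argument name passed to the `report` callback.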

mode = "min"
metric = "validation_error"

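We use the configuration space from our previous example. In Syne Tune, this dictionary can also be used to pass constant attributes to the training script. We use this feature to pass `max_epochs`. Moreover, we specify the first configuration to be evaluated in `initial_config`.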

config_space = {
    "learning_rate": loguniform(1e-2, 1),
    "batch_size": randint(32, 256),
    "max_epochs": 10,
}
initial_config = {
    "learning_rate": 0.1,
    "batch_size": 128,
}
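To get a feeling for what drawing from such a configuration space means, here is a minimal sketch in plain Python. It is not Syne Tune's implementation: the helpers `sample_loguniform`, `sample_randint`, and `sample_config` are hypothetical, and the sketch assumes that both bounds of the integer range are inclusive.

```python
import math
import random


def sample_loguniform(lower, upper, rng):
    """Draw a value whose logarithm is uniform on [log(lower), log(upper)]."""
    return math.exp(rng.uniform(math.log(lower), math.log(upper)))


def sample_randint(lower, upper, rng):
    """Draw an integer uniformly from lower to upper (assumed inclusive)."""
    return rng.randint(lower, upper)


def sample_config(rng):
    return {
        "learning_rate": sample_loguniform(1e-2, 1, rng),
        "batch_size": sample_randint(32, 256, rng),
        "max_epochs": 10,  # constant attribute, passed through unchanged
    }


rng = random.Random(0)
for _ in range(3):
    print(sample_config(rng))
```

Sampling the learning rate on a log scale gives each order of magnitude between 0.01 and 1 the same probability, which is usually preferable for scale-like hyperparameters.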

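Next, we need to specify the back-end for job execution. Here we only consider distribution on a local machine, where parallel jobs are executed as sub-processes. For large-scale HPO, however, we could also run this on a cluster or in a cloud environment, where each trial consumes a full instance.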

trial_backend = PythonBackend(
    tune_function=hpo_objective_lenet_synetune,
    config_space=config_space,
)

We can now create the scheduler for asynchronous random search, which behaves similarly to our `BasicScheduler` from Section 19.2.

scheduler = RandomSearch(
    config_space,
    metric=metric,
    mode=mode,
    points_to_evaluate=[initial_config],
)

INFO:syne_tune.optimizer.schedulers.fifo:max_resource_level = 10, as inferred from config_space
INFO:syne_tune.optimizer.schedulers.fifo:Master random_seed = 4033665588

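Syne Tune also features a `Tuner`, where the main experiment loop and bookkeeping are centralized, and interactions between scheduler and back-end are mediated.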

stop_criterion = StoppingCriterion(max_wallclock_time=max_wallclock_time)

tuner = Tuner(
    trial_backend=trial_backend,
    scheduler=scheduler,
    stop_criterion=stop_criterion,
    n_workers=n_workers,
    print_update_interval=int(max_wallclock_time * 0.6),
)
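The general pattern of such an experiment loop can be sketched with the standard library alone. This is not how Syne Tune's `Tuner` is implemented: the sketch uses threads instead of sub-processes, stops after a fixed number of trials instead of a wall-clock limit, and `toy_trial`, `suggest`, and `async_random_search` are hypothetical names with a synthetic objective.

```python
import math
import random
import time
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def toy_trial(config):
    """Hypothetical objective: pretend to train, then return an error."""
    time.sleep(0.01 * config["cost"])  # trials differ in runtime
    return (math.log10(config["learning_rate"]) + 1.0) ** 2


def suggest(rng):
    """Random search: each configuration is drawn independently."""
    return {
        "learning_rate": 10 ** rng.uniform(-2, 0),
        "cost": rng.randint(1, 5),
    }


def async_random_search(n_workers, max_trials, seed=0):
    rng = random.Random(seed)
    results = []
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        running = {}  # maps each running future to its configuration
        num_started = 0
        while running or num_started < max_trials:
            # Fill every idle worker right away
            while len(running) < n_workers and num_started < max_trials:
                config = suggest(rng)
                running[pool.submit(toy_trial, config)] = config
                num_started += 1
            # Block only until the first trial finishes, not the whole batch
            done, _ = wait(list(running), return_when=FIRST_COMPLETED)
            for future in done:
                results.append((running.pop(future), future.result()))
    return results


results = async_random_search(n_workers=2, max_trials=8)
best_config, best_error = min(results, key=lambda item: item[1])
print(f"best error {best_error:.4f} "
      f"at learning_rate={best_config['learning_rate']:.4f}")
```

Because `suggest` never looks at `results`, a new configuration can be handed out the moment a worker becomes free, which is what makes random search trivial to run asynchronously.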

Let us run our distributed HPO experiment. According to our stopping criterion, it will run for about 12 minutes.

tuner.run()

INFO:syne_tune.tuner:results of trials will be saved on /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691
INFO:root:Detected 8 GPUs
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.1 --batch_size 128 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/0/checkpoints
INFO:syne_tune.tuner:(trial 0) - scheduled config {'learning_rate': 0.1, 'batch_size': 128, 'max_epochs': 10}
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.31642002803324326 --batch_size 52 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/1/checkpoints
INFO:syne_tune.tuner:(trial 1) - scheduled config {'learning_rate': 0.31642002803324326, 'batch_size': 52, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 0 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.045813161553582046 --batch_size 71 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/2/checkpoints
INFO:syne_tune.tuner:(trial 2) - scheduled config {'learning_rate': 0.045813161553582046, 'batch_size': 71, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 1 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.11375402103945391 --batch_size 244 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/3/checkpoints
INFO:syne_tune.tuner:(trial 3) - scheduled config {'learning_rate': 0.11375402103945391, 'batch_size': 244, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 2 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.5211657199736571 --batch_size 47 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/4/checkpoints
INFO:syne_tune.tuner:(trial 4) - scheduled config {'learning_rate': 0.5211657199736571, 'batch_size': 47, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 3 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.05259930532982774 --batch_size 181 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/5/checkpoints
INFO:syne_tune.tuner:(trial 5) - scheduled config {'learning_rate': 0.05259930532982774, 'batch_size': 181, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 5 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.09086002421630578 --batch_size 48 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/6/checkpoints
INFO:syne_tune.tuner:(trial 6) - scheduled config {'learning_rate': 0.09086002421630578, 'batch_size': 48, 'max_epochs': 10}
INFO:syne_tune.tuner:tuning status (last metric is reported)
 trial_id     status  iter  learning_rate  batch_size  max_epochs  epoch  validation_error  worker-time
        0  Completed    10       0.100000         128          10     10          0.258109   108.366785
        1  Completed    10       0.316420          52          10     10          0.146223   179.660365
        2  Completed    10       0.045813          71          10     10          0.311251   143.567631
        3  Completed    10       0.113754         244          10     10          0.336094    90.168444
        4 InProgress     8       0.521166          47          10      8          0.150257   156.696658
        5  Completed    10       0.052599         181          10     10          0.399893    91.044401
        6 InProgress     2       0.090860          48          10      2          0.453050    36.693606
2 trials running, 5 finished (5 until the end), 436.55s wallclock-time

INFO:syne_tune.tuner:Trial trial_id 4 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.03542833641356924 --batch_size 94 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/7/checkpoints
INFO:syne_tune.tuner:(trial 7) - scheduled config {'learning_rate': 0.03542833641356924, 'batch_size': 94, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 6 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.5941192130206245 --batch_size 149 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/8/checkpoints
INFO:syne_tune.tuner:(trial 8) - scheduled config {'learning_rate': 0.5941192130206245, 'batch_size': 149, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 7 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.013696247675312455 --batch_size 135 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/9/checkpoints
INFO:syne_tune.tuner:(trial 9) - scheduled config {'learning_rate': 0.013696247675312455, 'batch_size': 135, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 8 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.11837221527625114 --batch_size 75 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/10/checkpoints
INFO:syne_tune.tuner:(trial 10) - scheduled config {'learning_rate': 0.11837221527625114, 'batch_size': 75, 'max_epochs': 10}
INFO:syne_tune.tuner:Trial trial_id 9 completed.
INFO:root:running subprocess with command: /home/d2l-worker/miniconda3/envs/d2l-en-release-0/bin/python /home/d2l-worker/miniconda3/envs/d2l-en-release-0/lib/python3.9/site-packages/syne_tune/backend/python_backend/python_entrypoint.py --learning_rate 0.18877290342981604 --batch_size 187 --max_epochs 10 --tune_function_root /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/tune_function --tune_function_hash 53504c42ecb95363b73ac1f849a8a245 --st_checkpoint_dir /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691/11/checkpoints
INFO:syne_tune.tuner:(trial 11) - scheduled config {'learning_rate': 0.18877290342981604, 'batch_size': 187, 'max_epochs': 10}
INFO:syne_tune.stopping_criterion:reaching max wallclock time (720), stopping there.
INFO:syne_tune.tuner:Stopping trials that may still be running.
INFO:syne_tune.tuner:Tuning finished, results of trials can be found on /home/d2l-worker/syne-tune/python-entrypoint-2023-02-10-04-56-21-691
--------------------
Resource summary (last result is reported):
 trial_id     status  iter  learning_rate  batch_size  max_epochs  epoch  validation_error  worker-time
        0  Completed    10       0.100000         128          10   10.0          0.258109   108.366785
        1  Completed    10       0.316420          52          10   10.0          0.146223   179.660365
        2  Completed    10       0.045813          71          10   10.0          0.311251   143.567631
        3  Completed    10       0.113754         244          10   10.0          0.336094    90.168444
        4  Completed    10       0.521166          47          10   10.0          0.146092   190.111242
        5  Completed    10       0.052599         181          10   10.0          0.399893    91.044401
        6  Completed    10       0.090860          48          10   10.0          0.197369   172.148435
        7  Completed    10       0.035428          94          10   10.0          0.414369   112.588123
        8  Completed    10       0.594119         149          10   10.0          0.177609    99.182505
        9  Completed    10       0.013696         135          10   10.0          0.901235   107.753385
       10 InProgress     2       0.118372          75          10    2.0          0.465970    32.484881
       11 InProgress     0       0.188773         187          10      -                 -            -
2 trials running, 10 finished (10 until the end), 722.92s wallclock-time

validation_error: best 0.1377706527709961 for trial-id 4
--------------------

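The logs of all evaluated hyperparameter configurations are stored for further analysis. At any time during the tuning job, we can easily retrieve the results obtained so far and plot the incumbent trajectory.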

d2l.set_figsize()
tuning_experiment = load_experiment(tuner.name)
tuning_experiment.plot()

WARNING:matplotlib.legend:No artists with labels found to put in legend. Note that artists whose label start with an underscore are ignored when legend() is called with no argument.
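The incumbent is the best result found so far, so its trajectory is a running minimum of the observed errors. The sketch below illustrates this using the final validation errors of the ten completed trials from the resource summary. It is a simplification: it orders trials by trial id rather than by completion time and ignores intermediate epochs, so it will not match the curve drawn by `plot()` exactly.

```python
from itertools import accumulate

# Final validation errors of the completed trials (trial ids 0 to 9),
# copied from the resource summary printed by the tuner.
final_errors = [
    0.258109, 0.146223, 0.311251, 0.336094, 0.146092,
    0.399893, 0.197369, 0.414369, 0.177609, 0.901235,
]

# The incumbent after trial i is the lowest error among trials 0..i
incumbent = list(accumulate(final_errors, min))
print(incumbent)
```

The trajectory never increases: it only drops when a trial beats the best result so far, here at trial 1 and again, marginally, at trial 4.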

19.3.3. Visualize the Asynchronous Optimization Process

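Below we visualize how the learning curves of the trials (each color in the plot represents one trial) evolve during the asynchronous optimization process. At any point in time, as many trials run concurrently as we have workers. Once a trial finishes, we immediately start the next one without waiting for the other trials to finish. With asynchronous scheduling, the idle time of workers is reduced to a minimum.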

d2l.set_figsize([6, 2.5])
results = tuning_experiment.results

for trial_id in results.trial_id.unique():
    df = results[results["trial_id"] == trial_id]
    d2l.plt.plot(
        df["st_tuner_time"],
        df["validation_error"],
        marker="o"
    )

d2l.plt.xlabel("wall-clock time")
d2l.plt.ylabel("objective function")

Text(0, 0.5, 'objective function')

19.3.4. Summary

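We can substantially reduce the waiting time for random search by distributing trials across parallel resources. In general, we distinguish between synchronous and asynchronous scheduling. Synchronous scheduling means that we sample a new batch of hyperparameter configurations once the previous batch has finished. If we have stragglers, that is, trials that take more time to finish than others, our workers need to wait at synchronization points. Asynchronous scheduling evaluates a new hyperparameter configuration as soon as resources become available, and thus keeps all workers busy at any point in time. While random search is easy to distribute asynchronously and requires no change to the actual algorithm, other methods require some additional modifications.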

19.3.5. Exercises

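1. Consider the `DropoutMLP` model implemented in Section 5.6 and used in Exercise 1 of Section 19.2.
    1. Implement an objective function `hpo_objective_dropoutmlp_synetune` to be used with Syne Tune. Make sure that your function reports the validation error after every epoch.
    2. Using the setup of Exercise 1 in Section 19.2, compare random search to Bayesian optimization. If you use SageMaker, feel free to use Syne Tune's benchmarking facilities to run experiments in parallel. Hint: Bayesian optimization is provided as `syne_tune.optimizer.baselines.BayesianOptimization`.
    3. For this exercise, you need to run on an instance with at least 4 CPU cores. For one of the methods used above (random search, Bayesian optimization), run experiments with `n_workers=1`, `n_workers=2`, and `n_workers=4`, and compare the results (incumbent trajectories). At least for random search, you should observe linear scaling with respect to the number of workers. Hint: For robust results, you may have to average over several repetitions of each experiment.
2. Advanced. The goal of this exercise is to implement a new scheduler in Syne Tune.
    1. Create a virtual environment containing both the d2lbook and syne-tune sources.
    2. Implement the `LocalSearcher` from Exercise 2 in Section 19.2 as a new searcher in Syne Tune. Hint: Read this tutorial. Alternatively, you may follow this example.
    3. Compare your new `LocalSearcher` with `RandomSearch` on the `DropoutMLP` benchmark.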
