Author: Siddhartha Pramanik. Source: DeepHub IMBA

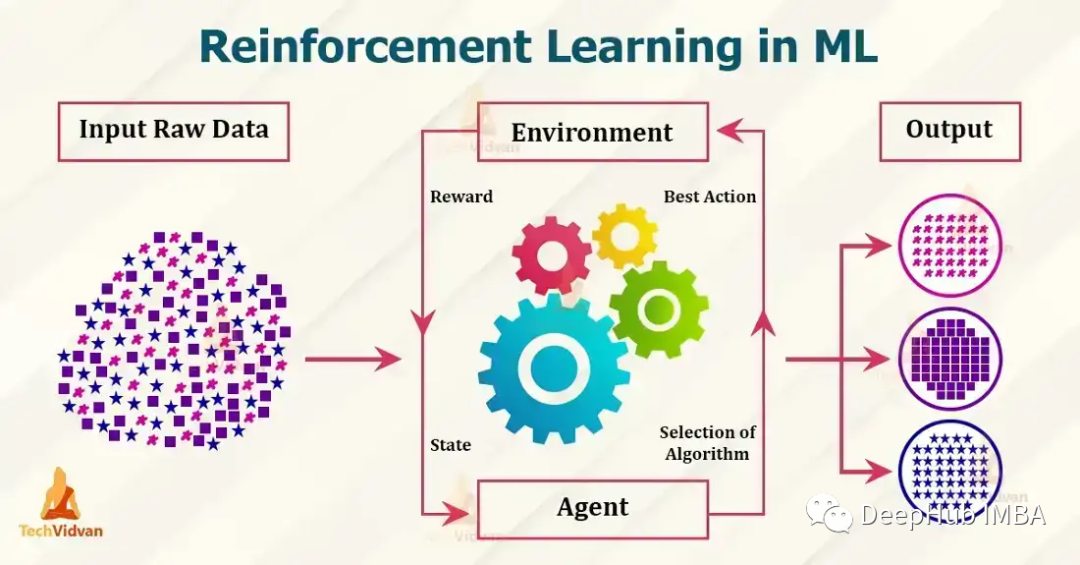

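Popular reinforcement learning algorithms today include Q-learning, SARSA, DDPG, A2C, PPO, DQN, and TRPO. They have been applied to games, robotics, decision making, and many other areas, and they continue to be developed and improved. This article gives a brief introduction to each of them.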

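1. Q-learning

Q-learning is a model-free, off-policy reinforcement learning algorithm. It estimates the optimal action-value function with the Bellman equation, iteratively updating the estimate for each state-action pair. Q-learning is known for its simplicity. In its tabular form it needs discrete state and action spaces; large or continuous state spaces call for function approximation, as in DQN below. Here is a simple Python example of Q-learning: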

    import numpy as np

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)

    # Define the Q-table and the learning rate
    Q = np.zeros((state_space_size, action_space_size))
    alpha = 0.1

    # Define the exploration rate and discount factor
    epsilon = 0.1
    gamma = 0.99

    for episode in range(num_episodes):
        current_state = initial_state
        done = False  # reset the termination flag at the start of every episode
        while not done:
            # Choose an action using an epsilon-greedy policy
            if np.random.uniform(0, 1) < epsilon:
                action = np.random.randint(0, action_space_size)
            else:
                action = np.argmax(Q[current_state])

            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Update the Q-table using the Bellman equation
            # (a terminal next state contributes no future value)
            target = reward + gamma * np.max(Q[next_state]) * (not done)
            Q[current_state, action] += alpha * (target - Q[current_state, action])

            current_state = next_state

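In the example above, state_space_size and action_space_size are the numbers of states and actions in the environment, num_episodes is the number of episodes to run, and initial_state is the environment's starting state. take_action(current_state, action) is a function that takes the current state and an action and returns the next state, the reward, and a Boolean indicating whether the episode has finished.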

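Inside the while loop, an epsilon-greedy policy selects an action for the current state: with probability epsilon it picks a random action, and with probability 1-epsilon it picks the action with the highest Q-value for the current state. After taking the action, the agent observes the next state and reward, updates the Q-table with the Bellman equation, and sets the current state to the next state. This is only a simple example of Q-learning; it does not address Q-table initialization or the specifics of the problem being solved.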
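To make the loop above concrete, here is a minimal, self-contained sketch that fills in the placeholders with a toy environment. The five-state corridor, its take_action function, and the train_q_learning helper are hypothetical names invented for this illustration; they are not part of the original example. It uses plain Python lists instead of NumPy so that it runs without dependencies.

```python
import random

def take_action(state, action):
    """Hypothetical 5-state corridor: action 1 moves right, action 0 moves left.
    Reaching state 4 gives reward 1 and ends the episode."""
    next_state = min(state + 1, 4) if action == 1 else max(state - 1, 0)
    done = next_state == 4
    return next_state, (1.0 if done else 0.0), done

def train_q_learning(num_episodes=500, alpha=0.1, gamma=0.99, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(5)]  # Q[state][action]
    for _ in range(num_episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy selection; ties are broken randomly
            if rng.random() < epsilon or Q[state][0] == Q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if Q[state][0] > Q[state][1] else 1
            next_state, reward, done = take_action(state, action)
            # Bellman update; a terminal state contributes no future value
            best_next = 0.0 if done else max(Q[next_state])
            Q[state][action] += alpha * (reward + gamma * best_next - Q[state][action])
            state = next_state
    return Q

Q = train_q_learning()
greedy_policy = [0 if q[0] > q[1] else 1 for q in Q[:4]]
print(greedy_policy)  # [1, 1, 1, 1]
```

After training, the greedy policy moves right in every non-terminal state, which is the shortest path to the goal in this toy setup.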

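2. SARSA

SARSA is a model-free, on-policy reinforcement learning algorithm. It also uses the Bellman equation to estimate the action-value function, but its update target uses the Q-value of the next action actually selected by the current policy rather than the maximizing action used in Q-learning. SARSA is known for its ability to handle problems with stochastic dynamics.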

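    import numpy as np

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)

    def epsilon_greedy_policy(epsilon, Q, state):
        # With probability epsilon explore, otherwise pick the highest-valued action
        if np.random.uniform(0, 1) < epsilon:
            return np.random.randint(0, Q.shape[1])
        return np.argmax(Q[state])

    # Define the Q-table and the learning rate
    Q = np.zeros((state_space_size, action_space_size))
    alpha = 0.1

    # Define the exploration rate and discount factor
    epsilon = 0.1
    gamma = 0.99

    for episode in range(num_episodes):
        current_state = initial_state
        done = False  # reset the termination flag at the start of every episode
        action = epsilon_greedy_policy(epsilon, Q, current_state)
        while not done:
            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Choose next action using epsilon-greedy policy
            next_action = epsilon_greedy_policy(epsilon, Q, next_state)

            # Update the Q-table using the Bellman equation
            # (a terminal next state contributes no future value)
            target = reward + gamma * Q[next_state, next_action] * (not done)
            Q[current_state, action] += alpha * (target - Q[current_state, action])

            current_state = next_state
            action = next_action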

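state_space_size and action_space_size are the numbers of states and actions in the environment, num_episodes is the number of episodes to run SARSA for, and initial_state is the environment's initial state. take_action(current_state, action) is a function that takes the current state and an action as input and returns the next state, the reward, and a Boolean indicating whether the episode has finished.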

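The epsilon-greedy policy, defined in the separate function epsilon_greedy_policy(epsilon, Q, current_state), selects an action for a given state: with probability epsilon it picks a random action, and with probability 1-epsilon it picks the action with the highest Q-value. Up to this point the procedure matches Q-learning. The difference is that after taking an action and observing the next state and reward, SARSA selects the next action with the same epsilon-greedy policy (not a purely greedy one) and uses that action's Q-value in the Bellman update of the Q-table.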
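The difference between the two update rules is easiest to see on a single transition. The sketch below computes both targets for the same made-up numbers; the function names and the Q-values are hypothetical and chosen only for illustration.

```python
def q_learning_target(reward, next_q_values, gamma, done):
    """Off-policy target: bootstrap from the best action in the next state."""
    return reward + (0.0 if done else gamma * max(next_q_values))

def sarsa_target(reward, next_q_values, next_action, gamma, done):
    """On-policy target: bootstrap from the action the policy actually chose."""
    return reward + (0.0 if done else gamma * next_q_values[next_action])

next_q_values = [0.5, 2.0, 1.0]  # hypothetical Q-values of the next state
next_action = 2                  # hypothetical exploratory pick by epsilon-greedy

y_q = q_learning_target(1.0, next_q_values, gamma=0.99, done=False)
y_sarsa = sarsa_target(1.0, next_q_values, next_action, gamma=0.99, done=False)
print(y_q, y_sarsa)  # roughly 2.98 and 1.99
```

Whenever the behavior policy explores, the SARSA target can be lower than the Q-learning target, so SARSA's estimates reflect the cost of its own exploration.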

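3. DDPG

DDPG (Deep Deterministic Policy Gradient) is a model-free, off-policy algorithm for continuous action spaces. It is an actor-critic algorithm in which the actor network selects actions and the critic network evaluates them. DDPG is particularly useful for robot control and other continuous-control tasks.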

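    import random

    import numpy as np
    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import layers

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)
    gamma, tau, batch_size = 0.99, 0.005, 64

    # Define the actor model: state -> action in [-1, 1]
    def build_actor():
        return keras.Sequential([
            keras.Input(shape=(state_space_size,)),
            layers.Dense(32, activation='relu'),
            layers.Dense(32, activation='relu'),
            layers.Dense(action_space_size, activation='tanh'),
        ])

    # Define the critic model: (state, action) -> Q-value
    def build_critic():
        state_in = keras.Input(shape=(state_space_size,))
        action_in = keras.Input(shape=(action_space_size,))
        x = layers.Concatenate()([state_in, action_in])
        x = layers.Dense(32, activation='relu')(x)
        x = layers.Dense(32, activation='relu')(x)
        return keras.Model([state_in, action_in], layers.Dense(1, activation='linear')(x))

    actor, critic = build_actor(), build_critic()
    # Target networks start as copies and slowly track the learned networks
    target_actor, target_critic = build_actor(), build_critic()
    target_actor.set_weights(actor.get_weights())
    target_critic.set_weights(critic.get_weights())
    actor_opt = keras.optimizers.Adam(learning_rate=0.001)
    critic_opt = keras.optimizers.Adam(learning_rate=0.001)

    # Define the replay buffer
    replay_buffer = []

    for episode in range(num_episodes):
        current_state = initial_state
        done = False
        while not done:
            # Select an action using the actor model and add exploration noise
            # (Gaussian noise here for brevity; an Ornstein-Uhlenbeck process with
            # theta=0.15, mu=0, sigma=0.2 is a common alternative)
            action = actor(np.array([current_state], dtype=np.float32)).numpy()[0]
            action = np.clip(action + np.random.normal(0.0, 0.2, size=action_space_size), -1, 1)

            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Add the experience to the replay buffer
            replay_buffer.append((current_state, action, reward, next_state, done))
            current_state = next_state
            if len(replay_buffer) < batch_size:
                continue  # not enough experience to sample a batch yet

            # Sample a batch of experiences from the replay buffer
            batch = random.sample(replay_buffer, batch_size)
            states, actions, rewards, next_states, dones = (
                np.array(col, dtype=np.float32) for col in zip(*batch))
            rewards, dones = rewards[:, None], dones[:, None]

            # Update the critic model toward the bootstrapped target
            target_q = rewards + gamma * (1.0 - dones) * target_critic(
                [next_states, target_actor(next_states)])
            with tf.GradientTape() as tape:
                critic_loss = tf.reduce_mean(tf.square(target_q - critic([states, actions])))
            grads = tape.gradient(critic_loss, critic.trainable_variables)
            critic_opt.apply_gradients(zip(grads, critic.trainable_variables))

            # Update the actor model by ascending the critic's estimate of Q(s, actor(s))
            with tf.GradientTape() as tape:
                actor_loss = -tf.reduce_mean(critic([states, actor(states)]))
            grads = tape.gradient(actor_loss, actor.trainable_variables)
            actor_opt.apply_gradients(zip(grads, actor.trainable_variables))

            # Softly update the target networks
            for target, source in ((target_actor, actor), (target_critic, critic)):
                target.set_weights([tau * w + (1 - tau) * tw
                                    for w, tw in zip(source.get_weights(), target.get_weights())])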

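In this example, state_space_size and action_space_size are the dimensions of the environment's state and action vectors, num_episodes is the number of episodes, and initial_state is the environment's initial state. take_action(current_state, action) is a function that takes the current state and an action as input and returns the next state, the reward, and a Boolean indicating whether the episode has finished.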
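Two small building blocks often accompany DDPG: temporally correlated exploration noise from an Ornstein-Uhlenbeck process, and slowly moving target networks updated by Polyak averaging. The sketch below shows both in plain Python. The class and function names are hypothetical, the time step dt and the tau values are assumptions, and the weights are simple lists standing in for network parameters.

```python
import random

class OUNoise:
    """Ornstein-Uhlenbeck process: correlated noise that drifts back toward mu."""
    def __init__(self, size, theta=0.15, mu=0.0, sigma=0.2, dt=1e-2, seed=0):
        self.theta, self.mu, self.sigma, self.dt = theta, mu, sigma, dt
        self.rng = random.Random(seed)
        self.x = [mu] * size

    def sample(self):
        # Euler-Maruyama step: x += theta * (mu - x) * dt + sigma * sqrt(dt) * N(0, 1)
        self.x = [x + self.theta * (self.mu - x) * self.dt
                  + self.sigma * self.dt ** 0.5 * self.rng.gauss(0.0, 1.0)
                  for x in self.x]
        return list(self.x)

def soft_update(target_weights, source_weights, tau=0.005):
    """Polyak averaging: move each target weight a fraction tau toward the source."""
    return [tau * w + (1.0 - tau) * tw for w, tw in zip(source_weights, target_weights)]

noise = OUNoise(size=2)
samples = [noise.sample() for _ in range(3)]  # three consecutive noise vectors

# With tau=0.1 the target moves 10% of the way from [0, 1] toward [1, 1]
new_target = soft_update([0.0, 1.0], [1.0, 1.0], tau=0.1)
print(new_target)
```

A small tau keeps the bootstrapped critic target nearly stationary between updates, which is the usual motivation for target networks.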

4. A2C

A2C (Advantage Actor-Critic) is an on-policy actor-critic algorithm that uses the advantage function to update the policy. It is simple to implement and can handle both discrete and continuous action spaces.

    import numpy as np
    from tensorflow.keras.layers import Dense, Input
    from tensorflow.keras.models import Model
    from tensorflow.keras.optimizers import Adam
    from tensorflow.keras.utils import to_categorical

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)
    gamma = 0.99

    # Define the actor and critic models
    state_input = Input(shape=(state_space_size,))
    actor = Dense(32, activation='relu')(state_input)
    actor = Dense(32, activation='relu')(actor)
    actor = Dense(action_space_size, activation='softmax')(actor)
    actor_model = Model(inputs=state_input, outputs=actor)
    actor_model.compile(loss='categorical_crossentropy', optimizer=Adam(learning_rate=0.001))

    state_input = Input(shape=(state_space_size,))
    critic = Dense(32, activation='relu')(state_input)
    critic = Dense(32, activation='relu')(critic)
    critic = Dense(1, activation='linear')(critic)
    critic_model = Model(inputs=state_input, outputs=critic)
    critic_model.compile(loss='mse', optimizer=Adam(learning_rate=0.001))

    for episode in range(num_episodes):
        current_state = initial_state
        done = False
        while not done:
            state_batch = np.array([current_state])

            # Select an action by sampling from the actor's output distribution
            action_probs = actor_model.predict(state_batch, verbose=0)[0]
            action = np.random.choice(action_space_size, p=action_probs)

            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Calculate the TD target and the advantage
            # (a terminal next state contributes no future value)
            next_value = 0.0 if done else critic_model.predict(np.array([next_state]), verbose=0)[0][0]
            target_value = reward + gamma * next_value
            advantage = target_value - critic_model.predict(state_batch, verbose=0)[0][0]

            # Update the actor model: cross-entropy against the advantage-scaled
            # one-hot action equals -advantage * log pi(action | state)
            action_one_hot = to_categorical(action, action_space_size)
            actor_model.train_on_batch(state_batch, np.array([advantage * action_one_hot]))

            # Update the critic model
            critic_model.train_on_batch(state_batch, np.array([[target_value]]))

            current_state = next_state

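In this example, the actor model is a neural network with two hidden layers of 32 neurons each, using ReLU activations, and an output layer with a softmax activation. The critic model is also a neural network with two hidden layers of 32 neurons each, using ReLU activations, and an output layer with a linear activation. The actor is trained with a categorical cross-entropy loss and the critic with a mean squared error loss. Actions are sampled from the probability distribution predicted by the actor, and this sampling is what provides exploration; no separate noise is added.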
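It may not be obvious why a cross-entropy loss trains the actor. The sketch below, using made-up numbers, computes a one-step advantage and checks that the cross-entropy against an advantage-scaled one-hot vector equals the usual policy-gradient loss, -advantage * log pi(action | state). All values and helper names here are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

gamma = 0.99
reward, value_s, value_next, done = 1.0, 0.5, 0.8, False  # hypothetical critic outputs

# One-step TD target and advantage; a terminal next state contributes no value
td_target = reward + (0.0 if done else gamma * value_next)
advantage = td_target - value_s

probs = softmax([0.2, 1.0, -0.5])  # hypothetical actor output for 3 actions
action = 1

# Cross-entropy against the advantage-scaled one-hot vector
scaled_one_hot = [advantage if a == action else 0.0 for a in range(3)]
cross_entropy = -sum(t * math.log(p) for t, p in zip(scaled_one_hot, probs))

# The policy-gradient loss for the same sample
policy_loss = -advantage * math.log(probs[action])
print(advantage, cross_entropy, policy_loss)
```

A positive advantage therefore raises the probability of the sampled action and a negative one lowers it.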

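5. PPO

PPO (Proximal Policy Optimization) is an on-policy algorithm that keeps each policy update close to the previous policy, approximating a trust-region method with a clipped surrogate objective. It is particularly useful in environments with high-dimensional observations and continuous action spaces. PPO is known for its stability and, compared with plain policy-gradient methods, its good sample efficiency.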

    import numpy as np
    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import layers

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)
    gamma, clip_epsilon, update_epochs = 0.99, 0.2, 4

    # Define the policy model
    state_input = keras.Input(shape=(state_space_size,))
    hidden = layers.Dense(32, activation='relu')(state_input)
    hidden = layers.Dense(32, activation='relu')(hidden)
    policy = layers.Dense(action_space_size, activation='softmax')(hidden)
    policy_model = keras.Model(inputs=state_input, outputs=policy)

    # Define the value model (it shares the hidden layers with the policy)
    value_model = keras.Model(inputs=state_input, outputs=layers.Dense(1, activation='linear')(hidden))
    value_model.compile(loss='mse', optimizer=keras.optimizers.Adam(learning_rate=0.001))

    # Define the optimizer
    optimizer = keras.optimizers.Adam(learning_rate=0.001)

    for episode in range(num_episodes):
        current_state = initial_state
        done = False
        while not done:
            state_batch = np.array([current_state], dtype=np.float32)

            # Select an action using the policy model
            action_probs = policy_model(state_batch).numpy()[0]
            action = int(np.random.choice(action_space_size, p=action_probs))

            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Calculate the advantage (a terminal next state contributes no value)
            next_value = 0.0 if done else value_model(
                np.array([next_state], dtype=np.float32)).numpy()[0, 0]
            target_value = reward + gamma * next_value
            advantage = target_value - value_model(state_batch).numpy()[0, 0]

            # Probability of the action under the policy that collected the data
            old_policy_prob = action_probs[action]

            # Take a few gradient steps on the clipped surrogate objective.
            # (Practical PPO does this over batches of whole trajectories.)
            for _ in range(update_epochs):
                with tf.GradientTape() as tape:
                    new_policy_prob = policy_model(state_batch)[0, action]
                    ratio = new_policy_prob / old_policy_prob
                    clipped = tf.clip_by_value(ratio, 1 - clip_epsilon, 1 + clip_epsilon)
                    surrogate_loss = -tf.minimum(ratio * advantage, clipped * advantage)
                grads = tape.gradient(surrogate_loss, policy_model.trainable_variables)
                optimizer.apply_gradients(zip(grads, policy_model.trainable_variables))

            # Update the value model
            value_model.train_on_batch(state_batch, np.array([[target_value]], dtype=np.float32))

            current_state = next_state
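The core of PPO is the clipped surrogate that the listing above computes from the probability ratio and the advantage. The sketch below isolates that formula for a single sample; the function name and the example numbers are hypothetical, and clip_epsilon=0.2 is a commonly used value rather than something fixed by the article.

```python
def clipped_surrogate(ratio, advantage, clip_epsilon=0.2):
    """PPO-Clip objective for one sample: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = max(1.0 - clip_epsilon, min(ratio, 1.0 + clip_epsilon))
    return min(ratio * advantage, clipped_ratio * advantage)

# Positive advantage, ratio above the range: the gain is capped at 1.2 * 2.0
capped_gain = clipped_surrogate(1.5, 2.0)

# Negative advantage, ratio below the range: the pessimistic value 0.8 * -1.0 is used
pessimistic = clipped_surrogate(0.5, -1.0)

# Ratio inside the range: the objective is the plain ratio * advantage
unclipped = clipped_surrogate(1.1, 2.0)

print(capped_gain, pessimistic, unclipped)
```

Because the objective stops improving once the ratio leaves the interval in the favorable direction, the optimizer has no incentive to move the new policy far from the one that collected the data.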

6. DQN

DQN (Deep Q-Network) is a model-free, off-policy algorithm that uses a neural network to approximate the Q-function. It is particularly well suited to Atari games and similar problems where the state space is high-dimensional.

    import random
    from collections import deque

    import numpy as np
    from tensorflow.keras.layers import Dense, Input
    from tensorflow.keras.models import Sequential
    from tensorflow.keras.optimizers import Adam

    # Placeholders supplied by your environment: state_space_size, action_space_size,
    # num_episodes, initial_state, take_action(state, action)
    epsilon, gamma = 0.1, 0.99
    batch_size, replay_buffer_size = 32, 10_000

    # Define the Q-network model
    model = Sequential()
    model.add(Input(shape=(state_space_size,)))
    model.add(Dense(32, activation='relu'))
    model.add(Dense(32, activation='relu'))
    model.add(Dense(action_space_size, activation='linear'))
    model.compile(loss='mse', optimizer=Adam(learning_rate=0.001))

    # Define the replay buffer
    replay_buffer = deque(maxlen=replay_buffer_size)

    for episode in range(num_episodes):
        current_state = initial_state
        done = False
        while not done:
            # Select an action using an epsilon-greedy policy
            if np.random.rand() < epsilon:
                action = np.random.randint(0, action_space_size)
            else:
                action = np.argmax(model.predict(np.array([current_state]), verbose=0)[0])

            # Take the action and observe the next state and reward
            next_state, reward, done = take_action(current_state, action)

            # Add the experience to the replay buffer
            replay_buffer.append((current_state, action, reward, next_state, done))
            current_state = next_state

            # Wait until the buffer holds enough experiences for one batch
            if len(replay_buffer) < batch_size:
                continue

            # Sample a batch of experiences from the replay buffer
            batch = random.sample(replay_buffer, batch_size)

            # Prepare the inputs and targets for the Q-network
            inputs = np.array([x[0] for x in batch])
            next_inputs = np.array([x[3] for x in batch])
            targets = model.predict(inputs, verbose=0)
            next_q = model.predict(next_inputs, verbose=0)
            # Distinct loop names keep the episode's own variables from being overwritten
            for i, (_, b_action, b_reward, _, b_done) in enumerate(batch):
                targets[i, b_action] = b_reward if b_done else b_reward + gamma * np.max(next_q[i])

            # Update the Q-network
            model.train_on_batch(inputs, targets)

In the code above, the Q-network has two hidden layers of 32 neurons each, using ReLU activations. The network is trained with a mean squared error loss and the Adam optimizer.
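The target construction is the step that most often goes wrong, so here it is isolated with plain Python lists standing in for network outputs. The function name dqn_targets and all numbers are hypothetical. Only the entry of the action that was actually taken is changed, so the mean squared error is zero for every other action.

```python
def dqn_targets(q_current, q_next, batch, gamma=0.99):
    """Copy q_current and replace only the taken action's entry with
    reward + gamma * max_a' Q(next_state, a'), or just reward at episode end."""
    targets = [row[:] for row in q_current]
    for i, (action, reward, done) in enumerate(batch):
        bootstrap = 0.0 if done else gamma * max(q_next[i])
        targets[i][action] = reward + bootstrap
    return targets

q_current = [[0.1, 0.4], [0.3, 0.2]]        # hypothetical Q(s, .) for two sampled states
q_next = [[1.0, 2.0], [5.0, 0.0]]           # hypothetical Q(s', .) for their successors
batch = [(0, 1.0, False), (1, -1.0, True)]  # (action, reward, done) per sample

targets = dqn_targets(q_current, q_next, batch)
print(targets)
```

In the second sample the episode ended, so the large value 5.0 in q_next is ignored and the target is just the reward.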

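7. TRPO

TRPO (Trust Region Policy Optimization) is a model-free, on-policy algorithm that updates the policy with a trust-region optimization method. It is particularly useful in environments with high-dimensional observations and continuous action spaces. TRPO is a complex algorithm that needs several steps and components, and it cannot be implemented in a few lines of code. So here we use an existing library that implements it, such as OpenAI Baselines, which provides ready-made implementations of various reinforcement learning algorithms, including TRPO. To use TRPO from OpenAI Baselines, install the package first: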

    pip install baselines

You can then use the trpo_mpi module of the baselines library to train a TRPO agent in your environment. Here is a simple example:

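    import gym
    from baselines.common.vec_env.dummy_vec_env import DummyVecEnv
    from baselines.trpo_mpi import trpo_mpi

    # Initialize the environment
    env = DummyVecEnv([lambda: gym.make("CartPole-v1")])

    # Train the TRPO model with the library's built-in MLP policy network.
    # The keyword names below are assumed from the learn() function in the
    # Baselines GitHub repository; older releases used a different signature,
    # so check the version you have installed.
    model = trpo_mpi.learn(network='mlp', env=env, total_timesteps=100_000)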

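We initialize the environment with the Gym library, then specify the policy network and call the learn() function of the trpo_mpi module to train the model. TRPO can also be implemented directly on top of deep learning frameworks such as TensorFlow and PyTorch, and other reinforcement learning libraries offer implementations as well. Below is a simplified sample written with TF 2.0: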

    import gym
    import tensorflow as tf

    # Define the policy network
    class PolicyNetwork(tf.keras.Model):
        def __init__(self, num_actions):
            super().__init__()
            self.dense1 = tf.keras.layers.Dense(16, activation='relu')
            self.dense2 = tf.keras.layers.Dense(16, activation='relu')
            self.dense3 = tf.keras.layers.Dense(num_actions)  # one logit per action

        def call(self, inputs):
            x = self.dense1(inputs)
            x = self.dense2(x)
            x = self.dense3(x)
            return x

    # Initialize the environment (Gym >= 0.26 API: reset() returns (observation, info))
    env = gym.make("CartPole-v1")
    observation, _ = env.reset()

    # Initialize the policy network
    policy_network = PolicyNetwork(env.action_space.n)

    # Define the optimizer
    optimizer = tf.optimizers.Adam()

    # Set the maximum number of iterations
    max_iters = 1000

    # Start the training loop
    for i in range(max_iters):
        obs_batch = tf.convert_to_tensor(observation[None, :], dtype=tf.float32)
        with tf.GradientTape() as tape:
            # Sample an action from the policy network
            logits = policy_network(obs_batch)
            action = int(tf.random.categorical(logits, 1)[0, 0])

            # Take a step in the environment
            observation, reward, terminated, truncated, _ = env.step(action)

            # Compute the loss: a plain policy-gradient surrogate, -log pi(a|s) * reward.
            # A full TRPO update would instead maximize the advantage-weighted
            # probability ratio subject to a KL-divergence constraint.
            loss = -tf.nn.log_softmax(logits)[0, action] * reward

        # Compute the gradients
        grads = tape.gradient(loss, policy_network.trainable_variables)

        # Perform the update step
        optimizer.apply_gradients(zip(grads, policy_network.trainable_variables))

        if terminated or truncated:
            # Reset the environment
            observation, _ = env.reset()

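In this example, we first define a policy network with TensorFlow's Keras API, then initialize the environment with the Gym library and create the policy network, and then define the optimizer and the loss used for training. In the training loop, we sample an action from the policy network, take a step in the environment, and use TensorFlow's GradientTape to compute the loss and gradients before applying the update with the optimizer. This is a deliberately simple example that only shows the skeleton of a policy-optimization training loop in TensorFlow 2.0. It does not implement the pieces that define TRPO, such as the KL-divergence trust-region constraint, the conjugate-gradient step, and the line search. TRPO is a very complex algorithm and this example does not cover all of its details, but it is a reasonable starting point for experimenting.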
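One of the pieces left out above is the trust-region check itself. The sketch below illustrates only that part: a backtracking line search that halves a proposed step until the KL divergence between the old and new categorical policies falls under a threshold. The function names, the max_kl value of 0.01, and the example direction are assumptions for illustration. A complete TRPO implementation would obtain the direction from a conjugate-gradient solve and would also require the surrogate objective to improve.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) for two categorical distributions given as probability lists."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

def backtracking_step(logits, direction, max_kl=0.01, max_backtracks=10):
    """Halve the step along `direction` until KL(old || new) <= max_kl."""
    old_probs = softmax(logits)
    step = 1.0
    for _ in range(max_backtracks):
        candidate = [z + step * d for z, d in zip(logits, direction)]
        if kl_divergence(old_probs, softmax(candidate)) <= max_kl:
            return candidate, step
        step *= 0.5
    return list(logits), 0.0  # no acceptable step found: keep the old policy

# Hypothetical two-action policy and update direction
new_logits, step = backtracking_step([0.0, 0.0], [1.0, -1.0])
print(new_logits, step)  # [0.125, -0.125] 0.125
```

Here the full step and the next two halvings all violate the KL limit, so the search settles on one eighth of the proposed step.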

Summary

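These are the seven commonly used reinforcement learning algorithms covered in this article. They are not mutually exclusive, and they are often combined with other techniques, such as value-function approximation, model-based methods, and ensemble methods, to achieve better results.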
