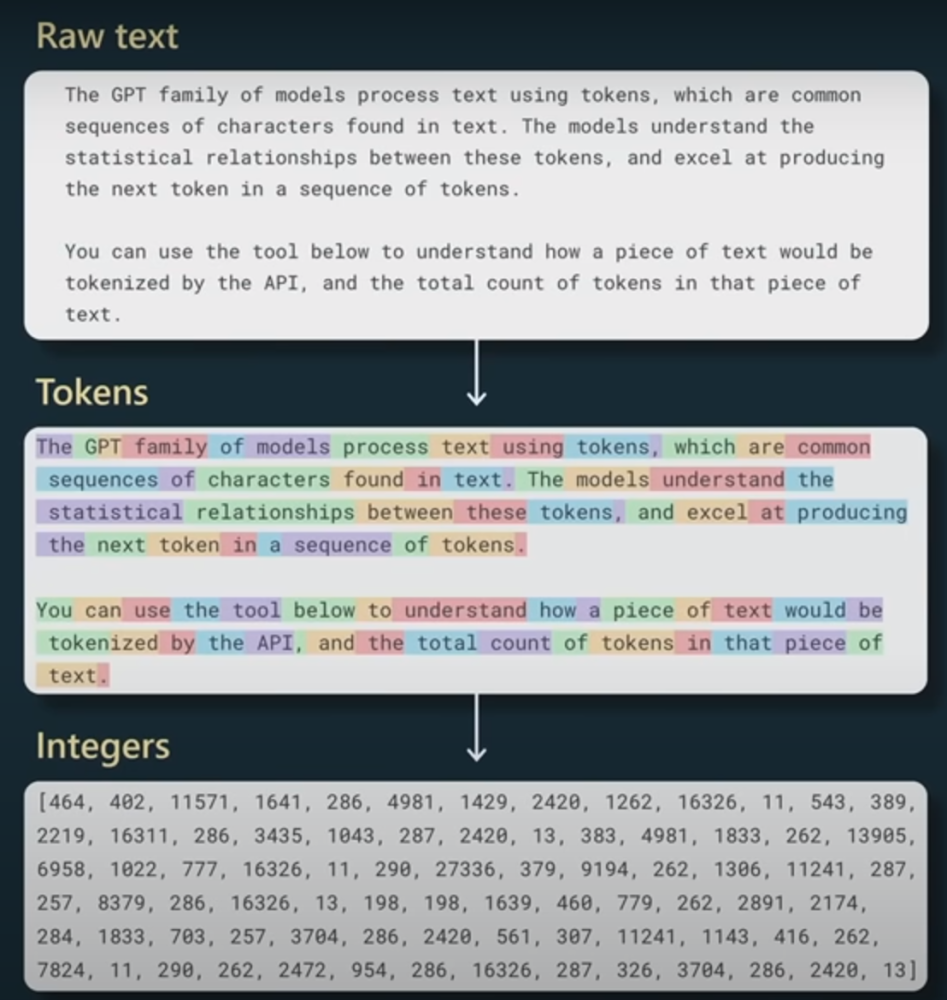

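Background

Rejection sampling is a Monte Carlo algorithm that uses a proxy distribution to draw samples from a complex ("hard-to-sample") distribution.

What is Monte Carlo? Any method or algorithm that uses random numbers to solve a problem is classed as a Monte Carlo method. In rejection sampling, that randomness is what implements the acceptance criterion of the algorithm. As for sampling, a core idea shared by almost all Monte Carlo methods is that if you cannot sample from your target distribution directly, you sample from another distribution instead (hence called the proposal distribution).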

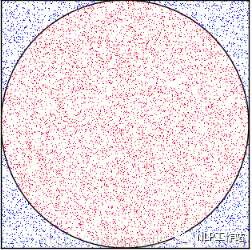

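The figure above shows a Monte Carlo "needle-throwing" experiment: points are thrown at random into a rectangle, and the fraction that land inside the circle is used to estimate the circle's area and the value of π.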
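As a rough illustration of the experiment in the figure, here is a minimal sketch that estimates π. It assumes the enclosing rectangle is the square [-1, 1] × [-1, 1] around a unit circle; the function name `estimate_pi` and the fixed seed are choices made for this example only.

```python
import random

def estimate_pi(num_points: int, seed: int = 0) -> float:
    """Estimate pi by throwing points uniformly into the square [-1, 1] x [-1, 1]."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(num_points):
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        if x * x + y * y <= 1.0:  # the point landed inside the unit circle
            inside += 1
    # circle area / square area = pi / 4, so pi is roughly 4 * (fraction inside)
    return 4.0 * inside / num_points

print(estimate_pi(200_000))  # a value near 3.14; the error shrinks as num_points grows
```

The estimate is noisy: its standard error falls only as one over the square root of the number of points, which is typical of Monte Carlo methods.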

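However, the sampling procedure must "follow the target distribution": the samples we obtain should appear in proportion to how likely they are. Put simply, regions of high probability should yield more samples.

This also means that when we use a proposal function, we must introduce a correction so that the sampling procedure still follows the target distribution. That correction takes the form of an acceptance criterion.

The main idea behind the method is this: to sample from a distribution p(x), we use an auxiliary distribution q(x) to help. The only constraint is that p(x) < Mq(x) for all x, for some M > 1. The method is mainly used when the form of p(x) makes direct sampling hard, but p(x) can still be evaluated at any point x.

The algorithm breaks down as follows: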

- Sample x from q(x).

- Sample y from U(0, Mq(x)) (a uniform distribution).

- If y < p(x), accept x as a sample from p(x); otherwise return to step 1.
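The three steps above can be sketched as follows. This is only an illustration under stated assumptions: the target p(x) = 6x(1 - x) on [0, 1], the uniform proposal q(x), and the constant M = 1.6 are picked for the example (the maximum of this p is 1.5, so p(x) < Mq(x) holds on [0, 1]); none of them come from the original text.

```python
import random

def target_pdf(x: float) -> float:
    """Example target p(x) = 6x(1 - x) on [0, 1] (a Beta(2, 2) density)."""
    return 6.0 * x * (1.0 - x) if 0.0 <= x <= 1.0 else 0.0

def proposal_pdf(x: float) -> float:
    """Example proposal q(x): the uniform density on [0, 1]."""
    return 1.0 if 0.0 <= x <= 1.0 else 0.0

def rejection_sample(num_samples: int, M: float = 1.6, seed: int = 0) -> list[float]:
    """Draw num_samples values distributed according to target_pdf."""
    rng = random.Random(seed)
    accepted = []
    while len(accepted) < num_samples:
        x = rng.random()                           # step 1: x ~ q(x)
        y = rng.uniform(0.0, M * proposal_pdf(x))  # step 2: y ~ U(0, M q(x))
        if y < target_pdf(x):                      # step 3: accept, otherwise retry
            accepted.append(x)
    return accepted

samples = rejection_sample(10_000)
print(sum(samples) / len(samples))  # should be near 0.5, the mean of Beta(2, 2)
```

A looser envelope (larger M) still gives correct samples but rejects more proposals, so in practice M is chosen as small as the constraint allows.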

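The method works because the uniform distribution lets us scale the "envelope" provided by Mq(x) down to the probability density p(x). Another way to see it is to look at the probability of obtaining a particular sample point x0. That probability is proportional to the probability of drawing x0 from q multiplied by the fraction of times we accept it, and this fraction is simply the ratio of p(x0) to Mq(x0).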
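The same argument can be written out as a short derivation, assuming p and q are normalized densities and p(x) ≤ Mq(x) everywhere. The probability of accepting a proposed x, and the overall acceptance rate, are

$$
P(\text{accept} \mid x) = \frac{p(x)}{M q(x)}, \qquad
P(\text{accept}) = \int q(x)\,\frac{p(x)}{M q(x)}\,dx = \frac{1}{M},
$$

so the density of the accepted samples is

$$
f(x \mid \text{accept}) = \frac{q(x)\,\dfrac{p(x)}{M q(x)}}{1/M} = p(x).
$$

Under these assumptions the accepted samples follow p exactly, and on average M proposals are needed per accepted sample.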

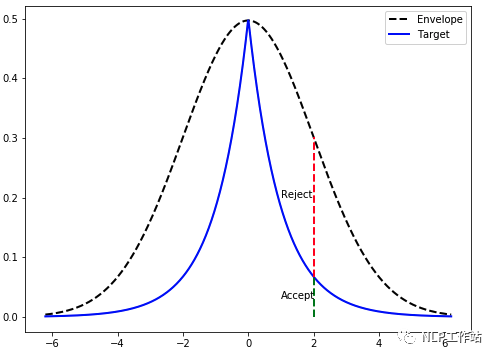

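In the figure above, once we have drawn a sample from q(x) (x = 2 in this example), we draw from a uniform distribution whose range equals the height of Mq(x). If the drawn value falls within the height of the target probability density function, we accept it (shown in green); otherwise we reject it.

In the generative-model setting discussed here, rejection sampling fine-tuning usually means drawing K samples from a model that has already been fine-tuned (with SFT, or with PPO, for example). A reject/accept function then filters the generated samples, keeping those that match our target distribution, and the model is fine-tuned again on the kept samples.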

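Related work

Rejection sampling is a simple yet effective technique for augmenting fine-tuning, and it is also used to align LLMs with human preferences.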

WebGPT: Browser-assisted question-answering with human feedback

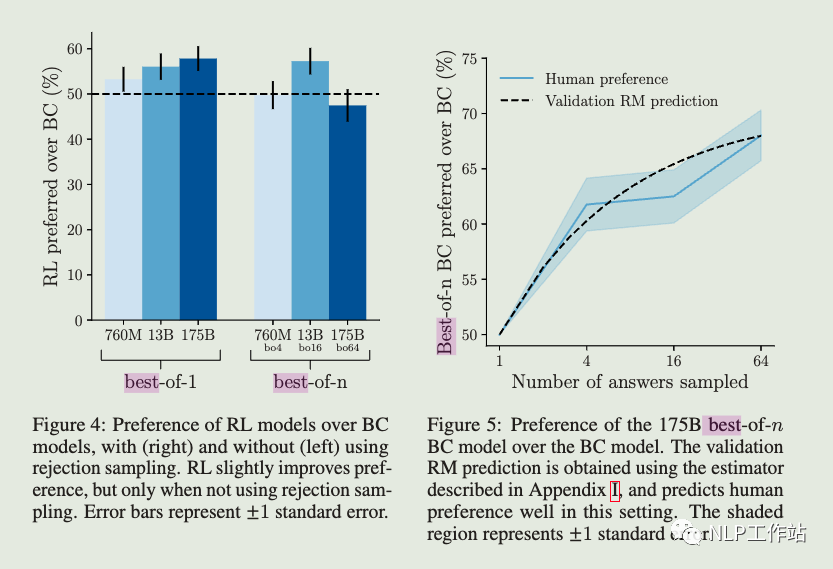

Rejection sampling (best-of-n). We sampled a fixed number of answers (4, 16 or 64) from either the BC model or the RL model (if left unspecified, we used the BC model), and selected the one that was ranked highest by the reward model. We used this as an alternative method of optimizing against the reward model, which requires no additional training, but instead uses more inference-time compute.

Even though both rejection sampling and RL optimize against the same reward model, there are several possible reasons why rejection sampling outperforms RL:

- 1. It may help to have many answering attempts, simply to make use of more inference-time compute.

- 2. The environment is unpredictable: with rejection sampling, the model can try visiting many more websites, and then evaluate the information it finds with the benefit of hindsight.

- 3. The reward model was trained primarily on data collected from BC and rejection sampling policies, which may have made it more robust to overoptimization by rejection sampling than by RL.

- 4. RL requires hyperparameter tuning, whereas rejection sampling does not.

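In short, WebGPT uses rejection sampling only at inference time and does not fine-tune on the rejection-sampled outputs. The authors compared RL with rejection sampling, found that rejection sampling performed better, and offered several explanations. The one I find most convincing is that, unlike RL, rejection sampling needs no hyperparameter tuning and is therefore more robust.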
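Best-of-n selection as described in the WebGPT excerpt can be sketched in a few lines. The `generate` and `reward` callables below are hypothetical stand-ins for a policy model and a reward model; the toy versions only exist to make the example runnable and are not how WebGPT is implemented.

```python
import random
from typing import Callable

def best_of_n(prompt: str,
              generate: Callable[[str], str],
              reward: Callable[[str, str], float],
              n: int = 16) -> str:
    """Sample n candidate answers and return the one the reward function scores highest."""
    candidates = [generate(prompt) for _ in range(n)]
    return max(candidates, key=lambda answer: reward(prompt, answer))

# Toy stand-ins (hypothetical): a "policy" that emits answers of random length
# and a "reward model" that simply prefers longer answers.
rng = random.Random(0)

def toy_generate(prompt: str) -> str:
    return "answer " + "x" * rng.randint(1, 20)

def toy_reward(prompt: str, answer: str) -> float:
    return float(len(answer))

print(best_of_n("What is rejection sampling?", toy_generate, toy_reward, n=4))
```

No parameters are updated here: all of the extra cost is paid at inference time, as n forward generations plus n reward-model calls per prompt.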

Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback

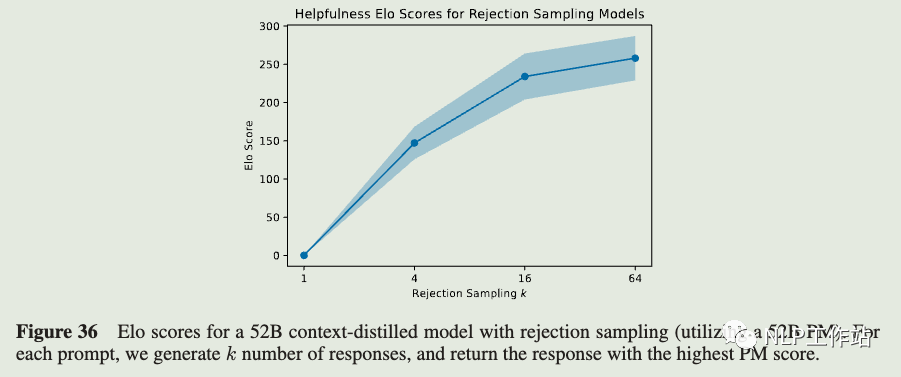

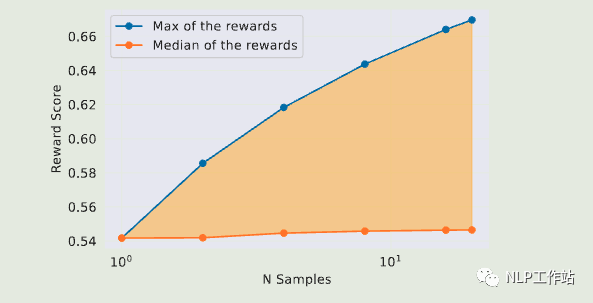

Rejection Sampling (RS) with a 52B preference model, where samples were generated from a 52B context-distilled LM. In this case the number k of samples was a parameter, but most often we used k = 16.

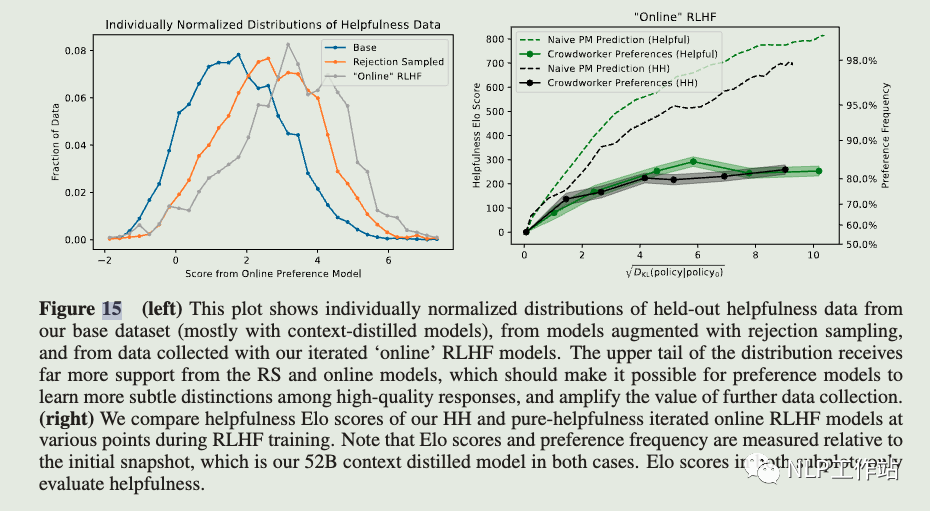

We also test our online models' performance during training (Figure 15), compare various levels of rejection sampling.

In Figure 36 we show helpfulness Elo scores for a 52B context distilled model with rejection sampling (utilizing a 52B preference model trained on pure helpfulness) for k = 1, 4, 16, 64, showing that higher values of k clearly perform better. Note that the context distilled model and the preference models discussed here were trained during an earlier stage of our research with different datasets and settings from those discussed elsewhere in the paper, so they are not directly comparable with other Elo results, though very roughly and heuristically, our online models seem to perform about as well or better than k = 64 rejection sampling. Note that k = 64 rejection sampling corresponds to DKL = log(64) ≈ 4.2.

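To summarize: rejection sampling is again used only at inference time, and a larger K gives better results. The online RLHF models appear to perform about as well as or better than k = 64 rejection sampling.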
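As a quick check of the KL figure quoted for k = 64, assuming the logarithm is the natural log (which is what reproduces the quoted 4.2):

$$
D_{\mathrm{KL}} = \log 64 = 6 \log 2 \approx 6 \times 0.693 \approx 4.16 \approx 4.2 .
$$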

Aligning Large Language Models through Synthetic Feedback

An important additional component is that we leverage the synthetic RM from the previous stage to ensure the quality of the model-to-model conversations with rejection sampling over the generated outputs (Ouyang et al., 2022). We train LLaMA-7B on the synthetic demonstrations (SFT) and further optimize the model with rewards from the synthetic RM, namely, Reinforcement Learning from Synthetic Feedback (RLSF).

To ensure a more aligned response from the assistant, we suggest including the synthetic RM, trained in the first stage, in the loop, namely Reward-Model-guided Self-Play (RMSP). In this setup, the assistant model, LLaMA-30B-Faithful-3shot, first samples N responses for a given conversational context. Then, the RM scores the N responses, and the best-scored response is chosen as the final response for the simulation, i.e., the RM performs rejection sampling (best-of-N sampling) (Nakano et al., 2021; Ouyang et al., 2022). Other procedures are the same as the Self-Play. Please see Figure 8 for the examples.

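Unlike the previous two papers, this one does fine-tune on the data obtained through rejection sampling. It uses in-context learning (ICL) to have models of different scales generate responses to each prompt and, under the assumption that larger models answer better than smaller ones, builds preference data from which an RM is trained. It then applies rejection sampling: the RM picks the highest-scoring response for each context to form a training set, and the model is trained on that set with SFT.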
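The preference-data step can be illustrated with a small sketch. It assumes only what the summary above states, namely that responses can be ordered by the assumed quality of the model that produced them; the function name, the dictionary keys, and the sample strings are hypothetical, and the paper's actual pipeline may differ.

```python
from itertools import combinations

def build_preference_pairs(prompt: str, ranked_responses: list[str]) -> list[dict]:
    """Turn responses ordered from assumed-best to assumed-worst into (chosen, rejected) pairs."""
    pairs = []
    # combinations() keeps input order, so `better` always comes from the
    # generator that is assumed to be stronger than the one behind `worse`.
    for better, worse in combinations(ranked_responses, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs

# Hypothetical responses from a large, a medium and a small model, in that order.
pairs = build_preference_pairs(
    "How do I boil an egg?",
    ["response from large model",
     "response from medium model",
     "response from small model"],
)
print(len(pairs))  # 3 ranked responses give 3 pairs
```

Pairs built this way need no human labels, which is the point of the synthetic-feedback setup, but they are only as reliable as the ordering assumption itself.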

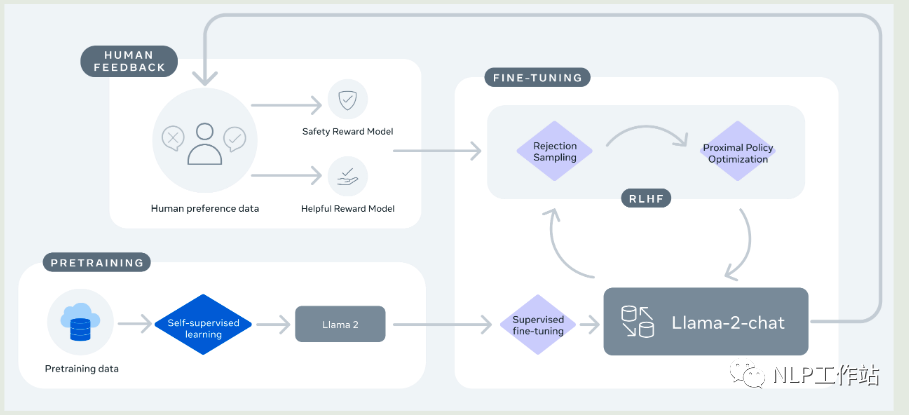

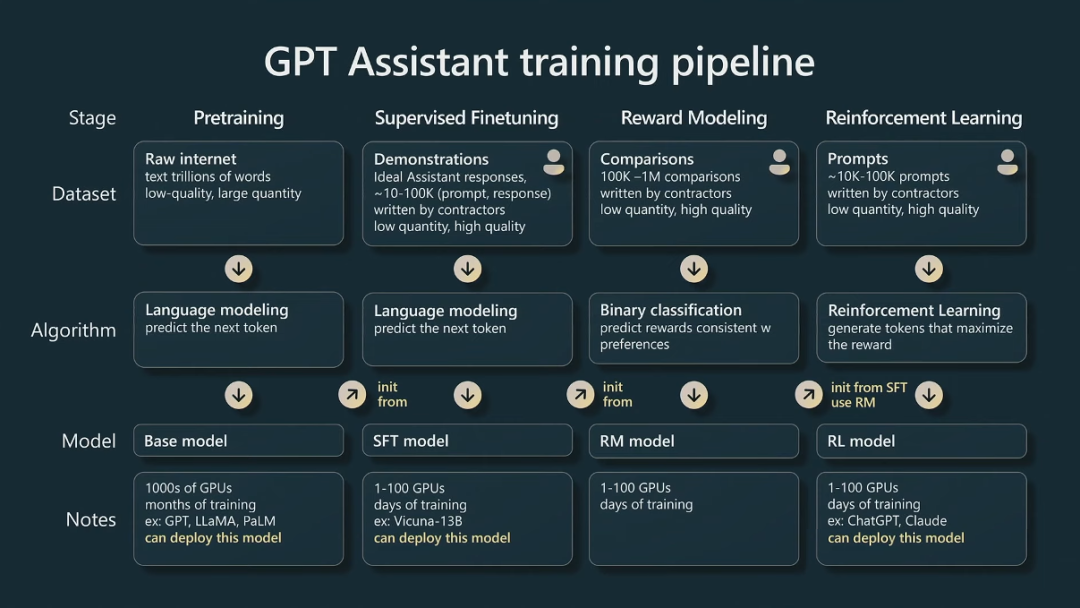

Llama 2: Open Foundation and Fine-Tuned Chat Models

This process begins with the pretraining of Llama 2 using publicly available online sources. Following this, we create an initial version of Llama 2-Chat through the application of supervised fine-tuning. Subsequently, the model is iteratively refined using Reinforcement Learning with Human Feedback (RLHF) methodologies, specifically through rejection sampling and Proximal Policy Optimization (PPO). Throughout the RLHF stage, the accumulation of iterative reward modeling data in parallel with model enhancements is crucial to ensure the reward models remain within distribution.

Rejection Sampling fine-tuning. We sample K outputs from the model and select the best candidate with our reward, consistent with Bai et al. (2022b). The same re-ranking strategy for LLMs was also proposed in Deng et al. (2019), where the reward is seen as an energy function. Here, we go one step further, and use the selected outputs for a gradient update. For each prompt, the sample obtaining the highest reward score is considered the new gold standard. Similar to Scialom et al. (2020a), we then fine-tune our model on the new set of ranked samples, reinforcing the reward.

The two RL algorithms mainly differ in:

- Breadth — in Rejection Sampling, the model explores K samples for a given prompt, while only one generation is done for PPO.

- Depth — in PPO, during training at step t the sample is a function of the updated model policy from t − 1 after the gradient update of the previous step. In Rejection Sampling fine-tuning, we sample all the outputs given the initial policy of our model to collect a new dataset, before applying the fine-tuning similar to SFT. However, since we applied iterative model updates, the fundamental differences between the two RL algorithms are less pronounced.

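To summarize, the RLHF baselines used are PPO and rejection sampling (RS) fine-tuning (similar to best-of-N sampling). PPO is the most popular on-policy RL algorithm (arguably a form of trial-and-error learning). The key passage is: "Here, we go one step further, and use the selected outputs for a gradient update. For each prompt, the sample obtaining the highest reward score is considered the new gold standard. Similar to Scialom et al. (2020a), we then fine-tune our model on the new set of ranked samples, reinforcing the reward."

This shows that Llama 2 runs SFT-style training on the samples selected by the RM through rejection sampling, updating the policy model with gradient steps. In the author's reading, they also treat the rejection-sampled outputs as gold and retrain the RM from an older checkpoint, reinforcing the RM's reward. The author therefore believes that rejection sampling fine-tuning here iterates on both the SFT model and the RM.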
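The "one step further" in the quoted passage, selecting the best of K outputs and then training on it, repeated over several rounds, can be sketched as a loop. Everything below is a toy under stated assumptions: `rs_finetune` and its callbacks are hypothetical names, the "policy" is a single number, and "fine-tuning" just moves that number to the mean of the selected outputs. It is not the Llama 2 training code.

```python
import random
from typing import Any, Callable

def rs_finetune(policy: Any,
                prompts: list[str],
                sample: Callable[[Any, str], Any],
                reward: Callable[[str, Any], float],
                fine_tune: Callable[[Any, list], Any],
                k: int = 8,
                rounds: int = 2) -> Any:
    """Each round: sample k outputs per prompt, keep the top-reward one, fine-tune on the kept set."""
    for _ in range(rounds):
        dataset = []
        for prompt in prompts:
            # All outputs in a round come from the policy as it was at the start of the round.
            outputs = [sample(policy, prompt) for _ in range(k)]
            best = max(outputs, key=lambda out: reward(prompt, out))
            dataset.append((prompt, best))
        policy = fine_tune(policy, dataset)  # SFT-style update on the selected outputs
    return policy

# Toy stand-ins (all hypothetical): an "output" is a noisy draw around the policy value,
# the "reward" is the output itself, and "fine-tuning" averages the selected outputs.
rng = random.Random(0)
toy_sample = lambda policy, prompt: rng.gauss(policy, 1.0)
toy_reward = lambda prompt, out: out
toy_fine_tune = lambda policy, data: sum(out for _, out in data) / len(data)

final_policy = rs_finetune(0.0, [f"prompt {i}" for i in range(20)],
                           toy_sample, toy_reward, toy_fine_tune)
print(final_policy)  # expected to end above the starting value of 0.0
```

The contrast with PPO drawn in the excerpt is visible in the structure: a whole dataset is collected from a frozen policy before any update, whereas PPO updates after each batch of single generations.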

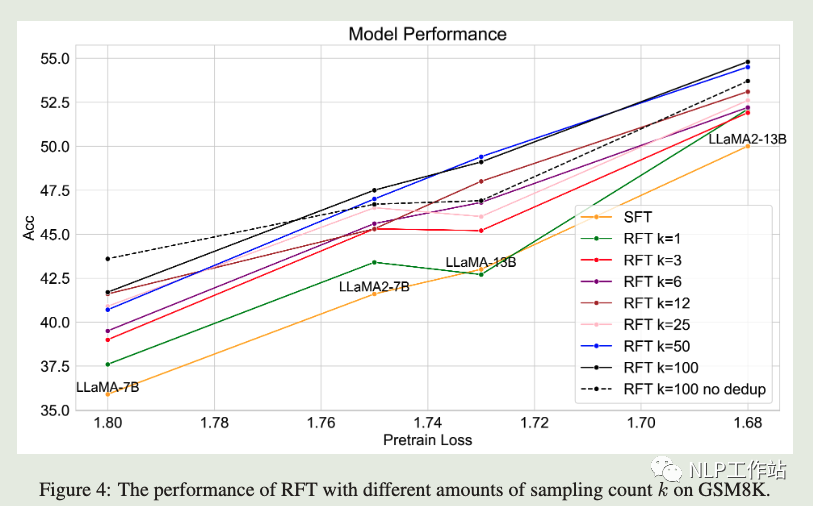

Scaling Relationship on Learning Mathematical Reasoning with Large Language Models

To augment more data samples for improving model performances without any human effort, we propose to apply Rejection sampling Fine-Tuning (RFT). RFT uses supervised models to generate and collect correct reasoning paths as augmented fine-tuning datasets. We find with augmented samples containing more distinct reasoning paths, RFT improves mathematical reasoning performance more for LLMs. We also find RFT brings more improvement for less performant LLMs. Furthermore, we combine rejection samples from multiple models which push LLaMA-7B to an accuracy of 49.3% and outperforms the supervised fine-tuning (SFT) accuracy of 35.9% significantly.

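In the figure, the RFT models show a clear improvement over the SFT models on GSM8K.

In short, to add training data without any human effort, the authors propose rejection sampling fine-tuning (RFT): supervised models generate reasoning paths, and the correct ones are collected as an augmented fine-tuning dataset. Augmented samples that contain more distinct reasoning paths improve mathematical reasoning more, and weaker LLMs benefit more from RFT. Combining rejection samples from several models pushes LLaMA-7B to 49.3% accuracy, well above the 35.9% of supervised fine-tuning (SFT). Note that, unlike the approaches above, which use an RM to pick the best response, the rejection step here compares the answer in each model response with the correct answer and keeps the responses whose reasoning reaches the correct result.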
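The answer-matching filter can be sketched as below. Two things are assumptions of this example rather than details taken from the paper: the final answer is read from the text after the last `####` marker (the convention used in GSM8K solutions), and "distinct reasoning paths" is simplified to exact-text deduplication. The function names are hypothetical.

```python
def extract_answer(reasoning_path: str) -> str:
    """Assumed convention: the final answer follows the last '####' marker."""
    return reasoning_path.rsplit("####", 1)[-1].strip()

def rft_filter(question: str, gold_answer: str, sampled_paths: list[str]) -> list[dict]:
    """Keep sampled reasoning paths whose final answer is correct, dropping exact duplicates."""
    kept, seen = [], set()
    for path in sampled_paths:
        if extract_answer(path) != gold_answer:
            continue  # reject: the final answer is wrong
        if path in seen:
            continue  # reject: an identical reasoning path was already kept
        seen.add(path)
        kept.append({"question": question, "response": path})
    return kept

paths = [
    "2 + 3 = 5 #### 5",
    "3 + 2 = 5 #### 5",
    "2 + 3 = 6 #### 6",   # wrong answer, rejected
    "2 + 3 = 5 #### 5",   # duplicate of the first path, rejected
]
augmented = rft_filter("What is 2 + 3?", "5", paths)
print(len(augmented))  # 2 distinct correct paths are kept
```

Because the accept rule is a plain comparison with the reference answer, no reward model is needed, but a path can still be kept when its reasoning is flawed and the final answer happens to be right.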

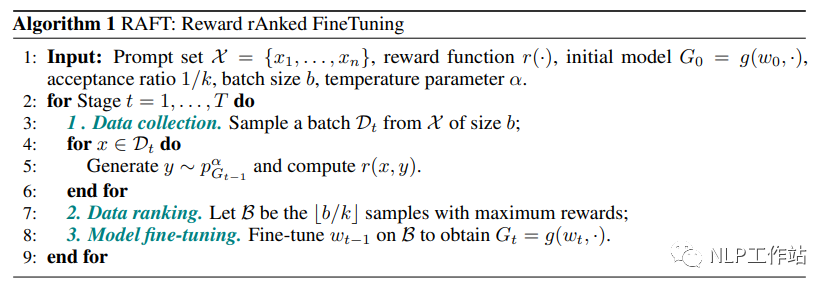

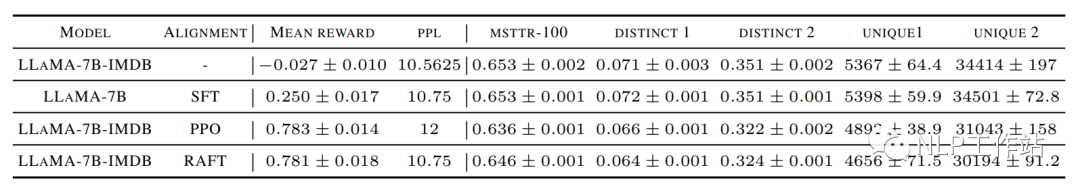

RAFT: Reward rAnked FineTuning for Generative Foundation Model Alignment

However, the inefficiencies and instabilities associated with RL algorithms frequently present substantial obstacles to the successful alignment of generative models, necessitating the development of a more robust and streamlined approach. To this end, we introduce a new framework, Reward rAnked FineTuning (RAFT), designed to align generative models more effectively. Utilizing a reward model and a sufficient number of samples, our approach selects the high-quality samples, discarding those that exhibit undesired behavior, and subsequently assembles a streaming dataset. This dataset serves as the basis for aligning the generative model and can be employed under both offline and online settings. Notably, the sample generation process within RAFT is gradient-free, rendering it compatible with black-box generators. Through extensive experiments, we demonstrate that our proposed algorithm exhibits strong performance in the context of both large language models and diffusion models.

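Summary and reflections

Rejection sampling passes the output distribution of the SFT model through a reject/accept function (a reward model or a heuristic rule), which yields a distribution of high-quality responses and improves the final output. In the results cited above, a larger number of samples K gave better results, at the price of more sampling compute.

Within the RLHF framework, rejection sampling fine-tuning serves two purposes. First, it improves the SFT model itself. PPO usually needs the old and new policies to stay close in distribution, so a better SFT model to start PPO from matters for PPO as well. Second, the rejection-sampled data can be used to iterate on the old reward model and strengthen its reward signal, which is also important for PPO's final performance and for further iteration.

Finally, for chain-of-thought (CoT) ability, rejection sampling supplies more reasoning paths for the model to learn from, which is likewise very valuable.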


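Original title: LLM Training Tricks Series: Rejection Sampling (LLM大模型訓練Trick系列之拒絕采樣)

Source: WeChat ID zenRRan, WeChat official account 深度學習自然語言處理 (Deep Learning Natural Language Processing).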
